Configuring VCF fabric

Overview

IT infrastructure, which comprises clouds, networks, and terminal devices, is undergoing a deep transformation. It is migrating to the cloud to enable elastic expansion of computing resources and on-demand delivery of IT services. In this context, H3C developed the Virtual Converged Framework (VCF) solution. This solution breaks down the boundaries between the network, cloud management, and terminal platforms and transforms the IT infrastructure into a converged framework that accommodates all applications. It also implements automated network provisioning and deployment.

VCF fabric topology

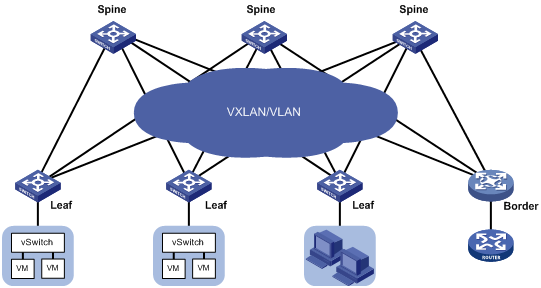

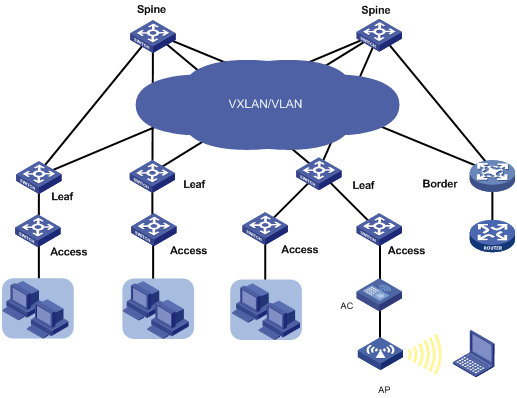

In a VCF fabric, a device has one of the following roles:

· Spine node—Connects to leaf nodes.

· Leaf node—As shown in Figure 1, a leaf node connects to a server in a typical data center network. As shown in Figure 2, a leaf node connects to an access node in a typical campus network.

· Access node—As shown in Figure 2, an access node connects to an upstream leaf node and downstream terminal devices in a typical campus network. Cascading of access nodes is supported.

· Border node—Located at the border of a VCF fabric to provide access to the external network.

Spine nodes and leaf nodes form a large Layer 2 network, which can be a VLAN, a VXLAN with centralized IP gateways, or a VXLAN with distributed IP gateways. For more information about centralized IP gateways and distributed IP gateways, see VXLAN Configuration Guide.

Figure 1 VCF fabric topology for a data center network

Figure 2 VCF fabric topology for a campus network

Neutron concepts and components

Neutron is a component in the OpenStack architecture. It provides networking services for VMs, manages virtual network resources (including networks, subnets, DHCP, and virtual routers), and creates an isolated virtual network for each tenant. Neutron provides a unified network resource model, on which VCF fabric is based.

The following are basic concepts in Neutron:

· Network—A virtual object that can be created. It provides an independent network for each tenant in a multitenant environment. A network is equivalent to a switch with virtual ports which can be dynamically created and deleted.

· Subnet—An address pool that contains a group of IP addresses. Two different subnets communicate with each other through a router.

· Port—A connection port. A router or a VM connects to a network through a port.

· Router—A virtual router that can be created and deleted. It performs routing selection and data forwarding.
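The relationships among these concepts can be illustrated with a minimal sketch. The class and field names below are hypothetical and chosen only for illustration; they are not the Neutron API or its database schema. The sketch shows one rule from the list above: two subnets can communicate only through a router that has a port on each of them.

```python
from dataclasses import dataclass, field
from ipaddress import IPv4Network


@dataclass
class Subnet:
    name: str
    cidr: IPv4Network  # the address pool of the subnet


@dataclass
class Port:
    owner: str      # hypothetical label: the VM or router attached through this port
    subnet: Subnet


@dataclass
class Network:
    name: str
    tenant: str     # each tenant gets an independent network
    subnets: list = field(default_factory=list)
    ports: list = field(default_factory=list)  # ports are created and deleted dynamically


@dataclass
class Router:
    name: str
    ports: list = field(default_factory=list)

    def connects(self, a: Subnet, b: Subnet) -> bool:
        """Return True if the router has a port on both subnets."""
        attached = {p.subnet.name for p in self.ports}
        return a.name in attached and b.name in attached
```

For example, a router with ports on subnet-1 (10.10.1.0/24) and subnet-2 (10.1.1.0/24) lets VMs on the two subnets reach each other, which is the arrangement used in the configuration example of this guide.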

Neutron has the following components:

· Neutron server—Includes the daemon process neutron-server and multiple plug-ins (neutron-*-plugin). The Neutron server provides an API and forwards API calls to the configured plug-in. The plug-in maintains configuration data and relationships between routers, networks, subnets, and ports in the Neutron database.

· Plug-in agent (neutron-*-agent)—Processes data packets on virtual networks. The choice of plug-in agents depends on the Neutron plug-ins in use. A plug-in agent interacts with the Neutron server and the configured Neutron plug-in through a message queue.

· DHCP agent (neutron-dhcp-agent)—Provides DHCP services for tenant networks.

· L3 agent (neutron-l3-agent)—Provides Layer 3 forwarding services to enable inter-tenant communication and external network access.

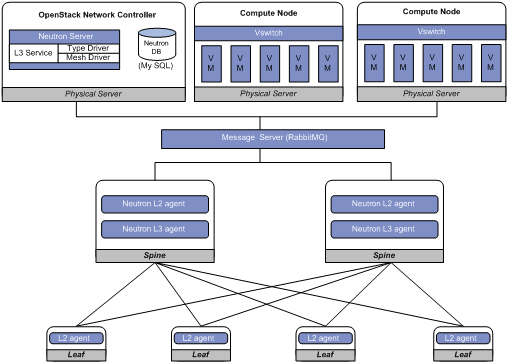

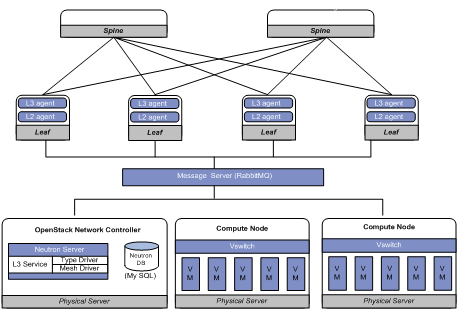

Neutron deployment

Neutron needs to be deployed on servers and network devices.

The following table shows Neutron deployment on a server.

| Node | Neutron components |
|---|---|
| Controller node | Neutron server; Neutron DB; Message server (such as RabbitMQ server); H3C ML2 Driver (for more information about H3C ML2 Driver, see H3C Neutron ML2 Driver Installation Guide) |
| Network node | neutron-openvswitch-agent; neutron-dhcp-agent |
| Compute node | neutron-openvswitch-agent; LLDP |

The following table shows Neutron deployments on a network device.

| Network type | Network device | Neutron components |
|---|---|---|
| Centralized gateway deployment | Spine | neutron-l2-agent; neutron-l3-agent |
| Centralized gateway deployment | Leaf | neutron-l2-agent |
| Distributed gateway deployment | Spine | N/A |
| Distributed gateway deployment | Leaf | neutron-l2-agent; neutron-l3-agent |

Figure 3 Example of Neutron deployment for centralized gateway deployment

Figure 4 Example of Neutron deployment for distributed gateway deployment
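The agent placement on network devices can be expressed as a small lookup, which is handy when checking a planned fabric. This is a sketch only: the keys "centralized" and "distributed" and the function names are illustrative labels, not device keywords.

```python
# Agent placement on network devices: (network type, device role) -> agents.
AGENTS = {
    ("centralized", "spine"): ("neutron-l2-agent", "neutron-l3-agent"),
    ("centralized", "leaf"): ("neutron-l2-agent",),
    ("distributed", "spine"): (),  # N/A: no Neutron agent on the spine
    ("distributed", "leaf"): ("neutron-l2-agent", "neutron-l3-agent"),
}


def agents_for(network_type: str, role: str) -> tuple:
    """Return the Neutron agents that run on a device with the given role."""
    try:
        return AGENTS[(network_type, role)]
    except KeyError:
        raise ValueError(f"unsupported combination: {network_type}/{role}") from None


def gateway_roles(network_type: str) -> list:
    """Return the roles that run the L3 agent, that is, host the IP gateways."""
    return sorted(
        role
        for (nt, role), agents in AGENTS.items()
        if nt == network_type and "neutron-l3-agent" in agents
    )
```

With this mapping, `gateway_roles("centralized")` yields the spine role and `gateway_roles("distributed")` yields the leaf role, matching the two deployment models.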

Restrictions and guidelines: VCF fabric configuration

All VCF fabric commands are supported only on the default MDC.

Automated VCF fabric provisioning and deployment

VCF provides the following features to ease deployment:

· Automatic topology discovery.

· Automated underlay network provisioning.

· Automated overlay network deployment.

Topology discovery

In a VCF fabric, each device uses LLDP to collect local topology information from directly-connected peer devices. The local topology information includes connection interfaces, roles, MAC addresses, and management interface addresses of the peer devices.

If multiple spine nodes exist in a VCF fabric, a master spine node is specified to collect the topology for the entire network.

Automated underlay network provisioning

An underlay network is a physical Layer 3 network. An overlay network is a virtual network built on top of the underlay network. The mainstream overlay technology is VXLAN. For more information about VXLAN, see VXLAN Configuration Guide.

Automated underlay network provisioning sets up a Layer 3 underlay network for users. It is implemented by automatically executing configurations (such as Layer 3 reachability configurations) in user-defined template files.

Provisioning prerequisites

Before you start automated underlay network provisioning, complete the following tasks:

1. Finish the underlay network planning (such as IP address assignment, reliability design, and routing deployment) based on user requirements.

¡ In a data center network, the device obtains an IP address through a management Ethernet interface after starting up without loading configuration.

¡ In a campus network, the device obtains an IP address through VLAN-interface 1 after starting up without loading configuration.

2. Install and configure the Director server.

This step is required if you want to use the Director server to automatically create template files.

3. Configure the DHCP server, the TFTP server, and the NTP server.

4. Upload the template files to the TFTP server.

Template files for different roles in the VCF fabric are the basis of automatic provisioning. A template file name ends in .template. The Director server automatically creates template files for different device roles in the network topology. The following are available template types:

¡ Template for a leaf node in a VLAN.

¡ Template for a leaf node in a VXLAN with a centralized gateway.

¡ Template for a leaf node in a VXLAN with distributed gateways.

¡ Template for a spine node in a VLAN.

¡ Template for a spine node in a VXLAN with a centralized gateway.

¡ Template for a spine node in a VXLAN with distributed gateways.

Process of automated underlay network provisioning

The device finishes automated underlay network provisioning as follows:

1. Starts up without loading configuration.

2. Obtains an IP address, the IP address of the TFTP server, and a template file name from the DHCP server.

3. Downloads tag file device_tag.csv from the TFTP server and compares its SN code with the SN code in the tag file.

¡ If the two SN codes are the same, the device uses the device role and system description in the tag file.

¡ If the two SN codes are different, the device does not use the device role and system description.

4. Determines the name of the template file to download based on the following information:

¡ The device role in the tag file or the default device role.

¡ The template file name obtained from the DHCP server.

For example, template file dist_leaf.template is for a leaf node in a VXLAN network with distributed IP gateways.

5. Downloads the template file from the TFTP server.

6. Parses the template file and performs the following operations:

¡ Deploys static configurations that are independent from the VCF fabric topology.

¡ Deploys dynamic configurations according to the VCF fabric topology.

- In a data center network, the device usually uses a management Ethernet interface to connect to the fabric management network. Only links between leaf nodes and servers are automatically aggregated.

- In a campus network, the device uses VLAN-interface 1 to connect to the fabric management network. Links between two access nodes cascaded through GigabitEthernet interfaces and links between leaf nodes and access nodes are automatically aggregated. For links between spine nodes and leaf nodes, the trunk permit vlan command is automatically executed.

¡ Automatically enables PoE if the device is an access node.

NOTE:

· On a data center network, if the template file contains software version information, the device first compares that version with its current software version. If the two versions are inconsistent, the device downloads the new software version and upgrades its software. After restarting, the device executes the configurations in the template file.

· After all configurations in the template file are executed, use the save command to save the configurations to a configuration file.
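Steps 3 and 4 of the process can be sketched as follows. Two things here are assumptions, because this guide does not document them: the column names of device_tag.csv (`sn`, `role`, `description`) and the rule that joins the DHCP-supplied file name with the role (`vxlan.template` plus `leaf` gives `vxlan_leaf.template`). The default role of spine is taken from the configuration procedure in this guide.

```python
import csv
import io

DEFAULT_ROLE = "spine"  # default device role stated in this guide


def role_from_tag_file(tag_csv: str, local_sn: str) -> tuple:
    """Return (role, description) for the device with serial number local_sn.

    The column names sn/role/description are hypothetical.
    """
    for row in csv.DictReader(io.StringIO(tag_csv)):
        if row["sn"].strip() == local_sn:
            # SN codes match: use the role and description from the tag file.
            return row["role"].strip(), row["description"].strip()
    # No matching SN code: the tag file entry is not used.
    return DEFAULT_ROLE, ""


def template_name(dhcp_boot_file: str, role: str) -> str:
    """Combine the DHCP-supplied name with the role (assumed naming rule)."""
    suffix = ".template"
    if not dhcp_boot_file.endswith(suffix):
        raise ValueError("a template file name ends in .template")
    stem = dhcp_boot_file[: -len(suffix)]
    return f"{stem}_{role}{suffix}"
```

A device whose SN code is missing from the tag file falls back to the default role, so it would request the spine template under the assumed naming rule.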

Template file

A template file contains the following:

· System-predefined variables—The variable names cannot be edited, and the variable values are set by the VCF topology discovery feature.

· User-defined variables—The variable names and values are defined by the user. These variables include the username and password used to establish a connection with the Neutron server, network type, and so on. The following are examples of user-defined variables:

#USERDEF

_underlayIPRange = 10.100.0.0/16

_master_spine_mac = 1122-3344-5566

_backup_spine_mac = aabb-ccdd-eeff

_username = aaa

_password = aaa

_rbacUserRole = network-admin

_neutron_username = openstack

_neutron_password = 12345678

_neutron_ip = 172.16.1.136

_loghost_ip = 172.16.1.136

_network_type = centralized-vxlan

· Static configurations—Static configurations are independent from the VCF fabric topology and can be directly executed. The following are examples of static configurations:

#STATICCFG

#

clock timezone beijing add 08:00:00

#

lldp global enable

#

stp global enable

#

· Dynamic configurations—Dynamic configurations are dependent on the VCF fabric topology. The device first obtains the topology information and then executes dynamic configurations. The following are examples of dynamic configurations:

#

interface $$_underlayIntfDown

port link-mode route

ip address unnumbered interface LoopBack0

ospf 1 area 0.0.0.0

ospf network-type p2p

lldp management-address arp-learning

lldp tlv-enable basic-tlv management-address-tlv interface LoopBack0

#
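Before uploading a template file, the user-defined variables can be checked offline. The sketch below assumes that the `#USERDEF` section runs until the next line that starts with `#`, which matches the examples above but is not a documented grammar. The function names are hypothetical; the accepted network types are the keywords of the network-type command.

```python
import ipaddress

NETWORK_TYPES = {"centralized-vxlan", "distributed-vxlan", "vlan"}


def parse_userdef(template_text: str) -> dict:
    """Collect `_name = value` pairs that follow the #USERDEF marker."""
    variables = {}
    in_userdef = False
    for raw in template_text.splitlines():
        line = raw.strip()
        if line.startswith("#"):
            # Any marker line other than #USERDEF ends the section (assumption).
            in_userdef = line == "#USERDEF"
            continue
        if in_userdef and "=" in line:
            name, _, value = line.partition("=")
            variables[name.strip()] = value.strip()
    return variables


def check_variables(variables: dict):
    """Validate the underlay range and network type; return the parsed range."""
    underlay = ipaddress.ip_network(variables["_underlayIPRange"])
    if variables["_network_type"] not in NETWORK_TYPES:
        raise ValueError(f"unknown network type: {variables['_network_type']}")
    return underlay
```

The returned network object can then be used to confirm that planned Loopback0 addresses, such as 10.100.16.17, fall inside `_underlayIPRange`.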

Automated overlay network deployment

Automated overlay network deployment covers VXLAN deployment and EVPN deployment.

Automated overlay network deployment is mainly implemented through the following features of Neutron:

· Layer 2 agent (L2 agent)—Responds to OpenStack events such as network creation, subnet creation, and port creation. It deploys Layer 2 networking to provide Layer 2 connectivity within a virtual network and Layer 2 isolation between different virtual networks.

· Layer 3 agent (L3 agent)—Responds to OpenStack events such as virtual router creation, interface creation, and gateway configuration. It deploys the IP gateways to provide Layer 3 forwarding services for VMs.

For the device to correctly communicate with the Neutron server through the RabbitMQ server, you need to configure the RabbitMQ server parameters. The parameters include the IP address of the RabbitMQ server, the username and password to log in to the RabbitMQ server, and the listening port.
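Because the device and the controller node must agree on these parameters, it can help to hold them in one structure and compare both sides. The following is a minimal sketch: the class and function names are hypothetical, the defaults are the ones listed in the configuration procedure of this guide, and the AMQP URI is shown only as a compact way to print the settings, not as something the device itself consumes.

```python
from dataclasses import dataclass
from urllib.parse import quote


@dataclass
class RabbitSettings:
    host: str
    port: int = 5672          # default listening port in this guide
    user: str = "guest"       # default username in this guide
    password: str = "guest"   # default plaintext password in this guide
    virtual_host: str = "/"   # default virtual host in this guide

    def amqp_uri(self) -> str:
        """Build an AMQP URI; the virtual host "/" is percent-encoded as %2F."""
        return (
            f"amqp://{quote(self.user, safe='')}:{quote(self.password, safe='')}"
            f"@{self.host}:{self.port}/{quote(self.virtual_host, safe='')}"
        )


def same_broker(device: RabbitSettings, controller: RabbitSettings) -> bool:
    """Return True if the device and the controller node use identical settings."""
    return device == controller
```

A mismatch in any field, including the virtual host, makes `same_broker` return False, which mirrors the requirement that both ends specify the same RabbitMQ settings.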

Configuration restrictions and guidelines

VCF fabric is supported only on devices that operate in standalone mode.

Typically, the device completes both automated underlay network provisioning and automated overlay network deployment by downloading and executing the template file. You do not need to manually configure the device by using commands. If the device needs to complete only automated overlay network deployment, you can use related commands in "Enabling VCF fabric topology discovery" and "Configuring automated overlay network deployment." No template file is required.

VCF fabric configuration task list

| Tasks at a glance |
|---|
| (Required.) Enabling VCF fabric topology discovery |
| (Optional.) Configuring automated underlay network provisioning |
| (Optional.) Configuring automated overlay network deployment |

Enabling VCF fabric topology discovery

Configuration restrictions and guidelines

VCF fabric topology discovery can be automatically enabled by executing configurations in the template file or be manually enabled at the CLI. The device uses LLDP to collect topology data of directly-connected devices. Make sure you have enabled LLDP on the device before you manually enable VCF fabric topology discovery.

Configuration procedure

| Step | Command | Remarks |
|---|---|---|
| 1. Enter system view. | system-view | N/A |
| 2. Enable LLDP globally. | lldp global enable | By default, LLDP is disabled globally. |
| 3. Enable VCF fabric topology discovery. | vcf-fabric topology enable | By default, VCF fabric topology discovery is disabled. |

Configuring automated underlay network provisioning

Configuration restrictions and guidelines

When you configure automated underlay network provisioning, follow these restrictions and guidelines:

· Automated underlay network provisioning can be completed automatically after the device starts up. To change the automated underlay network provisioning on a running device, download the new template file through TFTP, and then execute the vcf-fabric underlay autoconfigure command to manually specify the template file on the device.

· As a best practice, do not modify the network type or the device role while the device is running. If it is necessary to do so, make sure you understand the impacts on the network and services.

· If you change the role of the device, the new role takes effect after the device restarts.

· User-defined MDCs do not have a role by default. You can use the vcf-fabric role { access | leaf | spine } command to specify the role for the MDCs. Automated underlay network provisioning is not supported by user-defined MDCs. The Director server can identify the role of user-defined MDCs in the VCF fabric and deploy configuration to the MDCs through NETCONF.

Configuration procedure

| Step | Command | Remarks |
|---|---|---|
| 1. Enter system view. | system-view | N/A |
| 2. (Optional.) Specify the role of the device in the VCF fabric. | vcf-fabric role { access \| leaf \| spine } | By default, the role of the device in the VCF fabric is spine. |
| 3. Specify the template file for automated underlay network provisioning. | vcf-fabric underlay autoconfigure template | By default, no template file is specified for automated underlay network provisioning. |
| 4. (Optional.) Pause automated underlay network provisioning. | vcf-fabric underlay pause | By default, automated underlay network provisioning is not paused. |
| 5. (Optional.) Configure the device as a master spine node. | vcf-fabric spine-role master | By default, the device is not a master spine node. |
| 6. (Optional.) Enable Neutron and enter Neutron view. | neutron | By default, Neutron is disabled. |
| 7. (Optional.) Specify the network type. | network-type { centralized-vxlan \| distributed-vxlan \| vlan } | By default, the network type is VLAN. |

Configuring automated overlay network deployment

Configuration restrictions and guidelines

When you configure automated overlay network deployment, follow these restrictions and guidelines:

· If the network type is VLAN or VXLAN with a centralized IP gateway, perform this task on both the spine node and the leaf nodes.

· If the network type is VXLAN with distributed IP gateways, perform this task on all leaf nodes.

· Make sure the RabbitMQ server settings on the device are the same as those on the controller node. If the durable attribute of RabbitMQ queues is set on the Neutron server, you must enable creation of RabbitMQ durable queues on the device so that RabbitMQ queues can be correctly created.

· When you set the RabbitMQ server parameters or remove the settings, make sure the routes between the device and the RabbitMQ servers are reachable. Otherwise, the CLI does not respond until the TCP connections between the device and the RabbitMQ servers are terminated.

· Multiple virtual hosts might exist on a RabbitMQ server. Each virtual host can independently provide RabbitMQ services for the device. For the device to correctly communicate with the Neutron server, specify the same virtual host on the device and the Neutron server.

· As a best practice, do not perform any of the following tasks while the device is communicating with a RabbitMQ server:

¡ Change the source IPv4 address for the device to communicate with RabbitMQ servers.

¡ Bring up or shut down a port connected to a RabbitMQ server.

If you do so, it will take the CLI a long time to respond to the l2agent enable, undo l2agent enable, l3agent enable, or undo l3agent enable command.

Configuration procedure

| Step | Command | Remarks |
|---|---|---|
| 1. Enter system view. | system-view | N/A |
| 2. Enable Neutron and enter Neutron view. | neutron | By default, Neutron is disabled. |
| 3. Specify the IPv4 address, port number, and MPLS L3VPN instance of a RabbitMQ server. | rabbit host ip ipv4-address [ port port-number ] [ vpn-instance vpn-instance-name ] | By default, no IPv4 address or MPLS L3VPN instance of a RabbitMQ server is specified, and the port number of a RabbitMQ server is 5672. |
| 4. Specify the source IPv4 address for the device to communicate with RabbitMQ servers. | rabbit source-ip ipv4-address [ vpn-instance vpn-instance-name ] | By default, no source IPv4 address is specified for the device to communicate with RabbitMQ servers. The device automatically selects a source IPv4 address through the routing protocol to communicate with RabbitMQ servers. |
| 5. (Optional.) Enable creation of RabbitMQ durable queues. | rabbit durable-queue enable | By default, RabbitMQ non-durable queues are created. |
| 6. Configure the username used by the device to establish a connection with the RabbitMQ server. | rabbit user username | By default, the device uses username guest to establish a connection with the RabbitMQ server. |
| 7. Configure the password used by the device to establish a connection with the RabbitMQ server. | rabbit password { cipher \| plain } string | By default, the device uses plaintext password guest to establish a connection with the RabbitMQ server. |
| 8. Specify a virtual host to provide RabbitMQ services. | rabbit virtual-host hostname | By default, the virtual host / provides RabbitMQ services for the device. |
| 9. Specify the username and password used by the device to deploy configurations through RESTful. | restful user username password { cipher \| plain } password | By default, no username or password is configured for the device to deploy configurations through RESTful. |
| 10. (Optional.) Enable the Layer 2 agent. | l2agent enable | By default, the Layer 2 agent is disabled. |
| 11. (Optional.) Enable the Layer 3 agent. | l3agent enable | By default, the Layer 3 agent is disabled. |
| 12. (Optional.) Configure export targets for a tenant VPN instance. | vpn-target target export-extcommunity | By default, no export targets are configured for a tenant VPN instance. |
| 13. (Optional.) Configure import route targets for a tenant VPN instance. | vpn-target target import-extcommunity | By default, no import route targets are configured for a tenant VPN instance. |
| 14. (Optional.) Specify the IPv4 address of the border gateway. | gateway ip ipv4-address | By default, the IPv4 address of the border gateway is not specified. |
| 15. (Optional.) Configure the device as a border node. | border enable | By default, the device is not a border node. |
| 16. (Optional.) Enable local proxy ARP. | proxy-arp enable | By default, local proxy ARP is disabled. |
| 17. (Optional.) Configure the MAC address of VSI interfaces. | vsi-mac mac-address | By default, no MAC address is configured for VSI interfaces. |

Displaying and maintaining VCF fabric

Execute display commands in any view.

| Task | Command |
|---|---|
| Display the role of the device in the VCF fabric. | display vcf-fabric role |
| Display VCF fabric topology information. | display vcf-fabric topology |
| Display information about automated underlay network provisioning. | display vcf-fabric underlay autoconfigure |
| Display the supported version and the current version of the template file for automated VCF fabric provisioning. | display vcf-fabric underlay template-version |

Automated VCF fabric configuration example

Network requirements

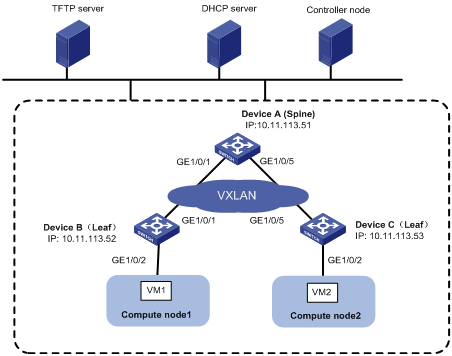

As shown in Figure 5, Devices A, B, and C all connect to the TFTP server and the DHCP server through management Ethernet interfaces. VM 1 resides on Compute node 1. VM 2 resides on Compute node 2. The controller node runs OpenStack Kilo on the Ubuntu 14.04 LTS operating system.

Configure a VCF fabric to meet the following requirements:

· The VCF fabric is a VXLAN network deployed on spine node Device A and leaf nodes Device B and Device C to provide connectivity between VM 1 and VM 2. Device A acts as a centralized VXLAN IP gateway.

· Devices A, B, and C complete automated underlay network provisioning by using template files after they start up.

· Devices A, B, and C complete automated overlay network deployment after the controller is configured.

· The DHCP server dynamically assigns IP addresses on subnet 10.11.113.0/24.

Configuration procedure

Configuring the DHCP server

1. Configure a DHCP address pool to dynamically assign IP addresses on subnet 10.11.113.0/24 to the devices.

2. Specify 10.11.113.19/24 as the IP address of the TFTP server.

3. Specify a template file (a file with the file extension .template) as the boot file.

Creating template files

Create template files and upload them to the TFTP server.

Typically, a template file includes the following contents:

· System-predefined variables—Used internally by the system. The names of user-defined variables must not duplicate the names of system-predefined variables.

· User-defined variables—Defined by the user. User-defined variables include the following:

¡ Basic settings: Local username and password, user role, and so on.

¡ Neutron server settings: IP address of the Neutron server, the username and password for establishing a connection with the Neutron server, and so on.

· Software images for upgrade and the URL to download the software images.

· Configuration commands—Include commands independent from the topology (such as LLDP, NTP, and SNMP) and commands dependent on the topology (such as interfaces and Neutron settings).

Configuring the TFTP server

Place the template files on the TFTP server. In this example, both spine node and leaf node exist on the VXLAN network, so two template files (vxlan_spine.template and vxlan_leaf.template) are required.

Powering up Device A, Device B, and Device C

After starting up without loading configuration, Device A, Device B, and Device C each automatically download a template file to finish automated underlay network provisioning. In this example, Device A downloads the template file vxlan_spine.template, and Device B and Device C download the template file vxlan_leaf.template.

Configuring the controller node

1. Install OpenStack Neutron related components:

a. Install Neutron, Image, Dashboard, Networking, and RabbitMQ.

b. Install H3C ML2 Driver. For more information, see H3C Neutron ML2 Driver Installation Guide.

c. Configure LLDP.

2. Configure the network as a VXLAN network:

Edit the /etc/neutron/plugins/ml2/ml2_conf.ini file as follows:

a. Add the h3c_vxlan type driver to the type driver list.

type_drivers = h3c_vxlan

b. Add h3c to the mechanism driver list.

mechanism_drivers = openvswitch, h3c

c. Specify h3c_vxlan as the default tenant network type.

tenant_network_types = h3c_vxlan

d. Add the [ml2_type_h3c_vxlan] section, and specify a VXLAN ID range in the format of vxlan-id1:vxlan-id2. The value range for VXLAN IDs is 0 to 16777215.

[ml2_type_h3c_vxlan]

vni_ranges = 10000:60000

3. Configure the database:

Before you configure the database, make sure you have configured the Neutron server.

[openstack@localhost ~]$ sudo h3c_config db_sync

4. Restart the Neutron server:

[root@localhost ~]# service neutron-server restart
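The vni_ranges value from step 2 can be sanity-checked before the Neutron server is restarted. The sketch below validates the vxlan-id1:vxlan-id2 format against the VXLAN ID value range given in this guide. Accepting several comma-separated ranges follows the behavior of the standard ML2 VXLAN type driver and is an assumption for the H3C driver; the function name is hypothetical.

```python
VNI_MIN, VNI_MAX = 0, 16777215  # value range for VXLAN IDs given in this guide


def parse_vni_ranges(value: str) -> list:
    """Parse 'id1:id2[,id3:id4]' into validated (low, high) tuples."""
    ranges = []
    for part in value.split(","):
        low_s, sep, high_s = part.strip().partition(":")
        if not sep:
            raise ValueError(f"expected vxlan-id1:vxlan-id2, got {part!r}")
        low, high = int(low_s), int(high_s)
        if not (VNI_MIN <= low <= high <= VNI_MAX):
            raise ValueError(
                f"range {low}:{high} is reversed or outside {VNI_MIN} to {VNI_MAX}"
            )
        ranges.append((low, high))
    return ranges
```

For the range used in this example, `parse_vni_ranges("10000:60000")` returns a single tuple, and a VXLAN ID such as 10071 falls inside it.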

Configuring the compute nodes

Perform the following tasks on both Compute node 1 and Compute node 2.

1. Install the OpenStack Nova related components, openvswitch, and neutron-ovs-agent.

2. Create an OVS bridge named br-vlan and add interface Ethernet 0 (connected to the VCF fabric) to the bridge.

# ovs-vsctl add-br br-vlan

# ovs-vsctl add-port br-vlan eth0

3. Modify the bridge mapping on the OVS agent:

# Edit the /etc/neutron/plugins/ml2/openvswitch_agent.ini file as follows:

[ovs]

bridge_mappings = vlanphy:br-vlan

4. Restart the neutron-openvswitch-agent.

# service neutron-openvswitch-agent restart

Verifying the OpenStack environment

Perform the following tasks on the OpenStack dashboard.

1. Create a network named Network.

2. Create subnets:

# Create a subnet named subnet-1 and assign network address range 10.10.1.0/24 to the subnet. (Details not shown.)

# Create a subnet named subnet-2, and assign network address range 10.1.1.0/24 to the subnet. (Details not shown.)

In this example, VM 1 and VM 2 obtain IP addresses from the DHCP server. You must enable DHCP for the subnets.

3. Create a router named router. Bind a port on the router with subnet subnet-1 and then bind another port with subnet subnet-2. (Details not shown.)

4. Create VMs:

# Create VM 1 and VM 2 on Compute node 1 and Compute node 2, respectively. (Details not shown.)

In this example, VM 1 and VM 2 obtain IP addresses 10.10.1.3/24 and 10.1.1.3/24 from the DHCP server, respectively.

Verifying the configuration

1. Verify the collected topology of the underlay network.

# Display VCF fabric topology information on Device A.

[DeviceA] display vcf-fabric topology

Topology Information

----------------------------------------------------------------------------------

* indicates the master spine role among all spines

SpineIP Interface Link LeafIP Status

*10.11.113.51 GigabitEthernet1/0/1 Up 10.11.113.52 Deploying

GigabitEthernet1/0/2 Down -- --

GigabitEthernet1/0/3 Down -- --

GigabitEthernet1/0/4 Down -- --

GigabitEthernet1/0/5 Up 10.11.113.53 Deploying

GigabitEthernet1/0/6 Down -- --

GigabitEthernet1/0/7 Down -- --

GigabitEthernet1/0/8 Down -- --

2. Verify the automated configuration for the underlay network.

# Display information about automated underlay network provisioning on Device A.

[DeviceA] display vcf-fabric underlay autoconfigure

success command:

#

system

clock timezone beijing add 08:00:00

#

system

lldp global enable

#

system

stp global enable

#

system

ospf 1

graceful-restart ietf

area 0.0.0.0

#

system

interface LoopBack0

#

system

ip vpn-instance global

route-distinguisher 1:1

vpn-target 1:1 import-extcommunity

#

system

l2vpn enable

#

system

vxlan tunnel mac-learning disable

vxlan tunnel arp-learning disable

#

system

ntp-service enable

ntp-service unicast-peer 10.11.113.136

#

system

netconf soap http enable

netconf soap https enable

restful http enable

restful https enable

#

system

ip http enable

ip https enable

#

system

telnet server enable

#

system

info-center loghost 10.11.113.136

#

system

local-user aaa

password ******

service-type telnet http https

service-type ssh

authorization-attribute user-role network-admin

#

system

line vty 0 63

authentication-mode scheme

user-role network-admin

#

system

bgp 100

graceful-restart

address-family l2vpn evpn

undo policy vpn-target

#

system

vcf-fabric topology enable

#

system

neutron

rabbit user openstack

rabbit password ******

rabbit host ip 10.11.113.136

restful user aaa password ******

network-type centralized-vxlan

vpn-target 1:1 export-extcommunity

l2agent enable

l3agent enable

#

system

snmp-agent

snmp-agent community read public

snmp-agent community write private

snmp-agent sys-info version all

#interface up-down:

GigabitEthernet1/0/1

GigabitEthernet1/0/5

Loopback0 IP Allocation:

DEV_MAC LOOPBACK_IP MANAGE_IP STATE

a43c-adae-0400 10.100.16.17 10.11.113.53 up

a43c-9aa7-0100 10.100.16.15 10.11.113.51 up

a43c-a469-0300 10.100.16.16 10.11.113.52 up

bgp configure peer:

10.100.16.17

10.100.16.16

3. Verify the automated deployment for the overlay network.

# Display the running configuration for the current VSI on Device A.

[DeviceA] display current-configuration configuration vsi

#

vsi vxlan10071

gateway vsi-interface 8190

vxlan 10071

evpn encapsulation vxlan

route-distinguisher auto

vpn-target auto export-extcommunity

vpn-target auto import-extcommunity

#

return

[DeviceA] display current-configuration interface Vsi-interface

#

interface Vsi-interface8190

ip binding vpn-instance neutron-1024

ip address 11.1.1.1 255.255.255.0 sub

ip address 10.10.1.1 255.255.255.0 sub

#

return

[DeviceA] display ip vpn-instance

Total VPN-Instances configured : 1

VPN-Instance Name RD Create time

neutron-1024 1024:1024 2016/03/12 00:25:59

4. Verify the connectivity between VM 1 and VM 2.

# Ping VM 2 on Compute node 2 from VM 1 on Compute node 1.

$ ping 10.1.1.3

Ping 10.1.1.3 (10.1.1.3): 56 data bytes, press CTRL_C to break

56 bytes from 10.1.1.3: icmp_seq=0 ttl=254 time=10.000 ms

56 bytes from 10.1.1.3: icmp_seq=1 ttl=254 time=4.000 ms

56 bytes from 10.1.1.3: icmp_seq=2 ttl=254 time=4.000 ms

56 bytes from 10.1.1.3: icmp_seq=3 ttl=254 time=3.000 ms

56 bytes from 10.1.1.3: icmp_seq=4 ttl=254 time=3.000 ms

--- Ping statistics for 10.1.1.3 ---

5 packet(s) transmitted, 5 packet(s) received, 0.0% packet loss

round-trip min/avg/max/std-dev = 3.000/4.800/10.000/2.638 ms
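The topology check in step 1 can be automated once the command output has been captured as text. The sketch below is written against the sample output shown in this example only: it assumes whitespace-separated columns and that the link column always reads Up or Down. The function names are hypothetical, and how the output is collected from the device is out of scope.

```python
def parse_topology(output: str) -> list:
    """Return one dict per interface row of `display vcf-fabric topology` output."""
    rows = []
    for line in output.splitlines():
        tokens = line.split()
        # Interface rows have 4 columns, or 5 when the row also carries the spine IP.
        # Title, separator, legend, and header lines fail this check and are skipped.
        if len(tokens) not in (4, 5) or tokens[-3] not in ("Up", "Down"):
            continue
        interface, link, leaf_ip, status = tokens[-4:]
        rows.append(
            {
                "interface": interface,
                "link": link,
                "leaf_ip": None if leaf_ip == "--" else leaf_ip,
                "status": None if status == "--" else status,
            }
        )
    return rows


def connected_leaves(rows: list) -> list:
    """Return the leaf IP addresses reachable over links that are up."""
    return [r["leaf_ip"] for r in rows if r["link"] == "Up" and r["leaf_ip"]]
```

Applied to the output in step 1, `connected_leaves` would list the two leaf nodes, 10.11.113.52 and 10.11.113.53, which can then be compared with the planned fabric.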