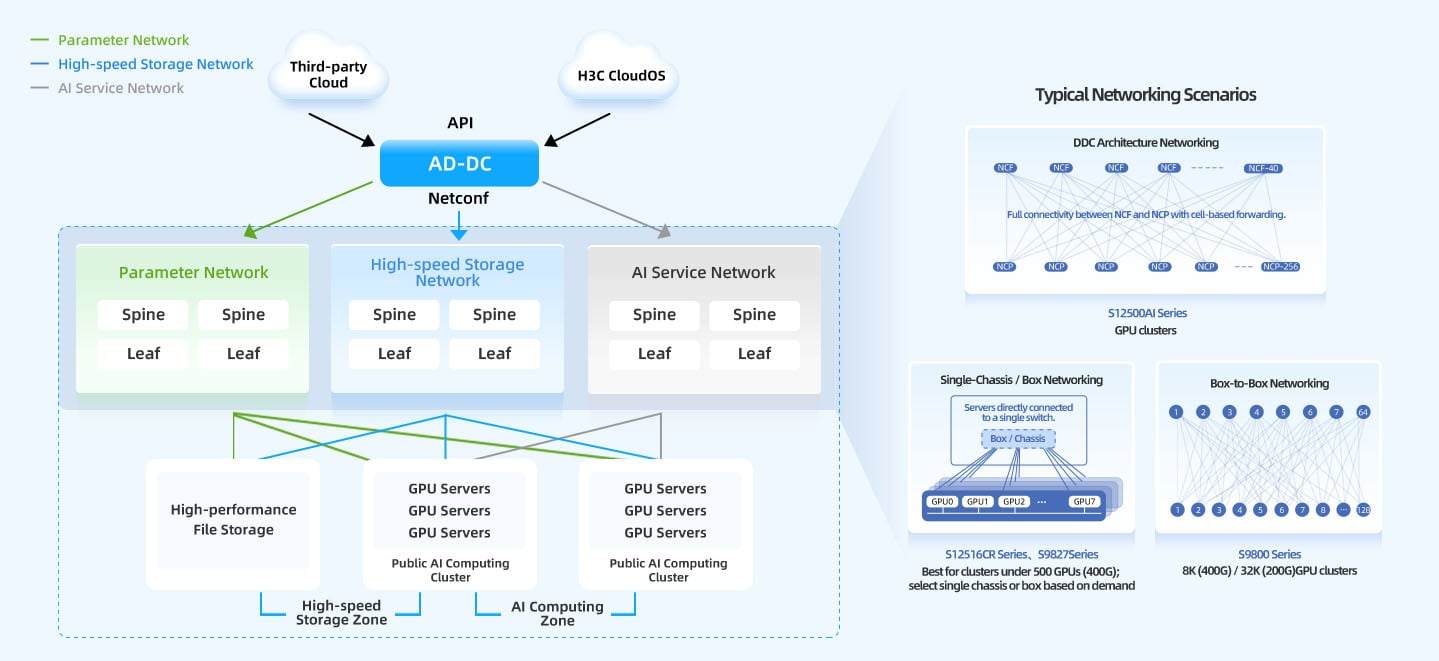

AI Computing Network Solution

Next-Gen Lossless Networking

Massive Connection Scale

- Scale: Support for clusters of 100K+ GPUs.
- Speed: 400G/800G high-speed networking has become a baseline requirement.
- Capacity: The port density of a single device limits the maximum cluster size.
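To see why port density caps cluster size, the standard fat-tree arithmetic is a useful reference. The sketch below is a generic illustration, not H3C's sizing method. It assumes identical switches with `radix` ports of the same speed, one network port per GPU, and no oversubscription; the function names are hypothetical.

```python
def two_tier_hosts(radix: int) -> int:
    """Max GPUs on a non-blocking two-tier leaf-spine fabric.

    Each leaf uses radix/2 ports for GPUs and radix/2 for spine uplinks;
    each spine port reaches a different leaf, so there are at most `radix` leaves.
    """
    _check(radix)
    return radix * (radix // 2)


def three_tier_hosts(radix: int) -> int:
    """Max GPUs on a non-blocking three-tier k-ary fat tree: k**3 / 4."""
    _check(radix)
    return radix ** 3 // 4


def _check(radix: int) -> None:
    # Fat-tree formulas assume an even port count split evenly up and down.
    if radix < 2 or radix % 2:
        raise ValueError("radix must be an even number >= 2")


if __name__ == "__main__":
    for radix in (32, 64, 128):
        print(f"{radix}-port switches: two-tier {two_tier_hosts(radix):,} GPUs, "
              f"three-tier {three_tier_hosts(radix):,} GPUs")
```

Under these assumptions, 64-port switches top out at 2,048 GPUs in two tiers and 65,536 in three, while 128-port switches reach 8,192 and 524,288. Doubling the radix quadruples the two-tier size and multiplies the three-tier size by eight, which is why per-device port density matters so much at 100K-GPU scale.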

Low Connection Efficiency

- Bottlenecks: Packet loss and traffic bursts limit compute efficiency.
- MoE Pressure: Surges of All-to-All traffic from Mixture-of-Experts (MoE) models strain the network.
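The All-to-All pressure comes from expert parallelism: during token dispatch, every GPU sends activations to the other GPUs that host the experts selected for its tokens. The back-of-the-envelope sketch below assumes uniform routing and one expert shard per GPU, and it counts the dispatch phase only (the combine phase returns a similar volume). The class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MoEAllToAll:
    """Back-of-the-envelope model of one MoE dispatch All-to-All (hypothetical helper)."""
    gpus: int                # expert-parallel group size N
    tokens_per_gpu: int      # tokens T held by each GPU before dispatch
    hidden: int              # hidden size H of each token's activation
    top_k: int               # experts each token is routed to
    bytes_per_elem: int = 2  # 2 bytes for BF16/FP16 activations

    def flows(self) -> int:
        # Every GPU exchanges data with every other GPU: N * (N - 1) flows.
        return self.gpus * (self.gpus - 1)

    def payload_per_gpu(self) -> int:
        # Bytes of token copies produced on one GPU (T * k * H * bytes).
        return self.tokens_per_gpu * self.top_k * self.hidden * self.bytes_per_elem

    def bytes_sent_per_gpu(self) -> float:
        # Uniform routing: on average (N - 1) / N of the copies target a remote GPU.
        return self.payload_per_gpu() * (self.gpus - 1) / self.gpus


if __name__ == "__main__":
    for n in (8, 64):
        a2a = MoEAllToAll(gpus=n, tokens_per_gpu=4096, hidden=4096, top_k=2)
        print(f"N={n}: {a2a.flows():,} flows, "
              f"{a2a.bytes_sent_per_gpu() / 2**20:.1f} MiB sent per GPU")
```

With these example values (4,096 tokens, hidden size 4,096, top-2, BF16), each of 8 GPUs sends 56 MiB over 56 concurrent flows. At 64 GPUs the flow count rises to 4,032 while each GPU sends 63 MiB of its 64 MiB payload, all at roughly the same moment, which is the burst the network has to absorb.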

Low Connection Availability

- Deployment: Manual provisioning is slow and inefficient.
- Visibility: Monitoring is lacking, and faults are difficult to trace back.

model evolution with hyper-scale, lossless networking.

Hyper-Scale

16x Capacity: Delivers 16x the industry-standard networking capacity per POD.

10K-GPU Support: Seamlessly scales to support clusters of 10,000+ GPUs.

Ultra-Efficiency

20% Boost: Proprietary "Multi-rail + Path Navigation" technology increases training efficiency by 20%.

IB-Level Performance: The Distributed Disaggregated Chassis (DDC) architecture achieves 100% load balancing, matching InfiniBand (IB) performance.
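One way to read the "100% load balancing" claim: DDC fabrics typically spray fixed-size cells across all fabric links instead of hashing each flow onto a single path, as ECMP does. The toy model below compares the two for equal-size flows. It is an idealized illustration, not H3C's implementation; it models the ECMP hash as a seeded random choice, and the function names are hypothetical.

```python
import random


def ecmp_loads(num_flows: int, num_links: int, flow_size: float, seed: int = 0) -> list[float]:
    """Per-flow hashing: each whole flow is pinned to one uplink.

    The hash is modeled as a seeded random choice (an assumption for illustration).
    """
    rng = random.Random(seed)
    loads = [0.0] * num_links
    for _ in range(num_flows):
        loads[rng.randrange(num_links)] += flow_size
    return loads


def sprayed_loads(num_flows: int, num_links: int, flow_size: float) -> list[float]:
    """Per-cell spraying: every flow is chopped into cells spread evenly over all uplinks."""
    total = num_flows * flow_size
    return [total / num_links] * num_links


def imbalance(loads: list[float]) -> float:
    """Busiest-link load divided by mean load; 1.0 means perfectly balanced."""
    mean = sum(loads) / len(loads)
    return max(loads) / mean


if __name__ == "__main__":
    flows, links, size = 16, 8, 1.0  # e.g. 16 large training flows over 8 uplinks
    print("ECMP imbalance:    ", round(imbalance(ecmp_loads(flows, links, size)), 2))
    print("Spraying imbalance:", round(imbalance(sprayed_loads(flows, links, size)), 2))
```

An imbalance of 1.0 means the busiest link carries exactly the average load. With a small number of large flows, as in AI training, hash collisions typically push the ECMP figure above 1.0, and the busiest link then sets the completion time for the whole collective.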

Superior Operability

10x Faster: Integrated deployment improves provisioning speed and reliability by 10x.

Full Visibility: Real-time tracking and fault backtracking for accelerated troubleshooting.