
Contents

Appendix A Server specifications

Server models and chassis view

Front panel view of the server

Appendix B Component specifications

DRAM DIMM rank classification label

Front 4LFF SAS/SATA drive backplane

Front 8SFF UniBay drive backplane

Front 2SFF UniBay drive backplane

Rear 2SFF UniBay drive backplane

Appendix C Managed removal of OCP network adapters

Appendix D Environment requirements

About environment requirements

General environment requirements

Operating temperature requirements

10SFF and 8SFF drive configuration

4LFF, 10SFF, and 8SFF drive configuration

Appendix A Server specifications

The information in this document might differ from your product if it contains custom configuration options or features.

Figures in this document are for illustration only.

Server models and chassis view

H3C UniServer R4700 G6 servers are 1U rack servers with two Intel Eagle Stream processors, designed to meet customer requirements for space-efficient and economical deployment. The servers are widely used in general computing scenarios, including databases, virtualization, and cloud desktops, and suit typical applications in industries such as the Internet, enterprise, and finance. They balance computing performance and scalability, and their space-optimized design simplifies deployment and management.

Figure 1 Chassis view

The servers come in the models listed in Table 1. These models support different drive configurations. For more information about drive configuration and compatible storage controller configuration, see H3C UniServer R4700 G6 Server Drive Configurations and Cabling Solutions.

Table 1 R4700 G6 server models

| Model | Maximum drive configuration |
|---|---|
| LFF | 4LFF drives at the front + 2SFF drives at the rear |
| SFF | 10SFF drives at the front + 2SFF drives at the rear |
| E1.S | 32E1.S drives at the front |

Technical specifications

| Item | Specifications |
|---|---|
| Dimensions (H × W × D) | · Without a security bezel: LFF and SFF models: 42.9 × 434.6 × 777 mm (1.69 × 17.11 × 30.59 in); E1.S model: 42.9 × 434.6 × 835 mm (1.69 × 17.11 × 32.87 in) · With a security bezel: LFF and SFF models: 42.9 × 434.6 × 805 mm (1.69 × 17.11 × 31.69 in); E1.S model: 42.9 × 434.6 × 863 mm (1.69 × 17.11 × 33.98 in) |
| Max. weight | 30.5 kg (67.24 lb) |
| Processors | 2 × Intel Eagle Stream processors, with a maximum power consumption of 350 W per processor. Montage Jintide processors are also supported. |
| Memory | · A maximum of 32 DDR5 DIMMs at a maximum speed of 4800 MT/s · RDIMMs supported · A maximum capacity of 12 TB with two processors |
| Storage controllers | · Embedded VROC storage controller · High-performance standard storage controller · NVMe VROC module · Serial & DSD module (supports RAID 1) |
| Chipset | Intel® C741 |
| VGA chip | Aspeed AST2600 |
| Network connectors | · 1 × embedded 1 Gbps dedicated management port · A maximum of two OCP 3.0 network adapter connectors, providing 4 × 1 GE copper ports, 2 × 10 GE copper or fiber ports, 2 × 25 GE fiber ports, or 2 × 100 GE fiber ports · (Optional) Network adapter PCIe slots that support 1/10/25/100 GE network adapters, InfiniBand cards, and intelligent network adapters |
| Integrated graphics | The graphics chip (model AST2600) is integrated in the BMC management chip and provides a maximum resolution of 1920 × 1200@60Hz (32bpp), where: · 1920 × 1200: 1920 horizontal pixels and 1200 vertical pixels. · 60Hz: Screen refresh rate, 60 times per second. · 32bpp: Color depth. The higher the value, the more colors can be displayed. If you attach monitors to both the front and rear VGA connectors, only the monitor connected to the front VGA connector is available. |
| Connectors | · 2 × VGA connectors, one at the server front (not available for the E1.S model) and one at the server rear · 4 × USB connectors (one on the system board, two at the server rear, and one at the server front) · 1 × Type-C HDM dedicated management interface (at the server front) · 1 × serial port (optional) |
| Expansion slots | · 4 × PCIe slots (three PCIe 5.0 slots and one PCIe 4.0 slot) · 2 × OCP 3.0 network adapter slots · CXL 1.1 support |
| Optical drives | External USB optical drives |
| Power supplies | 2 × hot-swappable power supplies with 1+1 redundancy |
| Standards | CCC, UL, CE |
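The resolution terms defined in the Integrated graphics row can be combined in a short worked calculation. The following Python sketch is an illustration only. It computes the size of one uncompressed frame and the raw rate at which that frame is redrawn at 60 Hz. It assumes uncompressed frames and ignores blanking intervals, so it does not describe how the AST2600 actually uses its memory.

```python
# Worked arithmetic for the maximum AST2600 display mode listed above.
# Assumptions: uncompressed frames; blanking intervals are ignored.

H_PIXELS = 1920        # horizontal pixels
V_PIXELS = 1200        # vertical pixels
BITS_PER_PIXEL = 32    # color depth (32bpp)
REFRESH_HZ = 60        # screen refreshes per second


def frame_bytes(h: int, v: int, bpp: int) -> int:
    """Bytes needed to hold one uncompressed frame."""
    return h * v * bpp // 8


def scanout_bytes_per_second(h: int, v: int, bpp: int, hz: int) -> int:
    """Bytes read per second to redraw the full frame hz times."""
    return frame_bytes(h, v, bpp) * hz


if __name__ == "__main__":
    size = frame_bytes(H_PIXELS, V_PIXELS, BITS_PER_PIXEL)
    rate = scanout_bytes_per_second(H_PIXELS, V_PIXELS, BITS_PER_PIXEL, REFRESH_HZ)
    print(f"One frame: {size:,} bytes ({size / 2**20:.1f} MiB)")
    print(f"Scan-out: {rate:,} bytes per second")
```

For the listed mode, one frame is 9,216,000 bytes (about 8.8 MiB), and redrawing it 60 times per second reads 552,960,000 bytes per second.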

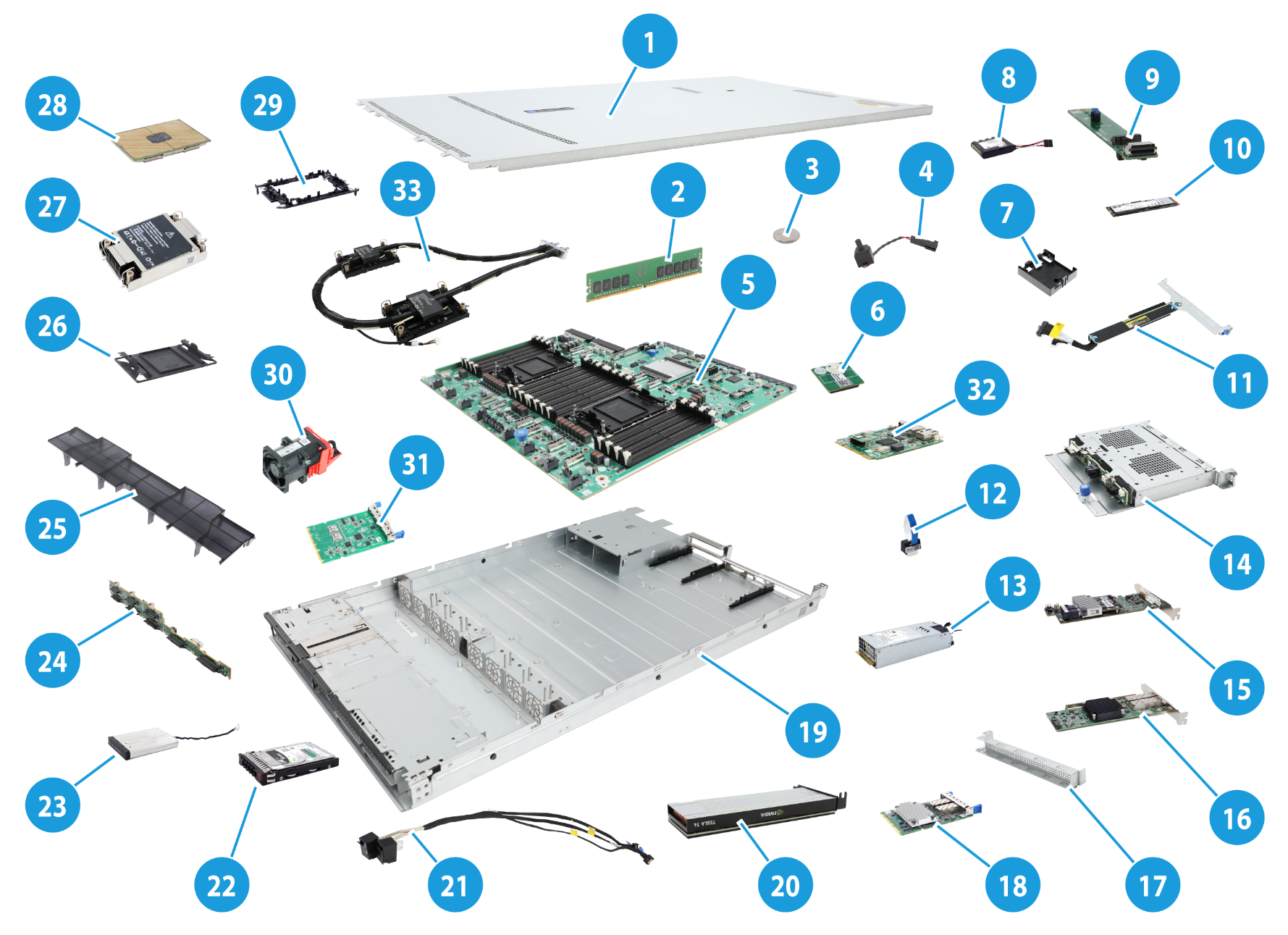

Components

Figure 2 R4700 G6 server components

| Item | Description |
|---|---|
| (1) Chassis access panel | N/A |
| (2) Memory | Temporarily stores computing data and data exchanged with external storage. DDR5 DIMMs are supported. |
| (3) System battery | Supplies power to the system clock to ensure system time correctness. |
| (4) Chassis open-alarm module | Detects if the access panel is removed. You can view the detection result from the HDM Web interface. |
| (5) System board | One of the most important parts of a server, on which multiple components, such as processors, memory, and fans, are installed. It integrates basic server components, including the BIOS chip and PCIe connectors. |
| (6) Encryption module | Provides encryption services for the server to enhance data security. |
| (7) Supercapacitor holder | Secures a supercapacitor in the chassis. |
| (8) Supercapacitor | Supplies power to the flash card on the power fail safeguard module, which enables the storage controller to back up data to the flash card for protection when a power outage occurs. |
| (9) SATA M.2 SSD expander module | Provides M.2 SSD slots. |
| (10) SATA M.2 SSD | Provides data storage space for the server. |
| (11) Riser card | Provides PCIe slots. |
| (12) NVMe VROC module | Works with Intel VMD to provide RAID capability for the server to virtualize storage resources of NVMe drives. |
| (13) Power supply | Supplies power to the server. The power supplies support hot swapping and 1+1 redundancy. |
| (14) Rear drive backplane | Provides power and data channels for drives at the server rear. |
| (15) Storage controller | Provides RAID capability to SAS/SATA drives, including RAID configuration and RAID scale-up. It supports online upgrade of the controller firmware and remote configuration. |
| (16) Standard PCIe network adapter | Installed in a standard PCIe slot to provide network ports. |
| (17) Riser card blank | Installed on an empty PCIe riser connector to ensure good ventilation. |
| (18) OCP adapter | Provides one slot for installing an OCP network adapter and two slots for installing drives at the server rear. |
| (19) Chassis | N/A |
| (20) GPU module | Provides computing services such as graphics processing and AI. |
| (21) Chassis ears | Attach the server to the rack. The right ear is integrated with the front I/O component, and the left ear is integrated with the VGA connector, HDM dedicated management connector, and USB 3.0 connector. |
| (22) Drive | Provides data storage space. Drives support hot swapping. |
| (23) LCD smart management module | Displays basic server information, operating status, and fault information. Used together with HDM event logs, it helps users quickly locate faulty components and troubleshoot the server. |
| (24) Drive backplane | Provides power and data channels for drives. |
| (25) Air baffle | Provides ventilation aisles for processor heatsinks and memory modules and provides support for the supercapacitor. |
| (26) Processor socket cover | Installed over an empty processor socket to protect pins in the socket. |
| (27) Processor heatsink | Cools the processor. |
| (28) Processor | Integrates memory and PCIe controllers to provide data processing capabilities for the server. |
| (29) Processor retaining bracket | Attaches a processor to the heatsink. |
| (30) Fan | Provides ventilation for the server. Fans support hot swapping and N+1 redundancy. |
| (31) Serial & DSD module | Provides one serial port and two SD card slots. |
| (32) Server management module | Provides I/O connectors and HDM out-of-band management features. |
| (33) Processor liquid-cooled module | Cools processors. |

Front panel

Front panel view of the server

Figure 3 8SFF front panel

Table 2 8SFF front panel description

| Item | Description |
|---|---|
| 1 | USB 2.0 connector |
| 2 | Serial label pull tab |
| 3 | 2SFF drives or PCIe modules and a riser card on PCIe riser connector 4 (optional) |
| 4 | VGA module (supports one VGA connector) (optional) |
| 5 | Drives or LCD smart management module (optional) |
| 6 | 8SFF drives |
| 7 | HDM dedicated management connector |

Figure 4 4LFF front panel

Table 3 4LFF front panel description

| Item | Description |
|---|---|
| 1 | USB 2.0 connector |
| 2 | Serial label pull tab |
| 3 | ODD blank |
| 4 | VGA module (supports one VGA connector) (optional) |
| 5 | 4LFF drives |
| 6 | HDM dedicated management connector |

LEDs and buttons

Figure 5 Front panel LEDs and buttons

Table 4 LEDs and buttons on the front panel

| Button/LED | Status |
|---|---|
| Power on/standby button and system power LED | · Steady green—The system has started. · Flashing green (1 Hz)—The system is starting. · Steady amber—The system is in standby state. · Off—No power is present. Possible reasons: no power source is connected; no power supplies are present; the installed power supplies are faulty; the system power cords are not connected correctly. |
| OCP 3.0 network adapter Ethernet port LED | · Steady green—A link is present on a port of an OCP 3.0 network adapter. · Flashing green (1 Hz)—A port on an OCP 3.0 network adapter is receiving or sending data. · Off—No link is present on any port of either OCP 3.0 network adapter. |
| Health LED | · Steady green—The system is operating correctly or a minor alarm is present. · Flashing green (4 Hz)—HDM is initializing. · Flashing amber (1 Hz)—A major alarm is present. · Flashing red (1 Hz)—A critical alarm is present. If a system alarm is present, log in to HDM to obtain more information about the system running status. |
| UID button LED | · Steady blue—UID LED is activated. You can activate the UID LED by using either of the following methods: pressing the UID button LED, or activating the UID LED from HDM. · Flashing blue: 1 Hz—The firmware is being upgraded or the system is being managed from HDM. Do not power off the server. 4 Hz—HDM is restarting. To restart HDM, press the UID button LED for eight seconds. · Off—UID LED is not activated. |

Security bezel light

The security bezel provides hardened security and uses lighting effects to show operating and health status, which helps with inspection and fault location. Figure 6 shows the default lighting effect.

Table 5 Security bezel effect light

| System status | Light status |
|---|---|
| Standby | Steady white: The system is in standby state. |
| Startup | · Beads light up white one after another from the middle—POST is in progress. · Beads light up white from the middle three times—POST has finished. |
| Running | · Breathing white (gradient at 0.2 Hz)—Normal state. The percentage of beads lit from the middle toward both sides of the security bezel indicates the system load: no load (less than 10%), light load (10% to 50%), medium load (50% to 80%), or heavy load (more than 80%). · Breathing white (gradient at 1 Hz)—A pre-alarm is present (applicable only to drive pre-failure). · Flashing amber (1 Hz)—A major alarm is present. · Flashing red (1 Hz)—A critical alarm is present (applicable only to drive errors). |
| UID | · All beads flash white (1 Hz)—The firmware is being upgraded or the system is being managed from HDM. Do not power off the server. · Some beads flash white (1 Hz)—HDM is restarting. |
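The load bands in the Running row can be sketched as a small classifier. In this Python sketch, the band labels are taken from Table 5, and the function name is hypothetical. The table does not say which band owns the shared boundary values. This sketch assumes that 10% and 50% belong to the higher band and that exactly 80% is still medium load, because heavy load is described as "more than 80%".

```python
def bezel_load_level(load_percent: float) -> str:
    """Map a system load percentage to the security bezel load band.

    Boundary handling is an assumption: 10 and 50 fall into the higher
    band, while 80 stays in "Medium load" (heavy load is "more than 80%").
    """
    if not 0 <= load_percent <= 100:
        raise ValueError("load_percent must be between 0 and 100")
    if load_percent < 10:
        return "No load"
    if load_percent < 50:
        return "Light load"
    if load_percent <= 80:
        return "Medium load"
    return "Heavy load"


print(bezel_load_level(65))  # Medium load
```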

Ports

Table 6 Ports on the front panel

| Port | Type | Description |
|---|---|---|
| VGA connector | DB-15 | Connects a display terminal, such as a monitor or KVM device. |
| USB connector | USB 3.0/2.0 | Connects the following devices: · USB flash drive. · USB keyboard or mouse. · USB optical drive for operating system installation. |
| HDM dedicated management connector | Type-C | Connects a Type-C to USB adapter cable, which connects to a USB Wi-Fi adapter or USB drive. |

Rear panel

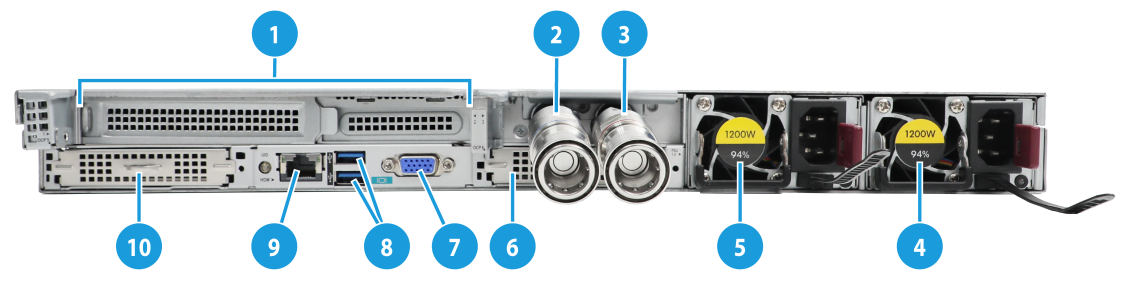

Rear panel view

Figure 7 Rear panel components

Table 7 Rear panel description

| Item | Description |
|---|---|
| 1 | PCIe riser bay 1: PCIe slots 1 and 2 |
| 2 | PCIe riser bay 2: PCIe slot 3 |
| 3 | Power supply 2 |
| 4 | Power supply 1 |
| 5 | OCP 3.0 network adapter or serial & DSD module (in slot 6) (optional) |
| 6 | VGA connector |
| 7 | Two USB 3.0 connectors |
| 8 | HDM dedicated network port (1Gbps, RJ-45, default IP address 192.168.1.2/24) |
| 9 | OCP 3.0 network adapter (in slot 5) (optional) |

For more information about the serial & DSD module, see "Serial & DSD module."
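Table 7 lists a default address of 192.168.1.2/24 for the HDM dedicated network port. A workstation that connects directly to that port usually needs an address in the same subnet. The following Python sketch uses the standard `ipaddress` module to check whether a candidate workstation address fits. It assumes that the factory default address is still in use, which you should verify on your unit. The function name is hypothetical.

```python
import ipaddress

# Default HDM dedicated network port address from Table 7 (assumed unchanged).
HDM_DEFAULT = ipaddress.ip_interface("192.168.1.2/24")


def usable_on_hdm_subnet(address: str) -> bool:
    """True if `address` can be assigned to a workstation on the HDM subnet.

    Excludes the HDM address itself plus the subnet's network and
    broadcast addresses.
    """
    ip = ipaddress.ip_address(address)
    net = HDM_DEFAULT.network  # 192.168.1.0/24
    return (
        ip in net
        and ip != HDM_DEFAULT.ip
        and ip not in (net.network_address, net.broadcast_address)
    )


print(usable_on_hdm_subnet("192.168.1.10"))  # True
print(usable_on_hdm_subnet("192.168.2.10"))  # False
```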


When a processor liquid-cooled module is installed, slot 3 on the rear panel holds the liquid inlet and outlet ports. Two types of quick couplers are available, male connectors and hose connectors, as shown in Figure 8 and Figure 9, respectively.

Figure 8 Rear panel components (Processor liquid-cooled module with male connector)

Figure 9 Rear panel components (Processor liquid-cooled module with hose connector)

Table 8 Rear panel description

| Item | Description |
|---|---|
| 1 | PCIe riser bay 1: PCIe slots 1 and 2 |
| 2 | Liquid inlet (blue) |
| 3 | Liquid outlet (red) |
| 4 | Power supply 2 |
| 5 | Power supply 1 |
| 6 | OCP 3.0 network adapter or serial & DSD module (slot 17) (optional) |
| 7 | VGA connector |
| 8 | Two USB 3.0 connectors |
| 9 | HDM dedicated network port (1Gbps, RJ-45, default IP address 192.168.1.2/24) |
| 10 | OCP 3.0 network adapter (slot 5) (optional) |

LEDs

(1) UID LED

(2) Link LED of the Ethernet port

(3) Activity LED of the Ethernet port

(4) Power supply LED for power supply 1

(5) Power supply LED for power supply 2

Table 9 LEDs on the rear panel

| LED | Status |
|---|---|
| UID LED | · Steady blue—UID LED is activated. You can activate the UID LED by using either of the following methods: pressing the UID button LED, or enabling the UID LED from HDM. · Flashing blue: 1 Hz—The firmware is being upgraded out of band from HDM or the system is being managed from HDM. Do not power off the server. 4 Hz—HDM is restarting. To restart HDM, press the UID button LED for eight seconds. · Off—UID LED is not activated. |
| Link LED of the Ethernet port | · Steady green—A link is present on the port. · Off—No link is present on the port. |
| Activity LED of the Ethernet port | · Flashing green (1 Hz)—The port is receiving or sending data. · Off—The port is not receiving or sending data. |
| Power supply LED | · Steady green—The power supply is operating correctly. · Flashing green (0.33 Hz)—The power supply is in standby state and does not output power. · Flashing green (2 Hz)—The power supply is updating its firmware. · Steady amber—Either of the following conditions exists: the power supply is faulty; or the power supply does not have power input, but another power supply has correct power input. · Flashing amber (1 Hz)—An alarm has occurred on the power supply. · Off—No power supplies have power input, which can be caused by an incorrect power cord connection or power source shutdown. |

Ports

Table 10 Ports on the rear panel

| Port | Type | Description |
|---|---|---|
| VGA connector | DB-15 | Connects a display terminal, such as a monitor or KVM device. |
| Serial port | RJ-45 | The BIOS serial port is used for the following purposes: · Logging in to the server when the remote network connection to the server has failed. · Establishing a GSM modem or encryption lock connection. The serial port is on the serial & DSD module. For more information, see "Serial & DSD module." |
| USB connector | USB 3.0 | Connects the following devices: · USB flash drive. · USB keyboard or mouse. · USB optical drive for operating system installation. |
| HDM dedicated network port | RJ-45 | Establishes a network connection to manage HDM from its Web interface. |
| Power receptacle | Standard single-phase | Connects the power supply to the power source. |

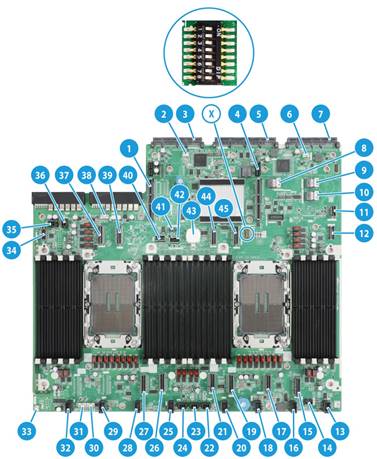

System board

System board components

Figure 11 System board components

Table 11 System board components

| No. | Description |
|---|---|
| 1 | PCIe riser connector 3 (for processor 2) (RISER3 PCIe X16) |
| 2 | Fan connector 2 for the OCP 3.0 network adapter |
| 3 | OCP 3.0 network adapter slot 2/Serial & DSD module slot (OCP2&DSD&UART CARD) |
| 4 | PCIe riser connector 1 (for processor 1) (RISER1 PCIe X16) |
| 5 | Server management module slot (BMC) |
| 6 | Fan connector 1 for the OCP 3.0 network adapter (OCP1 FAN) |
| 7 | OCP 3.0 network adapter slot 1 (OCP1) |
| 8 | SlimSAS connector 3 (x4 SATA) (SATA PORT3) |
| 9 | SlimSAS connector 2 (x4 SATA) (SATA PORT2) |
| 10 | SlimSAS connector 1 (x4 SATA or M.2 SSD) (M.2&SATA PORT1) |
| 11 | Front I/O connector (RIGHT EAR) |
| 12 | M.2 SSD AUX connector (M.2 AUX) |
| 13 | Fan module connector 8 (FAN8) |
| 14 | LCD smart management module connector (DIAG LCD) |
| 15 | MCIO connector C1-P4A (x8 PCIe5.0, for processor 1) (C1-P4A) |
| 16 | Fan module connector 7 (FAN7) |
| 17 | MCIO connector C1-P4C (x8 PCIe5.0, for processor 1) (C1-P4C) |
| 18 | Fan module connector 6 (FAN6) |
| 19 | MCIO connector C1-P3C (x8 PCIe5.0, for processor 1) (C1-P3C) |
| 20 | Fan module connector 5 (FAN5) |
| 21 | MCIO connector C1-P3A (x8 PCIe5.0, for processor 1) (C1-P3A) |
| 22 | Front drive backplane power connector 3 (PWR3) |
| 23 | Front drive backplane power connector 2 (PWR2) |
| 24 | Front drive backplane power connector 1 (PWR1) |
| 25 | Fan module connector 4 (FAN4) |
| 26 | MCIO connector C2-P4A (x8 PCIe5.0, for processor 2) (C2-P4A) |
| 27 | MCIO connector C2-P4C (x8 PCIe5.0, for processor 2) (C2-P4C) |
| 28 | Fan module connector 3 (FAN3) |
| 29 | Fan module connector 2 (FAN2) |
| 30 | Front drive backplane AUX connector 2 (AUX2) |
| 31 | Front drive backplane AUX connector 1 (AUX1) |
| 32 | Fan module connector 1 (FAN1) |
| 33 | Chassis-open alarm module connector (INTRUDER) |
| 34 | Front VGA and USB 2.0 connector (LEFT EAR) |
| 35 | Rear drive backplane power connector 4 (PWR4) |
| 36 | Rear drive backplane power connector 5 (PWR5) |
| 37 | MCIO connector C2-P2C (for processor 2) (C2-P2C) |
| 38 | Rear drive backplane AUX connector 5 (AUX5) |
| 39 | MCIO connector C2-P2A (for processor 2) (C2-P2A) |
| 40 | Riser card AUX connector 8 (AUX8) |
| 41 | NVMe VROC module connector (NVMe RAID KEY) |
| 42 | Embedded USB 2.0 connector (INTERNAL USB2.0) |
| 43 | System battery |
| 44 | MCIO connector C1-P2C (for processor 1) (C1-P2C) |
| 45 | MCIO connector C1-P2A (for processor 1) (C1-P2A) |
| X | System maintenance switch (MAINTENANCE) |

x8 PCIe5.0 description:

· PCIe5.0: Fifth-generation signal speed.

· x8: Bus bandwidth.

System maintenance switch

Figure 12 shows the system maintenance switch. Table 12 describes how to use the maintenance switch.

Figure 12 System maintenance switch

Table 12 System maintenance switch description

| Item | Description | Remarks |
|---|---|---|
| 1 | · Off (default)—HDM login requires the username and password of a valid HDM user account. · On—HDM login requires the default username and password. | For security purposes, turn off the switch after you complete tasks with the default username and password as a best practice. |
| 5 | · Off (default)—Normal server startup. · On—Restores the default BIOS settings. | To restore the default BIOS settings, turn on and then turn off the switch. The server starts up with the default BIOS settings at the next startup. The server cannot start up when the switch is turned on. To avoid service data loss, stop running services and power off the server before turning on the switch. |
| 6 | · Off (default)—Normal server startup. · On—Clears all passwords from the BIOS at server startup. | If this switch is on, the server clears all the passwords at each startup. Make sure you turn off the switch before the next server startup if you do not need to clear all the passwords. |
| 2, 3, 4, 7, and 8 | Reserved for future use. | N/A |
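The switch positions in Table 12 can be captured as a small checklist helper. It takes the set of positions a technician has turned on and returns the matching reminders from Table 12. The function and data names in this Python sketch are hypothetical. It only restates the table and does not read the switch from hardware.

```python
# Switch position -> effect when turned on (restated from Table 12).
SWITCH_EFFECTS = {
    1: "HDM login requires the default username and password",
    5: "default BIOS settings are restored, and the server cannot start while it is on",
    6: "all BIOS passwords are cleared at every startup",
}
RESERVED = {2, 3, 4, 7, 8}  # reserved for future use


def review_switch(on_positions: set[int]) -> list[str]:
    """Return one reminder per switch position that is turned on."""
    notes = []
    for pos in sorted(on_positions):
        if pos in RESERVED:
            notes.append(f"Position {pos} is reserved for future use.")
        elif pos in SWITCH_EFFECTS:
            notes.append(f"Position {pos} is on: {SWITCH_EFFECTS[pos]}. "
                         "Turn it off when the task is complete.")
        else:
            raise ValueError(f"no switch position {pos}")
    return notes


for line in review_switch({1, 6}):
    print(line)
```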

DIMM slots

The system board provides eight DIMM channels per processor, 16 channels in total, as shown in Figure 13. Each channel contains two DIMM slots.

Figure 13 System board DIMM slot layout

Appendix B Component specifications

For components compatible with the server and detailed component information, use the component compatibility lookup tool at http://www.h3c.com/en/home/qr/default.htm?id=66.

About component model names

The model name of a hardware option in this document might differ slightly from its model name label.

A model name label might add a prefix or suffix to the hardware-coded model name for purposes such as identifying the matching server brand or applicable region. For example, the DDR5-4800-32G-1Rx4 memory model represents memory module labels including UN-DDR5-4800-32G-1Rx4-R, UN-DDR5-4800-32G-1Rx4-F, and UN-DDR5-4800-32G-1Rx4-S, which have different prefixes and suffixes.

DIMMs

The server provides eight DIMM channels per processor and each channel has two DIMM slots. If the server has one processor, the total number of DIMM slots is 16. If the server has two processors, the total number of DIMM slots is 32. For the physical layout of DIMM slots, see "DIMM slots."
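The slot counts above follow from channels × slots per channel × processors. The following Python sketch restates that arithmetic and adds a capacity total for identical DIMMs. The function names are hypothetical. The sketch does not apply DIMM population rules, such as which slots to fill first or mixing limits, because this section does not describe them.

```python
CHANNELS_PER_PROCESSOR = 8   # DIMM channels per processor
SLOTS_PER_CHANNEL = 2        # DIMM slots per channel


def dimm_slots(processors: int) -> int:
    """Total DIMM slots available for the installed processor count."""
    if processors not in (1, 2):
        raise ValueError("the server holds one or two processors")
    return processors * CHANNELS_PER_PROCESSOR * SLOTS_PER_CHANNEL


def installed_capacity_gb(dimm_count: int, dimm_size_gb: int, processors: int) -> int:
    """Raw capacity of identical DIMMs after checking the slot count.

    Population rules (slot order, mixing limits) are not checked.
    """
    if not 0 <= dimm_count <= dimm_slots(processors):
        raise ValueError("more DIMMs than available slots")
    return dimm_count * dimm_size_gb


print(dimm_slots(1), dimm_slots(2))      # 16 32
print(installed_capacity_gb(32, 64, 2))  # 2048
```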

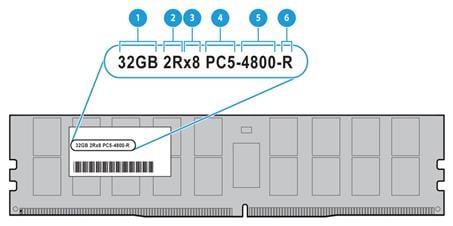

DRAM DIMM rank classification label

A DIMM rank is a set of memory chips that the system accesses while writing or reading from the memory. On a multi-rank DIMM, only one rank is accessible at a time.

To determine the rank classification of a DIMM, use the label attached to the DIMM, as shown in Figure 14. The rank classification labels of DDR DIMMs have similar meanings, and this section uses the label of a DDR5 DIMM as an example.

Figure 14 DDR DIMM rank classification label

Table 13 DIMM rank classification label description

| Callout | Description | Remarks |
|---|---|---|
| 1 | Capacity | Options include: · 8GB. · 16GB. · 32GB. · 64GB. |
| 2 | Number of ranks | Options include: · 1R—One rank (single-rank). · 2R—Two ranks (dual-rank). A 2R DIMM is equivalent to two 1R DIMMs. · 4R—Four ranks (quad-rank). A 4R DIMM is equivalent to two 2R DIMMs. · 8R—Eight ranks (8-rank). An 8R DIMM is equivalent to two 4R DIMMs. |
| 3 | Data width | Options include: · ×4—4 bits. · ×8—8 bits. |
| 4 | DIMM generation | DDR5 |
| 5 | Data rate | 4800 indicates 4800 MT/s. |
| 6 | DIMM type | Options include: · L—LRDIMM. · R—RDIMM. |
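Several of the fields in Table 13 also appear in the memory model name format shown in "About component model names," for example DDR5-4800-32G-1Rx4. The following Python sketch assumes that layout and extracts the generation, data rate, capacity, rank count, and data width. It ignores brand or region prefixes and suffixes such as UN- and -R, which the document says identify the server brand or region rather than the DIMM type. The parser and class names are hypothetical, and names that do not match the assumed pattern are rejected.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class DimmModel:
    generation: str        # for example, "DDR5"
    data_rate_mts: int     # for example, 4800 (MT/s)
    capacity_gb: int       # for example, 32
    ranks: int             # 1R -> 1, 2R -> 2, ...
    data_width_bits: int   # x4 -> 4, x8 -> 8


# Assumed layout: <generation>-<rate>-<capacity>G-<ranks>Rx<width>
_MODEL = re.compile(r"(DDR\d)-(\d+)-(\d+)G-(\d+)Rx(\d+)")


def parse_dimm_model(name: str) -> DimmModel:
    """Parse a hardware-coded model name, skipping any prefix or suffix."""
    m = _MODEL.search(name)
    if m is None:
        raise ValueError(f"unrecognized DIMM model name: {name!r}")
    gen, rate, cap, ranks, width = m.groups()
    return DimmModel(gen, int(rate), int(cap), int(ranks), int(width))


print(parse_dimm_model("UN-DDR5-4800-32G-1Rx4-R"))
```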

HDDs and SSDs

Drive numbering

The server provides different drive numbering schemes for different drive configurations at the server front and rear, as shown in Figure 15 through Figure 19.

Figure 15 Drive numbering for front 10SFF drive configurations

Figure 16 Drive numbering for front 8SFF drive configurations

Figure 17 Drive numbering for front 4LFF drive configurations

Figure 18 Drive numbering for front 32E1.S drive configurations

Figure 19 Drive numbering for rear 2SFF drive configurations

Drive LEDs

The server supports SAS, SATA, and NVMe drives, of which SAS and SATA drives support hot swapping and NVMe drives support hot insertion and managed hot removal. You can use the LEDs on a drive to identify its status after it is connected to a storage controller.

For more information about OSs that support hot insertion and managed hot removal of NVMe drives, use the component compatibility lookup tool at http://www.h3c.com/en/home/qr/default.htm?id=66.

Figure 20 shows the location of the LEDs on a drive.

(1) Fault/UID LED

(2) Present/Active LED

Figure 21 E1.S drive LEDs

(1) Fault/UID LED

(2) Present/Active LED

To identify the status of a SAS or SATA drive, use Table 14. To identify the status of an NVMe drive, use Table 15.

Table 14 SAS/SATA drive LED description

| Fault/UID LED status | Present/Active LED status | Description |
|---|---|---|
| Flashing amber (0.5 Hz) | Steady green/Flashing green (4.0 Hz) | A drive failure is predicted. As a best practice, replace the drive before it fails. |
| Steady amber | Steady green/Flashing green (4.0 Hz) | The drive is faulty. Replace the drive immediately. |
| Steady blue | Steady green/Flashing green (4.0 Hz) | The drive is operating correctly and is selected by the RAID controller. |
| Off | Flashing green (4.0 Hz) | The drive is performing a RAID migration or rebuilding, or the system is reading data from or writing data to the drive. |
| Off | Steady green | The drive is present, but no data is being read from or written to the drive. |
| Off | Off | The drive is not securely installed. |

Table 15 NVMe drive LED description

| Fault/UID LED status | Present/Active LED status | Description |
|---|---|---|
| Flashing amber (4 Hz) | Off | The drive is being hot inserted. |
| Steady amber | Steady green/Flashing green (4.0 Hz) | The drive is faulty. Replace the drive immediately. |
| Steady blue | Steady green/Flashing green (4.0 Hz) | The drive is operating correctly and is selected by the RAID controller. |
| Off | Flashing green (4.0 Hz) | The drive is performing a RAID migration or rebuilding, or the system is reading data from or writing data to the drive. |
| Off | Steady green | The drive is present, but no data is being read from or written to the drive. |
| Off | Off | The drive is not securely installed. |
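Table 14 and Table 15 share most rows and differ in one row each. The following Python sketch encodes both tables as lookup rules, so that a script or checklist could translate an observed LED combination into its meaning. The normalized state strings, such as "flashing green 4hz", and all function names are hypothetical labels chosen for this illustration. The sketch returns None for combinations that the tables do not list.

```python
from typing import Optional

# Hypothetical normalized LED state names used in this sketch:
#   Fault/UID LED:      "off", "steady amber", "steady blue",
#                       "flashing amber 0.5hz", "flashing amber 4hz"
#   Present/Active LED: "off", "steady green", "flashing green 4hz"
PRESENT_LIT = {"steady green", "flashing green 4hz"}  # "Steady green/Flashing green (4.0 Hz)"

COMMON_RULES = [
    ("steady amber", PRESENT_LIT, "The drive is faulty. Replace the drive immediately."),
    ("steady blue", PRESENT_LIT,
     "The drive is operating correctly and is selected by the RAID controller."),
    ("off", {"flashing green 4hz"},
     "RAID migration or rebuild in progress, or data is being read or written."),
    ("off", {"steady green"}, "The drive is present but idle."),
    ("off", {"off"}, "The drive is not securely installed."),
]
SAS_SATA_RULES = [  # row found only in Table 14
    ("flashing amber 0.5hz", PRESENT_LIT,
     "A drive failure is predicted. Replace the drive before it fails."),
]
NVME_RULES = [  # row found only in Table 15
    ("flashing amber 4hz", {"off"}, "The drive is being hot inserted."),
]


def decode_drive_leds(drive_type: str, fault_uid: str, present_active: str) -> Optional[str]:
    """Return the table meaning of an LED combination, or None if it is unlisted."""
    kind = drive_type.lower()
    if kind in ("sas", "sata"):
        rules = COMMON_RULES + SAS_SATA_RULES
    elif kind == "nvme":
        rules = COMMON_RULES + NVME_RULES
    else:
        raise ValueError(f"unsupported drive type: {drive_type!r}")
    for fault_state, active_states, meaning in rules:
        if fault_uid == fault_state and present_active in active_states:
            return meaning
    return None


print(decode_drive_leds("NVMe", "flashing amber 4hz", "off"))
```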

Drive configurations

The server supports multiple drive configurations. For more information about drive configurations and their required storage controller and riser cards, see H3C UniServer R4700 G6 Server Drive Configurations and Cabling Guide.

Drive backplanes

The server supports the following types of drive backplanes:

· SAS/SATA drive backplanes—Support only SAS/SATA drives.

· NVMe drive backplanes—Support only NVMe drives.

· UniBay drive backplanes—Support both SAS/SATA and NVMe drives. You must connect both SAS/SATA and NVMe data cables. The number of supported drives varies by drive cabling.

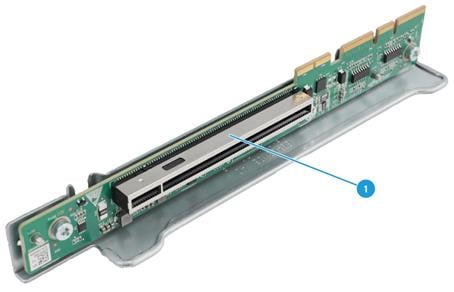

Front 4LFF SAS/SATA drive backplane

The PCA-BP-4LFF-1U-G6 4LFF SAS/SATA drive backplane can be installed at the server front to support four 3.5-inch SAS/SATA drives.

Figure 22 4LFF SAS/SATA drive backplane

(1) AUX connector (AUX 1)

(2) Power connector (PWR 1)

(3) x4 Mini-SAS-HD connector (SAS PORT 1)

Front 8SFF UniBay drive backplane

The PCA-BP-8UniBay-1U-G6 8SFF UniBay drive backplane can be installed at the server front to support eight 2.5-inch SAS/SATA/NVMe drives.

Figure 23 8SFF UniBay drive backplane

(1) AUX connector (AUX1)

(2) MCIO connector A1/A2 (PCIe5.0 x8) (NVMe A1/A2)

(3) Power connector (PWR1)

(4) MCIO connector A3/A4 (PCIe5.0 x8) (NVMe A3/A4)

(5) SAS/SATA connector (SAS PORT)

(6) MCIO connector B1/B2 (PCIe5.0 x8) (NVMe B1/B2)

(7) MCIO connector B3/B4 (PCIe5.0 x8) (NVMe B3/B4)

PCIe5.0 x8 description:

· PCIe5.0: Fifth-generation signal speed.

· x8: Bus bandwidth.

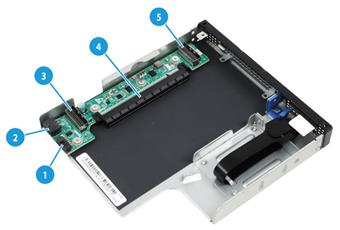

Front 2SFF UniBay drive backplane

The HDDCage-2UniBay-1U-G6 UniBay drive backplane can be installed at the server front to support two 2.5-inch SAS/SATA/NVMe drives.

Figure 24 2SFF UniBay drive backplane

(1) SlimSAS connector A1/A2 (PCIe4.0 x8) (NVME-A1/A2)

(2) x4 Mini-SAS-HD connector (SAS PORT)

(3) AUX connector (AUX)

(4) Power connector (PWR)

PCIe4.0 x8 description:

· PCIe4.0: Fourth-generation signal speed.

· x8: Bus bandwidth.

Rear 2SFF UniBay drive backplane

The HDDCage-2UniBay-R-1U-G6 drive backplane can be installed at the server rear to support two 2.5-inch SAS/SATA/NVMe drives.

Figure 25 2SFF UniBay drive backplane

(1) SlimSAS connector A1/A2 (PCIe4.0 x8) (NVME-A1/A2)

(2) x4 Mini-SAS-HD connector (SAS PORT)

(3) AUX connector (AUX)

(4) Power connector (PWR)

PCIe4.0 x8 description:

· PCIe4.0: Fourth-generation signal speed.

· x8: Bus bandwidth.

Riser cards

To expand the server with PCIe modules, install riser cards on the PCIe riser connectors.

Riser card guidelines

Each PCIe slot in a riser card can supply a maximum of 75 W of power to the PCIe module. You must connect a separate power cord to the PCIe module if it requires more than 75 W of power.

If a processor is faulty or absent, the PCIe slots connected to it are unavailable.

The slot number of a PCIe slot varies by the PCIe riser connector that holds the riser card. For example, slot 1/4 represents PCIe slot 1 if the riser card is installed on connector 1 and represents PCIe slot 4 if the riser card is installed on connector 2. For information about PCIe riser connector locations, see "Rear panel view."
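The two guidelines above, the 75 W per-slot budget and the rule that slots tied to a missing or faulty processor are unavailable, can be checked with a short planning script. This Python sketch assumes the riser layout described in the riser card sections of this document: RC-2FHFL-1U-SW-G6 on riser connector 1 (slots 1 and 2, processor 1), RC-1HHHL-R3-1U-SW-G6 on riser connector 3 (slot 3, processor 2), and RC-1HHHL-R4-1U-X16-G5 on front riser connector 4 (slot 4, processor 1). The slot power budget for slot 4 is listed as N/A, so the sketch returns None for that slot instead of guessing. All names are hypothetical.

```python
from typing import Optional

# Slot number -> (processor that owns the slot, max slot power in W, or None).
# Assumed riser layout (see lead-in); slot 4 power is listed as N/A.
PCIE_SLOTS = {
    1: (1, 75),    # RC-2FHFL-1U-SW-G6, riser connector 1
    2: (1, 75),    # RC-2FHFL-1U-SW-G6, riser connector 1
    3: (2, 75),    # RC-1HHHL-R3-1U-SW-G6, riser connector 3
    4: (1, None),  # RC-1HHHL-R4-1U-X16-G5, front riser connector 4
}


def available_slots(processors_present: set[int]) -> list[int]:
    """Slots usable when only the given processors are present and healthy."""
    return sorted(slot for slot, (cpu, _) in PCIE_SLOTS.items()
                  if cpu in processors_present)


def needs_separate_power(slot: int, module_watts: float) -> Optional[bool]:
    """True if the module exceeds the slot budget, None if the budget is unlisted."""
    _, slot_max_w = PCIE_SLOTS[slot]
    if slot_max_w is None:
        return None
    return module_watts > slot_max_w


print(available_slots({1}))          # [1, 2, 4]
print(needs_separate_power(3, 120))  # True
```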

RC-1HHHL-R3-1U-SW-G6

| Item | Specifications |
|---|---|
| PCIe riser connector | Connector 3 |
| PCIe slots | Slot 3: PCIe5.0 ×16 (16, 8, 4, 2, 1) for processor 2. NOTE: The numbers in parentheses represent supported link widths. |
| Form factors of PCIe modules | HHHL |
| Maximum power supplied per PCIe slot | 75 W |

Figure 26 RC-1HHHL-R3-1U-SW-G6

(1) PCIe slot 3

PCIe5.0 x16 (16,8,4,2,1) description:

· PCIe5.0: Fifth-generation signal speed.

· x16: Connector bandwidth.

· (16,8,4,2,1): Compatible bus bandwidth, including x16, x8, x4, x2, and x1.

RC-1HHHL-R4-1U-X16-G5

| Item | Specifications |
|---|---|
| PCIe riser connector | Connector 4 at the front |
| PCIe slots | Slot 4: PCIe4.0 ×16 (8, 4, 2, 1) for processor 1. NOTE: The numbers in parentheses represent supported link widths. |
| SlimSAS connectors | · SlimSAS port 1 (x8 SlimSAS port, connected to MCIO connector C1-P3A on the system board) for processor 1 · SlimSAS port 2 (x8 SlimSAS port, connected to MCIO connector C1-P3C on the system board) for processor 1. Together, the two ports provide an x16 PCIe link for slot 4. |
| Form factors of PCIe modules | HHHL |
| Maximum power supplied per PCIe slot | N/A |

Figure 27 RC-1HHHL-R4-1U-X16-G5 riser card

(1) GPU module power connector

(2) AUX connector

(3) SlimSAS connector 1

(4) PCIe slot 4

(5) SlimSAS connector 2

PCIe4.0 x16 (16,8,4,2,1) description:

· PCIe4.0: Fourth-generation signal speed.

· x16: Connector bandwidth.

· (16,8,4,2,1): Compatible bus bandwidth, including x16, x8, x4, x2, and x1.

RC-2FHFL-1U-SW-G6

| Item | Specifications |
|---|---|
| PCIe riser connector | Connector 1 |
| PCIe slots | · Slot 1: PCIe5.0 ×16 (16, 8, 4, 2, 1) for processor 1 · Slot 2: PCIe5.0 ×16 (16, 8, 4, 2, 1) for processor 1. NOTE: The numbers in parentheses represent supported link widths. |
| Form factors of PCIe modules | · FHFL for slot 1 · LP for slot 2 |
| Maximum power supplied per PCIe slot | 75 W |

Figure 28 RC-2FHFL-1U-SW-G6

(1) PCIe slot 1

(2) PCIe slot 2

PCIe5.0 x16 (16,8,4,2,1) description:

· PCIe5.0: Fifth-generation signal speed.

· x16: Connector bandwidth.

· (16,8,4,2,1): Compatible bus bandwidth, including x16, x8, x4, x2, and x1.

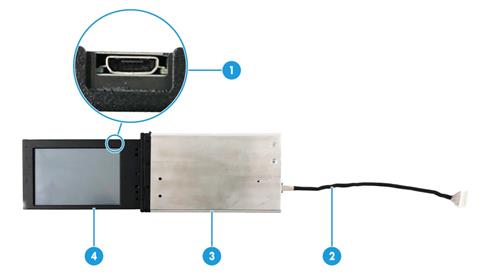

LCD smart management module

An LCD smart management module displays basic server information, operating status, and fault information, and provides diagnostics and troubleshooting capabilities. You can locate and troubleshoot component failures by using the LCD module in conjunction with the event logs generated in HDM.

Figure 29 LCD smart management module

Table 16 LCD smart management module description

| No. | Item | Description |
|---|---|---|
| 1 | Mini-USB connector | Used for upgrading the firmware of the LCD module. |
| 2 | LCD module cable | Connects the LCD module to the system board of the server. For information about the LCD smart management module connector on the system board, see "System board." |
| 3 | LCD module shell | Protects and secures the LCD screen. |
| 4 | LCD screen | Displays basic server information, operating status, and fault information. |

Fan modules

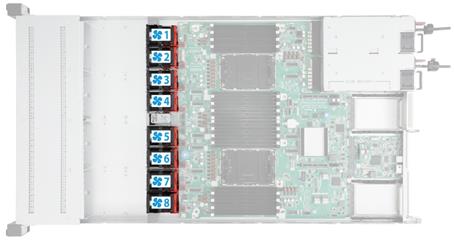

The server supports eight hot-swappable fan modules with N+1 fan module redundancy. Figure 30 shows the layout of the fan modules in the chassis.

The server adjusts the fan rotation speed based on the server temperature to balance cooling performance and noise.

PCIe modules

Typically, the PCIe modules are available in the following standard form factors:

· LP—Low profile.

· FHHL—Full height and half length.

· FHFL—Full height and full length.

· HHHL—Half height and half length.

· HHFL—Half height and full length.

The following PCIe modules require PCIe I/O resources: storage controllers, FC HBAs, and GPU modules. Make sure the total number of such PCIe modules installed does not exceed 11.
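A configuration checklist could encode this limit as follows. This is a minimal sketch. The category labels (`storage_controller`, `fc_hba`, `gpu`) and the function name are hypothetical. Only the three module types and the limit of 11 come from this guide.

```python
from typing import Iterable, Tuple

# Module categories that this guide says consume PCIe I/O resources.
# The labels are hypothetical; map your own inventory data to them first.
IO_RESOURCE_TYPES = frozenset({"storage_controller", "fc_hba", "gpu"})
MAX_IO_RESOURCE_MODULES = 11


def check_io_resource_limit(installed: Iterable[str]) -> Tuple[bool, int]:
    """Return (within_limit, count) for a list of installed module categories."""
    count = sum(1 for kind in installed if kind in IO_RESOURCE_TYPES)
    return count <= MAX_IO_RESOURCE_MODULES, count
```

Modules in other categories, such as network adapters, are ignored by the count.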

Storage controllers

The server supports the following types of storage controllers:

· Embedded VROC controller—Embedded in the server and does not require installation.

· Standard storage controller—Comes in a standard PCIe form factor and typically requires a riser card for installation.

For some storage controllers, you can order a power fail safeguard module to prevent data loss from power outages. This module provides a flash card and a supercapacitor. When a system power failure occurs, the supercapacitor provides power for a minimum of 20 seconds. During this interval, the storage controller can transfer data from DDR memory to the flash card, where the data remains indefinitely or until the controller retrieves the data. If the storage controller contains a built-in flash card, you can order only a supercapacitor.

Embedded VROC controller

| Item | Specifications |
|---|---|
| Type | Embedded in the PCH on the system board |
| Number of internal ports | 12 internal SAS ports (compatible with SATA) |
| Connectors | · One onboard ×8 SlimSAS connector · Four onboard ×4 SlimSAS connectors |
| Drive interface | 6 Gbps SATA 3.0; supports drive hot swapping |
| PCIe interface | PCIe3.0 ×4 |
| RAID levels | 0, 1, 5, 10 |
| Built-in cache memory | N/A |
| Built-in flash | N/A |
| Power fail safeguard module | Not supported |
| Firmware upgrade | Upgraded with the BIOS |

Standard storage controllers

For more information, use the component compatibility lookup tool at http://www.h3c.com/en/home/qr/default.htm?id=66.

NVMe VROC modules

| Model | RAID levels | Compatible NVMe SSDs |
|---|---|---|
| NVMe-VROC-Key-S | 0, 1, 10 | All NVMe drives |
| NVMe-VROC-Key-P | 0, 1, 5, 10 | All NVMe drives |
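To relate the RAID levels in the embedded VROC and NVMe VROC tables to usable space, the following sketch checks a requested level against those tables and then applies the textbook capacity formula for identical drives. The controller label `embedded-sata-vroc`, the function names, and the minimum drive counts (for example, exactly two drives for RAID 1) are assumptions. Verify the actual limits in the VROC documentation.

```python
from typing import Dict, FrozenSet

# RAID levels transcribed from the embedded VROC and NVMe VROC tables.
# "embedded-sata-vroc" is a hypothetical label for the embedded controller.
SUPPORTED_RAID_LEVELS: Dict[str, FrozenSet[int]] = {
    "embedded-sata-vroc": frozenset({0, 1, 5, 10}),
    "NVMe-VROC-Key-S": frozenset({0, 1, 10}),
    "NVMe-VROC-Key-P": frozenset({0, 1, 5, 10}),
}


def usable_capacity_tb(level: int, drives: int, drive_tb: float) -> float:
    """Textbook usable capacity for identical drives (drive-count rules are assumptions)."""
    if level == 0 and drives >= 2:
        return drives * drive_tb            # striping, no redundancy
    if level == 1 and drives == 2:
        return drive_tb                     # two-drive mirror
    if level == 5 and drives >= 3:
        return (drives - 1) * drive_tb      # one drive's worth of parity
    if level == 10 and drives >= 4 and drives % 2 == 0:
        return (drives // 2) * drive_tb     # striped mirrors
    raise ValueError(f"RAID {level} is not valid with {drives} drives")


def plan_array(controller: str, level: int, drives: int, drive_tb: float) -> float:
    """Reject RAID levels the controller or key does not list, then size the array."""
    if level not in SUPPORTED_RAID_LEVELS[controller]:
        raise ValueError(f"{controller} does not support RAID {level}")
    return usable_capacity_tb(level, drives, drive_tb)
```

For example, `plan_array("NVMe-VROC-Key-S", 5, 4, 3.84)` raises an error because NVMe-VROC-Key-S does not list RAID 5, while the same request with NVMe-VROC-Key-P returns about 11.52 TB.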

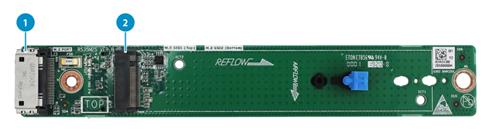

SATA M.2 expander module

Figure 31 Front view of the front SATA M.2 expander module

(1) Data cable connector

(2) M.2 SSD card slot 1

Figure 32 Rear view of the front SATA M.2 expander module

(1) M.2 SSD card slot 2

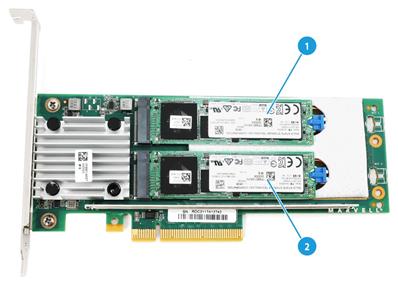

Rear NVMe M.2 expander module

Figure 33 Rear NVMe M.2 expander module

(1) NVMe M.2 SSD card slot 1

(2) NVMe M.2 SSD card slot 2

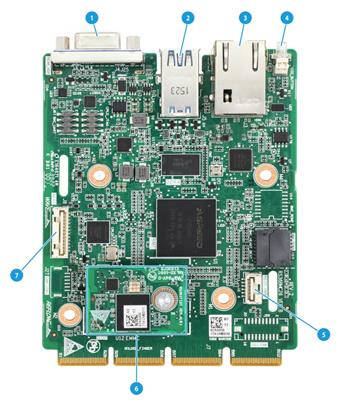

Server management module

The server management module is installed on the system board to provide I/O connectors and HDM out-of-band features for the server.

Figure 34 Server management module

(1) VGA connector

(2) Two USB 3.0 connectors

(3) HDM dedicated network interface

(4) UID LED

(5) Serial port

(6) iFIST module (optional)

(7) NCSI connector

Serial & DSD module

The serial & DSD module is installed in the slot on the server rear panel. The module provides two SD card slots, which form a RAID 1 array by default. For more information, see "Rear panel view."

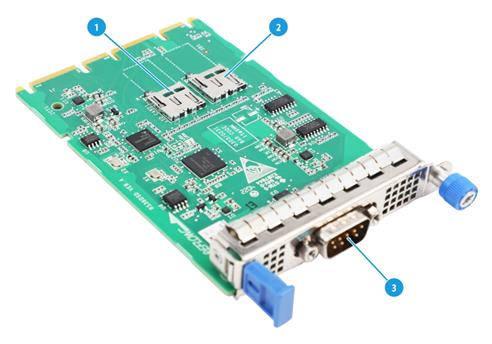

Figure 35 Serial & DSD module

Table 17 Component description

| Item | Description |
|---|---|
| 1 | SD card slot 1 |
| 2 | SD card slot 2 |
| 3 | Serial port |

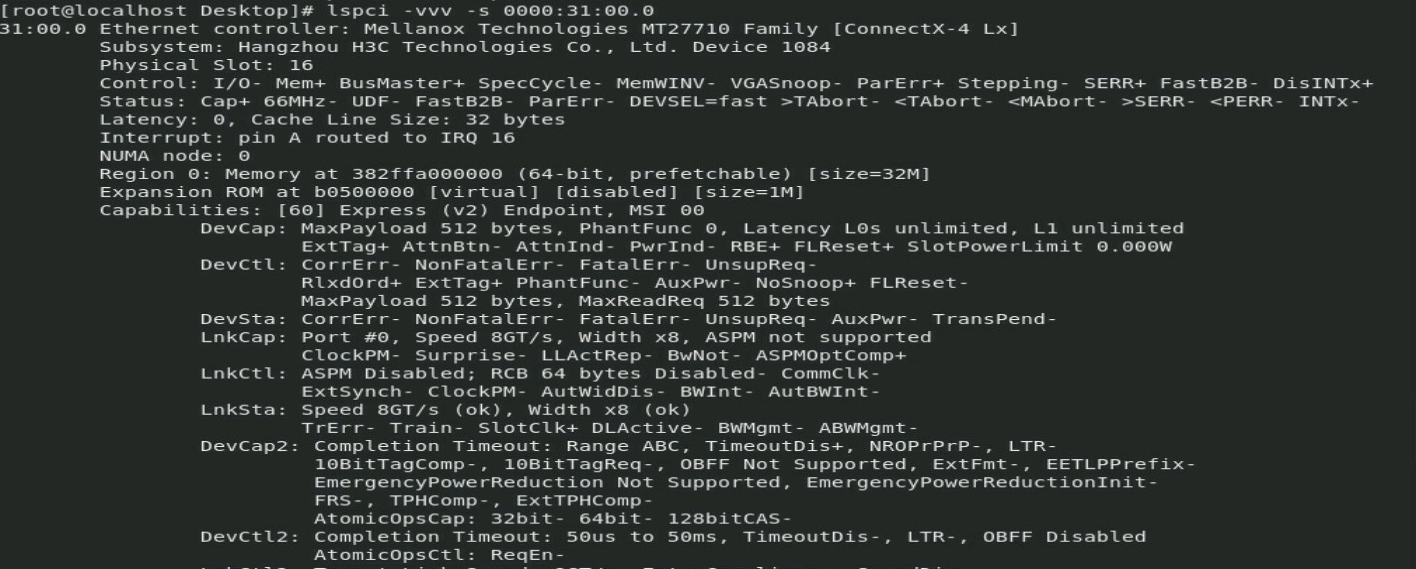

B/D/F information

You can obtain B/D/F information by using one of the following methods:

· BIOS log—Search the dumpiio keyword in the BIOS log.

· UEFI shell—Execute the pci command. For information about how to execute the command, execute the help pci command.

· Operating system—The obtaining method varies by OS.

  ○ For Linux, execute the lspci command. If the lspci command is not available by default, execute the yum command to install the pciutils package.

  ○ For Windows, install the pciutils package, and then execute the lspci command.

  ○ For VMware, execute the lspci command.
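A B/D/F address as printed by lspci has the form [domain:]bus:device.function in hexadecimal, for example 0000:31:00.0. The following sketch, which is illustrative and not an H3C utility, splits such an address into its fields. The names `BDF`, `parse_bdf`, and `bdf_from_lspci_line` are hypothetical.

```python
import re
from typing import NamedTuple


class BDF(NamedTuple):
    domain: int
    bus: int
    device: int
    function: int


# [domain:]bus:device.function in hexadecimal, for example 0000:31:00.0.
# The domain is optional because plain "lspci" omits it ("lspci -D" prints it).
_BDF_RE = re.compile(
    r"^(?:(?P<domain>[0-9a-fA-F]{4}):)?"
    r"(?P<bus>[0-9a-fA-F]{2}):(?P<device>[0-9a-fA-F]{2})\.(?P<function>[0-7])$"
)


def parse_bdf(text: str) -> BDF:
    m = _BDF_RE.match(text.strip())
    if m is None:
        raise ValueError(f"not a B/D/F address: {text!r}")
    device = int(m["device"], 16)
    if device > 0x1F:  # the device number is a 5-bit field
        raise ValueError(f"device number out of range: {text!r}")
    return BDF(int(m["domain"] or "0", 16), int(m["bus"], 16), device, int(m["function"]))


def bdf_from_lspci_line(line: str) -> BDF:
    """Parse the address at the start of one line of lspci output."""
    return parse_bdf(line.split(maxsplit=1)[0])
```

Because the fields are hexadecimal, bus 31 in 0000:31:00.0 is bus number 49 in decimal.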

Appendix C Managed removal of OCP network adapters

Before you begin

Before you perform a managed removal of an OCP network adapter, perform the following tasks:

· Use the OS compatibility query tool at http://www.h3c.com/en/home/qr/default.htm?id=66 to identify the operating systems that support managed removal of OCP network adapters.

· Make sure the BIOS version is 6.00.15 or higher, the HDM2 version is 1.13 or higher, and the CPLD version is V001 or higher.

Performing a hot removal

This section uses an OCP network adapter in slot 16 as an example.

To perform a hot removal:

1. Access the operating system.

2. Execute the dmidecode -t 9 command to search for the bus address of the OCP network adapter. As shown in Figure 36, the bus address of the OCP network adapter in slot 16 is 0000:31:00.0.

Figure 36 Searching for the bus address of an OCP network adapter by slot number

3. Execute the echo 0 > /sys/bus/pci/slots/slot number/power command to power off the slot, where slot number represents the number of the slot where the OCP network adapter resides.

Figure 37 Executing the echo 0 > /sys/bus/pci/slots/slot number/power command

4. Identify whether the OCP network adapter has been disconnected:

  ○ Observe the OCP network adapter LED. If the LED is off, the OCP network adapter has been disconnected.

  ○ Execute the lspci -vvv -s 0000:31:00.0 command. If no output is displayed, the OCP network adapter has been disconnected.

Figure 38 Identifying OCP network adapter status

5. Replace the OCP network adapter.

6. Identify whether the OCP network adapter has been connected:

  ○ Make sure the network port was connected and the link was normal before the hot swap operation, and then observe the OCP network adapter LED. If the LED is on, the OCP network adapter has been connected.

  ○ Execute the lspci -vvv -s 0000:31:00.0 command. If output is displayed, the OCP network adapter has been connected.

Figure 39 Identifying OCP network adapter status

7. Check whether any exception has occurred. If an exception occurred, contact H3C Support.
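On Linux, the lookup, power-off, and presence checks in this procedure can be scripted. The following Python sketch is a minimal illustration under several assumptions. It matches the slot by the ID field of dmidecode -t 9 output, because slot designations vary by platform. It assumes that the sysfs slot directory is named after that slot number. It also treats the disappearance of /sys/bus/pci/devices/<bus address> as equivalent to empty lspci -s output. All function names are hypothetical.

```python
import re
from pathlib import Path
from typing import Optional

_ID_RE = re.compile(r"^[ \t]*ID:[ \t]*(\S+)", re.MULTILINE)
_ADDR_RE = re.compile(r"^[ \t]*Bus Address:[ \t]*(\S+)", re.MULTILINE)


def bus_address_for_slot(dmidecode_t9: str, slot_id: str) -> Optional[str]:
    """Find the Bus Address of the System Slot record whose ID field matches slot_id."""
    for record in dmidecode_t9.split("\n\n"):  # dmidecode separates records with blank lines
        slot = _ID_RE.search(record)
        addr = _ADDR_RE.search(record)
        if slot and addr and slot.group(1) == slot_id:
            return addr.group(1)
    return None


def power_off_slot(slot: str, slots_dir: Path = Path("/sys/bus/pci/slots")) -> None:
    """Same effect as: echo 0 > /sys/bus/pci/slots/<slot>/power (requires root)."""
    (slots_dir / slot / "power").write_text("0\n")


def device_present(bus_address: str, devices_dir: Path = Path("/sys/bus/pci/devices")) -> bool:
    """True while the kernel still lists the PCI function at bus_address."""
    return (devices_dir / bus_address).exists()
```

For example, you might obtain the input with `subprocess.run(["dmidecode", "-t", "9"], capture_output=True, text=True).stdout`, call `bus_address_for_slot(output, "16")`, power off the slot as root, and poll `device_present()` until it returns False before replacing the adapter.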

Appendix D Environment requirements

About environment requirements

The operating temperature requirements for the server vary depending on the server model and hardware configuration. When the general and component-based requirements conflict, use the component-based requirement.

Be aware that the actual maximum operating temperature of the server might be lower than what is stated because of poor site cooling performance. In a real data center, the server cooling performance might decrease because of adverse external factors, including poor cabinet cooling performance, high power density inside the cabinet, or insufficient spacing between devices.

General environment requirements

| Item | Specifications |
|---|---|
| Operating temperature | Minimum: 5°C (41°F); Maximum: 40°C (104°F). The maximum temperature varies by hardware option presence. For more information, see "Operating temperature requirements." |
| Storage temperature | –40°C to +70°C (–40°F to +158°F) |
| Operating humidity | 8% to 90%, noncondensing |
| Storage humidity | 5% to 95%, noncondensing |
| Operating altitude | –60 m to +3000 m (–196.85 ft to +9842.52 ft). Above 900 m (2952.76 ft), the allowed maximum temperature decreases by 0.33°C (0.59°F) for every 100 m (328.08 ft) increase in altitude. |
| Storage altitude | –60 m to +5000 m (–196.85 ft to +16404.20 ft) |

Operating temperature requirements

General guidelines

When a fan fails, the maximum server operating temperature decreases by 5°C (9°F). The performance of GPUs and of processors with a TDP of more than 165 W might decrease.

If a mid drive or mid GPU adapter is installed, you cannot install processors with a TDP of more than 165 W.
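The altitude derating in the general environment table and the fan-failure guideline can be combined into a rough estimate of the allowed maximum operating temperature. This sketch assumes that the derating is linear (0.33°C per 100 m above 900 m, not stepwise) and that the two reductions add up. This guide states neither assumption, and the function name is hypothetical.

```python
DERATE_START_M = 900.0        # derating starts above this altitude
DERATE_C_PER_100M = 0.33      # °C lost per 100 m above DERATE_START_M
FAN_FAILURE_PENALTY_C = 5.0   # general guideline for a failed fan


def max_operating_temp_c(altitude_m: float, base_max_c: float = 40.0,
                         fan_failed: bool = False) -> float:
    """Rough allowed maximum operating temperature in °C.

    base_max_c is 40 for the general limit, or the 30/35/40 value of a
    configuration table (applying the derating to those values is an assumption).
    """
    if not -60.0 <= altitude_m <= 3000.0:
        raise ValueError("altitude outside the -60 m to 3000 m operating range")
    # Linear interpretation of the derating rule (assumption, not stepwise).
    limit = base_max_c - DERATE_C_PER_100M * max(0.0, altitude_m - DERATE_START_M) / 100.0
    if fan_failed:
        limit -= FAN_FAILURE_PENALTY_C  # assumed to add to the altitude derating
    return limit
```

For example, at 3000 m with all fans working, the sketch gives 40 − 6.93 = 33.07°C.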

10SFF and 8SFF drive configuration

TOSHIBA SAS SFF HDDs are not used.

The 8SFF drives are installed in slots 0 to 7. For more information about drive slots, see "Drive numbering."

Table 18 Operating temperature requirements

| Maximum server operating temperature | Hardware options |
|---|---|
| 30°C (86°F) | With 2SFF drives installed at the server rear, processors with a TDP of more than 185 W are not supported. |
| 35°C (95°F) | · With A2 GPU modules or PCIe SSDs installed at the server rear, processors with a TDP of more than 300 W are not supported. · With 2SFF drives installed at the server rear, processors with a TDP of more than 135 W are not supported. · Delta CRPS 1600 W Platinum AC power supplies are not supported. |
| 40°C (104°F) | The following hardware options are not supported: · Rear GPU modules. · Rear drives. · Processors with a TDP of more than 185 W. · Delta CRPS 1600 W Platinum AC power supplies. · Rear supercapacitor. · OCP network adapters with an interface rate higher than 25 GE. |

4LFF, 10SFF, and 8SFF drive configuration

TOSHIBA SAS SFF HDDs are used in this configuration.

The 8SFF drives are installed in slots 0 to 7. For more information about drive slots, see "Drive numbering."

Table 19 Operating temperature requirements

| Maximum server operating temperature | Hardware options |
|---|---|
| 30°C (86°F) | · With NVMe drives or HDDs installed at the server rear, processors with a TDP of more than 135 W are not supported. · With SSDs installed at the server rear, processors with a TDP of more than 165 W are not supported. · With A2 GPU modules installed at the server rear, processors with a TDP of more than 220 W are not supported. |
| 35°C (95°F) | · With A2 GPU modules installed at the server rear, processors with a TDP of more than 165 W are not supported. · With PCIe SSDs installed at the server rear, processors with a TDP of more than 200 W are not supported. · With PCIe M.2 drives installed at the server rear, processors with a TDP of more than 250 W are not supported. · With an MCX 623436-100G OCP network adapter installed in OCP1 and processors with a TDP of more than 250 W installed, an OCP air baffle with a fan is required. · Delta CRPS 1600 W Platinum AC power supplies are not supported. · Rear 2.5-inch HDDs or NVMe drives are not supported. · With SATA/SAS SSDs installed at the server rear, processors with a TDP of more than 135 W are not supported. |
| 40°C (104°F) | The following hardware options are not supported: · Rear GPU modules. · Rear drives. · Processors with a TDP of more than 150 W. · Delta CRPS 1600 W Platinum AC power supplies. · Rear supercapacitor. · OCP network adapters with an interface rate higher than 25 GE. |

32E1.S drive configuration

Table 20 Operating temperature requirements

| Maximum server operating temperature | Hardware options |
|---|---|
| 30°C (86°F) | The following hardware options are not supported: · Processors with a TDP of more than 270 W. · GPU modules. · Rear drives. |
| 35°C (95°F) | The following hardware options are not supported: · Processors with a TDP of more than 230 W. · GPU modules. · Rear drives. · Delta CRPS 1600 W Platinum AC power supplies. |
| 40°C (104°F) | The following hardware options are not supported: · Rear GPU modules. · Rear drives. · Processors with a TDP of more than 150 W. · Delta CRPS 1600 W Platinum AC power supplies. · OCP network adapters with an interface rate higher than 25 GE. |
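Table 20 can be encoded as a configuration check. The following sketch is illustrative only. The `Config` fields summarize a hypothetical configuration, and the function returns the Table 20 restrictions that the configuration breaks for a target maximum operating temperature of 30°C, 35°C, or 40°C. It deliberately ignores the general guidelines (fan failure, mid modules) and altitude derating.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Config:
    """Hypothetical summary of a 32E1.S configuration."""
    max_cpu_tdp_w: int
    has_gpu: bool              # any GPU module, front or rear
    has_rear_gpu: bool
    has_rear_drives: bool
    has_delta_1600w_psu: bool  # Delta CRPS 1600 W Platinum AC power supply
    max_ocp_rate_gbe: int      # fastest OCP network adapter interface rate


def table20_violations(cfg: Config, max_temp_c: int) -> List[str]:
    """List the Table 20 restrictions that cfg breaks for a 30, 35, or 40 °C target."""
    if max_temp_c not in (30, 35, 40):
        raise ValueError("Table 20 lists only 30, 35, and 40 °C")
    tdp_limit = {30: 270, 35: 230, 40: 150}[max_temp_c]
    problems = []
    if cfg.max_cpu_tdp_w > tdp_limit:
        problems.append(f"processor TDP above {tdp_limit} W")
    if cfg.has_rear_drives:
        problems.append("rear drives")
    if max_temp_c in (30, 35) and cfg.has_gpu:
        problems.append("GPU modules")
    if max_temp_c == 40 and cfg.has_rear_gpu:
        problems.append("rear GPU modules")
    if max_temp_c in (35, 40) and cfg.has_delta_1600w_psu:
        problems.append("Delta CRPS 1600 W Platinum AC power supply")
    if max_temp_c == 40 and cfg.max_ocp_rate_gbe > 25:
        problems.append("OCP network adapter faster than 25 GE")
    return problems
```

An empty list means that, as far as Table 20 alone is concerned, the configuration is permitted at that temperature.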

Appendix E Product recycling

New H3C Technologies Co., Ltd. provides product recycling services to ensure that end-of-life hardware is recycled. New H3C contracts vendors that hold product recycling qualifications to process the recycled hardware in an environmentally responsible way.

For product recycling services, contact New H3C through any of the following:

· Tel: 400-810-0504

· E-mail: [email protected]

· Website: http://www.h3c.com

Appendix F Glossary

| Term | Description |
|---|---|
| B | |
| BIOS | Basic input/output system is non-volatile firmware pre-installed in a ROM chip on a server's management module. The BIOS stores basic input/output, power-on self-test, and auto startup programs to provide the most basic hardware initialization, setup, and control functionality. |
| C | |
| CPLD | Complex programmable logic device is an integrated circuit used to build reconfigurable digital circuits. |
| G | |
| GPU module | Graphics processing unit module converts digital signals to analog signals for output to a display device and assists processors with image processing to improve overall system performance. |
| H | |
| HDM | Hardware Device Management is the server management control unit with which administrators can configure server settings, view component information, monitor server health status, and remotely manage the server. |
| Hot swapping | A module that supports hot swapping (a hot-swappable module) can be installed or removed while the server is running without affecting the system operation. |
| K | |
| KVM | KVM is a management method that allows remote users to use their local video display, keyboard, and mouse to monitor and control the server. |
| N | |
| NVMe VROC module | A module that works with Intel VMD to provide RAID capability for the server to virtualize storage resources of NVMe drives. |
| R | |
| RAID | Redundant array of independent disks (RAID) is a data storage virtualization technology that combines multiple physical hard drives into a single logical unit to improve storage performance and data security. |
| Redundancy | A mechanism that ensures high availability and business continuity by providing backup modules. In redundancy mode, a backup or standby module takes over when the primary module fails. |
| S | |
| Security bezel | A locking bezel mounted to the front of a server to prevent unauthorized access to modules such as hard drives. |
| U | |
| U | A unit of measure defined as 44.45 mm (1.75 in) in IEC 60297-1. It is used as a measurement of the overall height of racks, as well as equipment mounted in the racks. |
| UniBay drive backplane | A UniBay drive backplane supports both SAS/SATA and NVMe drives. |
| UniSystem | UniSystem is provided by H3C for easy and extensible server management. It guides users to configure a server quickly and provides an API that allows users to develop their own management tools. |
| V | |
| VMD | VMD provides hot removal, management, and fault-tolerance functions for NVMe drives to increase availability, reliability, and serviceability. |

Appendix G Acronyms

| Acronym | Full name |
|---|---|
| B | |
| BIOS | Basic Input/Output System |
| C | |
| CMA | Cable Management Arm |
| CPLD | Complex Programmable Logic Device |
| D | |
| DCPMM | Data Center Persistent Memory Module |
| DDR | Double Data Rate |
| DIMM | Dual In-Line Memory Module |
| DRAM | Dynamic Random Access Memory |
| DVD | Digital Versatile Disc |
| G | |
| GPU | Graphics Processing Unit |
| H | |
| HBA | Host Bus Adapter |
| HDD | Hard Disk Drive |
| HDM | Hardware Device Management |
| I | |
| IDC | Internet Data Center |
| iFIST | integrated Fast Intelligent Scalable Toolkit |
| K | |
| KVM | Keyboard, Video, Mouse |
| L | |
| LRDIMM | Load Reduced Dual Inline Memory Module |
| N | |
| NCSI | Network Controller Sideband Interface |
| NVMe | Non-Volatile Memory Express |
| P | |
| PCIe | Peripheral Component Interconnect Express |
| POST | Power-On Self-Test |
| R | |
| RDIMM | Registered Dual Inline Memory Module |
| S | |
| SAS | Serial Attached Small Computer System Interface |
| SATA | Serial ATA |
| SD | Secure Digital |
| SDS | Secure Diagnosis System |
| SFF | Small Form Factor |
| sLOM | Small form factor Local Area Network on Motherboard |
| SSD | Solid State Drive |
| T | |
| TCM | Trusted Cryptography Module |
| TDP | Thermal Design Power |
| TPM | Trusted Platform Module |
| U | |
| UID | Unit Identification |
| UPI | Ultra Path Interconnect |
| UPS | Uninterruptible Power Supply |
| USB | Universal Serial Bus |
| V | |
| VROC | Virtual RAID on CPU |
| VMD | Volume Management Device |