- Table of Contents

- Related Documents

-

| Title | Size | Download |

|---|---|---|

| 01-Text | 1.95 MB |

Contents

About the SeerEngine-DC controller

Hardware requirements for deployment on physical servers

Hardware requirements for deployment on VMs

Deployment procedure at a glance

Installing the Unified Platform

(Optional.) Configuring HugePages

Deploying the Unified Platform

Registering and installing licenses

Registering and installing licenses for the Unified Platform

Registering and installing licenses for the controller

Installing the activation file on the license server

Backing up and restoring the controller configuration

Upgrading the controller and DTN

Uninstalling SeerEngine-DC and DTN

Uninstalling the DTN component only

RDRS deployment procedure at a glance

Deploying the primary and backup sites

Configuring the RDRS settings for the controllers at the primary and backup sites

Deploying the third-party arbitration service

Deploying the third-party arbitration service

Uninstalling the third-party arbitration service

Upgrading the third-party arbitration service

Upgrading SeerEngine-DC to support RDRS

Cluster deployment over a Layer 3 network

Deploying the SeerEngine-DC controller at Layer 3

About cluster 2+1+1 deployment

About the SeerEngine-DC controller

SeerEngine-DC is a data center controller. Similar to a network operating system, the SeerEngine-DC controller drives SDN application development and allows operation of various SDN applications. It can control various resources on the network and provide interfaces for applications to enable specific network forwarding.

The controller has the following features:

· It supports OpenFlow 1.3 and provides built-in services and a device driver framework.

· It is a distributed platform with high availability and scalability.

· It provides extensible REST APIs and GUI.

· It can operate in standalone or cluster mode.

Preparing for installation

Server requirements

Hardware requirements for deployment on physical servers

|

CAUTION: · To avoid unrecoverable system failures caused by unexpected power failures, use a storage controller that supports power fail protection on the servers and make sure a supercapacitor is in place. · The DTN component does not support RDRS. · In the RDRS mode, the primary and backup sites each require three servers. |

Controller cluster + DTN physical host deployment (x86, Intel64/AMD64 architecture)

Table 1 Hardware requirements for controller cluster + DTN physical host deployment (x86, Intel64/AMD64 architecture)

|

Item |

Requirements |

|

Controller node (standard configuration), 3 |

|

|

CPU |

16 cores, 2.0 GHz or above |

|

Memory |

128 GB or above |

|

Drive |

Select either of the following drive options: · Drive configuration option 1: ¡ System drive: 4 × 960 GB SSDs or 8 × 480 GB SSDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 480 GB SSDs, RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1 GB cache, installed with a power fail safeguard module. · Drive configuration option 2: ¡ System drive: 4 × 1200 GB or 8 × 600 GB 7.2K RPM or above HDDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 600 GB 7.2K RPM or above HDDs configured in RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1 GB cache, installed with a power fail safeguard module. |

|

Network interface |

· Non-bonding mode: 1 × 10 Gbps Ethernet port. To deploy vBGP, 3+3 RDRS system, or DTN, add a network interface separately for each of them. · Bonding mode: 2 × 10 Gbps Linux bonding interfaces. To deploy vBGP, 3+3 RDRS system, or DTN, add two network interfaces separately for each of them. |

|

Managed devices and servers |

Device: A maximum of 100. Server: A maximum of 2000. |

|

Controller node (high-end configuration), 3 |

|

|

CPU |

20 cores, 2.2 GHz or above |

|

Memory |

256 GB or above |

|

Drive |

Select either of the following drive options: · Drive configuration option 1: ¡ System drive: 4 × 960 GB SSDs or 8 × 480 GB SSDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 480 GB SSDs, RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. · Drive configuration option 2: ¡ System drive: 4 × 1200 GB or 8 × 600 GB 7.2K RPM or above HDDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 600 GB 7.2K RPM or above HDDs configured in RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. |

|

Network interface |

· Non-bonding mode: 1 × 10 Gbps Ethernet port. To deploy vBGP, 3+3 RDRS system, or DTN, add a network interface separately for each of them. · Bonding mode: 2 × 10 Gbps Linux bonding interfaces. To deploy vBGP, 3+3 RDRS system, or DTN, add two network interfaces separately for each of them. |

|

Managed devices and servers |

Device: A maximum of 300. Server: A maximum of 6000. |

|

DTN physical host (high-end configuration), number of simulated devices/80 |

|

|

CPU |

x86, Intel64/AMD64 20 cores 2.2 GHz or above Support for VX-T/VX-D |

|

Memory |

256 GB or above |

|

Drive |

Select either of the following drive options. · 2 × 960 GB SSDs + RAID 1 or 4 × 480 GB SSDs + RAID 10 (600 GB or higher after RAID setup). · 2 × 600 GB 7.2K RPM or above HDDs + RAID 1 (600 GB or higher after RAID setup). |

|

Network interface |

A minimum of 3, 1 to 10 Gbps. |

|

Simulated devices |

A maximum of 80 per server. |

|

DTN physical host (standard configuration), number of simulated devices/30 |

|

|

CPU |

x86, Intel64/AMD64 16 cores 2.0 GHz or above Support for VX-T/VX-D |

|

Memory |

128 GB or above |

|

Drive |

Select either of the following drive options: · 2 × 960 GB SSDs + RAID 1 or 4 × 480 GB SSDs + RAID 10 (600 GB or higher after RAID setup). · 2 × 600 GB 7.2K RPM or above HDDs + RAID 1 (600 GB or higher after RAID setup). |

|

Network interface |

A minimum of 3, 1 to 10 Gbps. |

|

Simulated devices |

A maximum of 30 per server. |

|

Remarks · To use the simulation feature, add 100GB or above memory for the server where the DTN component is deployed. · Deploy a DTN physical host only if you are to use the simulation feature. · To deploy the general_PLAT_kernel-region application package, one more CPU core and 6GB more memory are required. · To deploy the general_PLAT_network application package, three more CPU cores and 16GB more memory are required. |

|

Table 2 Hardware requirements for a controller in standalone mode + DTN physical host deployment (x86-Intel64/AMD64)

|

Item |

Requirements |

|

CPU |

16 cores, 2.0 GHz or above |

|

Memory |

128 GB or above |

|

Drive |

Select either of the following drive options: · Drive configuration option 1: ¡ System drive: 4 × 960 GB SSDs or 8 × 480 GB SSDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 480 GB SSDs, RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. · Drive configuration option 2: ¡ System drive: 4 × 1200 GB or 8 × 600 GB 7.2K RPM or above HDDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 600 GB 7.2K RPM or above HDDs configured in RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. |

|

Network interface |

· Non-bonding mode: 1 × 10 Gbps Ethernet port. To deploy the DTN component, add a network interface. · Bonding mode: 2 × 10 Gbps Linux bonding interfaces. To deploy the DTN component, add two network interfaces. |

|

Managed devices and servers |

· Device: A maximum of 36. · Server: A maximum of 600. |

|

DTN physical host (high-end configuration), number of simulated devices/80 |

|

|

CPU |

x86, Intel64/AMD64 20 cores 2.2 GHz or above Support for VX-T/VX-D |

|

Memory |

256 GB or above |

|

Drive |

Select either of the following drive options. · 2 × 960 GB SSDs + RAID 1 or 4 × 480 GB SSDs + RAID 10 (600 GB or higher after RAID setup). · 2 × 600 GB 7.2K RPM or above HDDs + RAID 1 (600 GB or higher after RAID setup). |

|

Network interface |

A minimum of 3, 1 to 10 Gbps. |

|

Simulated devices |

A maximum of 80 per sever. |

|

DTN physical host (standard configuration), number of simulated devices/30 |

|

|

CPU |

x86, Intel64/AMD64 16 cores 2.0 GHz or above Support for VX-T/VX-D |

|

Memory |

128 GB or above |

|

Drive |

Select either of the following drive options. · 2 × 960 GB SSDs + RAID 1 or 4 × 480 GB SSDs + RAID 10 (600 GB or higher after RAID setup). · 2 × 600 GB 7.2K RPM or above HDDs + RAID 1 (600 GB or higher after RAID setup). |

|

Network interface |

A minimum of 3, 1 to 10 Gbps. |

|

Simulated devices |

A maximum of 30 per sever. |

|

Remarks · To use the simulation feature, add 100GB or above memory for the server where the DTN component is deployed. · Deploy a DTN physical host only if you are to use the simulation feature. · To deploy the general_PLAT_kernel-region application package, one more CPU core and 6GB more memory are required. · To deploy the general_PLAT_network application package, three more CPU cores and 16GB more memory are required. |

|

|

CAUTION: · If a failure occurs on a controller in standalone mode, the services might be interrupted. As a best practice, configure a remote backup server for a controller in standalone mode. · A controller in standalone mode cannot be upgraded to operate in cluster mode, and does not support hybrid overlay, multi-fabric, security groups, QoS, or interoperation with CloudOS. |

Controller cluster deployment + DTN physical host deployment (Haiguang x86-64 server)

Table 3 Hardware requirements for controller cluster deployment (Haiguang x86-64 server)

|

Item |

Requirements |

|

Controller node (standard configuration), 3 |

|

|

CPU |

2 × Hygon C86 7265s 24 cores 2.2 GHz |

|

Memory |

128 GB or above |

|

Drive |

Select either of the following drive options: · Drive configuration option 1: ¡ System drive: 4 × 960 GB SSDs or 8 × 480 GB SSDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 480 GB SSDs configured in RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. · Drive configuration option 2: ¡ System drive: 4 × 1200 GB or 8 × 600 GB 7.2K RPM or above HDDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 600 GB 7.2K RPM or above HDDs, RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. |

|

Network interface |

· Non-bonding mode: 1 × 10 Gbps Ethernet port. To deploy vBGP or 3+3 RDRS system, add a network interface separately for each of them. · Bonding mode: 2 × 10 Gbps Linux bonding interfaces. To deploy vBGP or 3+3 RDRS system, add two network interfaces separately for each of them. |

|

Managed devices and servers |

· Device: A maximum of 100. · Server: A maximum of 2000. |

|

Controller node (high-end configuration), 3 |

|

|

CPU |

2 × Hygon C86 7280s 32 cores 2.0 GHz |

|

Memory size |

256 GB or above |

|

Drive |

Select either of the following drive options: · Drive configuration option 1: ¡ System drive: 4 × 960 GB SSDs or 8 × 480 GB SSDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 480 GB SSDs, RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. · Drive configuration option 2: ¡ System drive: 4 × 1200 GB or 8 × 600 GB 7.2K RPM or above HDDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 600 GB 7.2K RPM or above HDDs, RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. |

|

Network interface |

· Non-bonding mode: 1 × 10 Gbps Ethernet port. To deploy vBGP or 3+3 RDRS system, add a network interface separately for each of them. · Bonding mode: 2 × 10 Gbps Linux bonding interfaces. To deploy vBGP or 3+3 RDRS system, add two network interfaces separately for each of them. |

|

Managed devices and servers |

· Device: A maximum of 300. · Server: A maximum of 6000. |

|

DTN physical host (high-end configuration), number of simulated devices/80 |

|

|

CPU |

x86, Intel64/AMD64 2*Hygon C86 7280 32 cores 2.0 GHz or above Support for VX-T/VX-D |

|

Memory |

256 GB or above |

|

Drive |

Select either of the following drive options. · 2 × 960 GB SSDs + RAID 1 or 4 × 480 GB SSDs + RAID 10 (600 GB or higher after RAID setup). · 2 × 600 GB 7.2K RPM or above HDDs + RAID 1 (600 GB or higher after RAID setup). |

|

Network interface |

A minimum of 3, 1 to 10 Gbps. |

|

Simulated devices |

A maximum of 80 per sever. |

|

DTN physical host (standard configuration), number of simulated devices/30 |

|

|

CPU |

x86, Intel64/AMD64 2*Hygon C86 7265 24 cores 2.2 GHz or above Support for VX-T/VX-D |

|

Memory |

128 GB or above |

|

Drive |

Select either of the following drive options. · 2 × 960 GB SSDs + RAID 1 or 4 × 480 GB SSDs + RAID 10 (600 GB or higher after RAID setup). · 2 × 600 GB 7.2K RPM or above HDDs + RAID 1 (600 GB or higher after RAID setup). |

|

Network interface |

A minimum of 3, 1 to 10 Gbps. |

|

Simulated devices |

A maximum of 30 per sever. |

|

Remarks · To use the simulation feature, add 100GB or above memory for the server where the DTN component is deployed. · Deploy a DTN physical host only if you are to use the simulation feature. · To deploy the general_PLAT_kernel-region application package, one more CPU core and 6GB more memory are required. · To deploy the general_PLAT_network application package, three more CPU cores and 16GB more memory are required. |

|

Controller cluster deployment (Kunpeng ARM server)

Kunpeng servers do not support DTN deployment.

Table 4 Hardware requirements for controller cluster deployment (Kunpeng ARM server)

|

Item |

Requirements |

||

|

Controller node (standard configuration), 3 |

|||

|

CPU |

2 × Kunpeng 920s, 24 cores, 2.6 GHz |

||

|

Memory |

128 GB or above |

||

|

Drive |

Select one of the following drive options: · Drive configuration option 1: ¡ System drive: 4 × 960 GB SSDs or 8 × 480 GB SSDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 480 GB SSDs, RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. · Drive configuration option 2: ¡ System drive: 4 × 1200 GB or 8 × 600 GB 7.2K RPM or above HDDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 600 GB 7.2K RPM or above HDDs, RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. |

||

|

Network interface |

· Non-bonding mode: 1 × 10 Gbps Ethernet port. To deploy vBGP or 3+3 RDRS system, add a network interface separately for each of them. · Bonding mode: 2 × 10 Gbps Linux bonding interfaces. To deploy vBGP or 3+3 RDRS system, add two network interfaces separately for each of them. |

||

|

Managed devices and servers |

· Device: A maximum of 100. · Server: A maximum of 2000. |

||

|

Controller node (high-end configuration), 3 in total |

|||

|

CPU |

2 × Kunpeng 920s, 48 cores, 2.6 GHz |

||

|

Memory |

384 GB (12 × 32 GB) |

||

|

Drive |

Select one of the following drive options: · Drive configuration option 1: ¡ System drive: 4 × 960 GB SSDs or 8 × 480 GB SSDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 480 GB SSDs, RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. · Drive configuration option 2: ¡ System drive: 4 × 1200 GB or 8 × 600 GB 7.2K RPM or above HDDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 600 GB 7.2K RPM or above HDDs, RAID 1 (50 GB or higher after RAID setup) (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. |

||

|

Network interface |

· Non-bonding mode: 1 × 10 Gbps Ethernet port. To deploy vBGP or 3+3 RDRS system, add a network interface separately for each of them. · Bonding mode: 2 × 10 Gbps Linux bonding interfaces. To deploy vBGP or 3+3 RDRS system, add two network interfaces separately for each of them. |

||

|

Managed devices and servers |

· Device: A maximum of 300. · Server: A maximum of 6000. |

||

|

Remarks · To deploy the general_PLAT_kernel-region application package, one more CPU core and 6GB more memory are required. · To deploy the general_PLAT_network application package, three more CPU cores and 16GB more memory are required. |

|||

Controller cluster deployment (FeiTeng ARM server)

FeiTeng servers do not support DTN deployment.

Table 5 Hardware requirements for controller cluster deployment (FeiTeng ARM server)

|

Item |

Requirements |

||

|

Controller node (standard configuration), 3 |

|||

|

CPU |

2 × FeiTeng S2500, 64 cores, 2.1 GHz |

||

|

Memory |

128 GB or above |

||

|

Drive |

Select one of the following drive options: · Drive configuration option 1: ¡ System drive: 4 × 960 GB SSDs or 8 × 480 GB SSDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 480 GB SSDs, RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. · Drive configuration option 2: ¡ System drive: 4 × 1200 GB or 8 × 600 GB 7.2K RPM or above HDDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 600 GB 7.2K RPM or above HDDs, RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. |

||

|

Network interface |

· Non-bonding mode: 1 × 10 Gbps Ethernet port. To deploy vBGP or 3+3 RDRS system, add a network interface separately for each of them. · Bonding mode: 2 × 10 Gbps Linux bonding interfaces. To deploy vBGP or 3+3 RDRS system, add two network interfaces separately for each of them. |

||

|

Managed devices and servers |

· Device: A maximum of 100. · Server: A maximum of 2000. |

||

|

Controller node (high-end configuration), 3 |

|||

|

CPU |

2 × Feiteng S2500, 64 cores, 2.1 GHz |

||

|

Memory |

384 GB |

||

|

Drive |

Select one of the following drive options: · Drive configuration option 1: ¡ System drive: 4 × 960 GB SSDs or 8 × 480 GB SSDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 480 GB SSDs, RAID 1 (50 GB or higher after RAID setup). (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. · Drive configuration option 2: ¡ System drive: 4 × 1200 GB or 8 × 600 GB 7.2K RPM or above HDDs, RAID 10 (1920 GB or higher after RAID setup). ¡ etcd drive: 2 × 600 GB 7.2K RPM or above HDDs, RAID 1 (50 GB or higher after RAID setup) (Installation path: /var/lib/etcd.) ¡ Storage controller: 1GB cache, installed with a power fail safeguard module. |

||

|

Network interface |

· Non-bonding mode: 1 × 10 Gbps Ethernet port. To deploy vBGP or 3+3 RDRS system, add a network interface separately for each of them. · Bonding mode: 2 × 10 Gbps Linux bonding interfaces. To deploy vBGP or 3+3 RDRS system, add two network interfaces separately for each of them. |

||

|

Managed devices and servers |

· Device: A maximum of 300. · Server: A maximum of 6000. |

||

|

Remarks · To deploy the general_PLAT_kernel-region application package, one more CPU core and 6GB more memory are required. · To deploy the general_PLAT_network application package, three more CPU cores and 16GB more memory are required. |

|||

Hardware requirements for deployment on VMs

The controller can also be installed on a VM deployed on the following virtualization platforms, and the virtualized environment provides the CPU, memory, and disk resources required by the controller:

· VMware ESXi 6.7.0

· H3C_CAS-E0706

The number of vCPU cores required for deploying the controller on a VM is twice the number of CPU cores required for deploying the controller on a physical server if hyper-threading is enabled on the server where the virtualization platform is deployed. If hyper-threading is disabled, the required number of vCPU cores is the same as that of CPU cores, and memory and disks can also be configured as required for deployment on a physical server.

This section uses a server enabled with hyper-threading as an example to describe the requirements for deploying the controller on a VM.

|

CAUTION: · You can deploy the controller on a VM only in scenarios where the controller will not interoperate with CloudOS. · To ensure system environment stability, make sure the CPUs, memory, and disks allocated to the VM meet the recommended capacity requirements and there are physical resources with corresponding capacity. Make sure VM resources are not overcommitted, and reserve resources for the VM. · Make sure etcd has an exclusive use of a physical disk. · To deploy the controller on a VMware-managed VM, enable the network card hybrid mode and pseudo transmission on the host where the VM resides. · Do not deploy DTN on a VM. · Do not deploy vBGP on a VM. · To ensure high reliability, deploy the three VM nodes of the controller cluster on three different physical hosts. · Deploy the controllers on physical servers in medium and large-scale (leaf number greater than 30) scenarios. |

Table 6 Hardware requirements for controller cluster deployment

|

Item |

Requirements |

|

Number of controller nodes |

3 |

|

vCPU |

32 cores, 2.0 GHz |

|

Memory |

128 GB or above |

|

Drive |

· System drive: 2.4 TB · etcd drive: 50 GB |

|

Network interface |

1 × 10 Gbps Ethernet port. To deploy vBGP or 3+3 RDRS system, add a network interface separately for each of them. |

|

Managed devices and servers |

· Device: A maximum of 30. · Server: A maximum of 600. |

|

Remarks · To use the simulation feature, add 100GB or above memory for the server where the DTN component is deployed. · To deploy the general_PLAT_kernel-region application package, 2 more CPU cores and 6GB more memory are required. · To deploy the general_PLAT_network application package, 3 more CPU cores and 16GB more memory are required. |

|

Software requirements

SeerEngine-DC runs on the Unified Platform as a component. Before deploying SeerEngine-DC, first install the Unified Platform.

Client requirements

You can access the Unified Platform from a Web browser without installing any client. As a best practice, use Google Chrome 70 or a later version.

Pre-installation checklist

Table 7 Pre-installation checklist

|

Item |

Requirements |

|

|

Server |

Hardware |

· The CPUs, memory, drives, and network interfaces meet the requirements. · The server supports the Unified Platform. |

|

Software |

The system time settings are configured correctly. As a best practice, configure NTP for time synchronization and make sure the devices synchronize to the same clock source. |

|

|

Client |

You can access the Unified Platform from a Web browser without installing any client. As a best practice, use Google Chrome 70 or a later version. |

|

Deployment procedure at a glance

Use the following procedure to deploy the controller:

1. Prepare for installation.

Prepare a minimum of three physical servers. Make sure the physical servers meet the hardware and software requirements as described in "Server requirements."

2. Deploy the Unified Platform.

For the deployment procedure, see H3C Unified Platform Deployment Guide.

3. Deploy SeerEngine-DC components.

4. Deploy the DTN component.

For the deployment procedure, see H3C SeerEngine-DC Simulation Network Deployment Guide.

5. Deploy simulated services.

For the deployment procedure, see H3C SeerEngine-DC Simulation Network Environment Deployment Guide.

Installing the Unified Platform

SeerEngine-DC runs on the Unified Platform as a component. Before deploying SeerEngine-DC, first install the Unified Platform. For the detailed procedure, see H3C Unified Platform Deployment Guide.

To run SeerEngine-DC on Unified Platform, you are required to partition the drive, configure HugePages, and deploy the application packages required by SeerEngine-DC.

Partitioning the system drive

Before installing the Unified Platform, partition the system drive as described in Table 8.

Table 8 Drive partition settings (2400GB partition)

|

RAID configuration |

Partition |

Mount point |

Min. capacity |

Remarks |

|

2400 GB or higher after RAID 10 setup |

/dev/sda1 |

/boot/efi |

200 MiB |

EFI System Partition type. This partition is required only in UEFI mode. |

|

/dev/sda2 |

/boot |

1024 MiB |

N/A |

|

|

/dev/sda3 |

/ |

1000 GiB |

Expandable when the drive space is sufficient. |

|

|

/dev/sda4 |

/var/lib/docker |

500 GiB |

Expandable when the drive space is sufficient. |

|

|

/dev/sda6 |

swap |

1024 MiB |

Swap type. |

|

|

/dev/sda7 |

/var/lib/ssdata |

550 GiB |

Expandable when the drive space is sufficient. |

|

|

/dev/sda8 |

N/A |

300 GiB |

Reserved for GlusterFS. Not required when the operating system is installed. |

|

|

50 GB or higher after RAID 1 setup |

/dev/sdb |

/var/lib/etcd |

50 GiB |

Required to be mounted on a separate drive. |

Table 9 Drive partition settings (1920GB partition)

|

RAID configuration |

Partition |

Mount point |

Min. capacity |

Remarks |

|

1920 GB or higher after RAID 10 setup |

/dev/sda1 |

/boot/efi |

200 MiB |

EFI System Partition type. This partition is required only in UEFI mode. |

|

/dev/sda2 |

/boot |

1024 MiB |

N/A |

|

|

/dev/sda3 |

/ |

700 GiB |

Expandable when the drive space is sufficient. |

|

|

/dev/sda4 |

/var/lib/docker |

450 GiB |

Expandable when the drive space is sufficient. |

|

|

/dev/sda6 |

swap |

1024 MiB |

Swap type. |

|

|

/dev/sda7 |

/var/lib/ssdata |

500 GiB |

Expandable when the drive space is sufficient. |

|

|

/dev/sda8 |

N/A |

220 GiB |

Reserved for GlusterFS. Not required when the operating system is installed. |

|

|

50 GB or higher after RAID 1 setup |

/dev/sdb |

/var/lib/etcd |

50 GiB |

Required to be mounted on a separate drive. |

(Optional.) Configuring HugePages

ARM hosts do not support multi-vBGP cluster.

To use the vBGP component, you must enable and configure HugePages. To deploy multiple vBGP clusters on a server, plan the HugePages configuration in advance to ensure adequate HugePages resources.

You are not required to configure HugePages if the vBGP component is not to be deployed. If SeerAnalyzer is to be deployed, you are not allowed to configure HugePages. After you enable or disable HugePages, restart the server for the configuration to take effect. HugePages are enabled on a server by default.

H3Linux operating system

H3Linux operating system supports two huge page sizes. Table 10 describes HugePages parameter settings for different page sizes.

Table 10 HugePages parameter settings for different page sizes

|

Huge page size |

Number of huge pages |

Remarks |

|

1 G |

8 + (n-1) |

· N is the number of vBGP clusters. · A single vBGP node uses 1 GB of HugePages memory. |

|

2 M |

4096 + 400 × (n-1) |

· N is the number of vBGP clusters. · A single vBGP node uses 400 MB of HugePages memory. |

You must configure HugePages on each node, and reboot the system for the configuration to take effect.

|

IMPORTANT: To deploy the Unified Platform on an H3C CAS-managed VM, enable HugePages for the host and VM on the CAS platform and set the CPU operating mode for the VM to straight-through before issuing the HugePages configuration. |

To configure HugePages on the H3Linux operating system:

1. Execute the hugepage.sh script.

[root@node1 ~]# cd /etc

[root@node1 etc]# ./hugepage.sh

2. Set the memory size and number of pages for HugePages during the script execution process.

The default parametersr ae set as follows: default_hugepagesz=1G hugepagesz=1G hugepages=8 }

Do you want to reset these parameters? [Y/n] Y

Please enter the value of [ default_hugepagesz ],Optional units: M or G >> 2M

Please enter the value of [ hugepagesz ],Optional units: M or G >> 2M

Please enter the value of [ hugepages ], Unitless >> 4096

3. Reboot the server as promoted.

GRUB_CMDLINE_LINUX="crashkernel=auto rhgb quiet default_hugepagesz=2M hugepagesz=2M hugepages=4096 "

Legacy update grub.cfg

Generating grub configuration file ...

Found linux image: /boot/vmlinuz-3.10.0-957.27.2.el7.x86_64

Found initrd image: /boot/initramfs-3.10.0-957.27.2.el7.x86_64.img

Found linux image: /boot/vmlinuz-0-rescue-664108661c92423bb0402df71ce0e6cc

Found initrd image: /boot/initramfs-0-rescue-664108661c92423bb0402df71ce0e6cc.img

done

update grub.cfg success,reboot now...

************************************************

GRUB_TIMEOUT=5

GRUB_DISTRIBUTOR="$(sed 's, release .*$,,g' /etc/system-release)"

GRUB_DEFAULT=saved

GRUB_DISABLE_SUBMENU=true

GRUB_TERMINAL_OUTPUT="console"

GRUB_CMDLINE_LINUX="crashkernel=auto rhgb quiet default_hugepagesz=2M hugepagesz=2M hugepages=4096 "

GRUB_DISABLE_RECOVERY="true"

************************************************

Reboot to complete the configuration? [Y/n] Y

4. Verify that HugePages is configured correctly. If the configuration result is displayed as configured, the configuration is successful.

[root@node1 ~]# cat /proc/cmdline

BOOT_IMAGE=/vmlinuz-3.10.0-957.27.2.el7.x86_64 root=UUID=6b5a31a2-7e55-437c-a6f9-e3bc711d3683 ro crashkernel=auto rhgb quiet default_hugepagesz=2M hugepagesz=2M hugepages=4096

Galaxy Kylin operating system

Galaxy Kylin operating system supports one huge page size. Table 11 describes HugePages parameter settings.

Table 11 HugePages parameter settings

|

Huge page size |

Number of huge pages |

Remarks |

|

2 M |

4096 + 400 × (n-1) |

· N is the number of vBGP clusters. · A single vBGP node uses 400 MB of HugePages memory. |

1. Open the grub file.

vim /etc/default/grub

2. Edit the values of the default_hugepagesz, hugepagesz, and hugepages parameters at the line starting with GRUB_CMDLINE_LINUX.

GRUB_CMDLINE_LINUX="default_hugepagesz=2M hugepagesz=2M hugepages=4096

3. Update the configuration file.

¡ If the system boots in UEFI mode, execute the following command:

grub2-mkconfig -o /boot/efi/EFI/kylin/grub.cfg

¡ If the system boots in Legacy mode, execute the following command:

grub2-mkconfig -o /boot/grub2/grub.cfg

4. Restart the server for the configuration to take effect.

5. Execute the cat/proc/cmdline command to view the configuration result. If the result is consistent with your configuration, the configuration succeeds.

default_hugepagesz=2M hugepagesz=2M hugepages=4096

Deploying the Unified Platform

The Unified Platform can be installed on x86 or ARM servers. Select the installation packages specific to the server type and install the selected packages in sequence as described in Table 12.

For the installation procedures of the packages, see H3C Unified Platform Deployment Guide.

The following installation packages must be deployed when you deploy the Unified Platform:

· common_PLAT_GlusterFS_2.0

· general_PLAT_portal_2.0

· general_PLAT_kernel_2.0

The following installation packages are deployed automatically when you deploy SeerEngine-DC components:

· general_PLAT_kernel-base_2.0

· general_PLAT_Dashboard_2.0

· general_PLAT_widget_2.0

To use the general_PLAT_network_2.0 and syslog packages, deploy them after SeerEngine-DC components are deployed.

Table 12 Installation packages required by the controller

|

Installation package |

Description |

|

· x86: common_PLAT_GlusterFS_2.0_version.zip · ARM: common_PLAT_GlusterFS_2.0_version_arm.zip |

Provides local shared storage functionalities. |

|

· x86: general_PLAT_portal_2.0_version.zip · ARM: general_PLAT_portal_2.0_version_arm.zip |

Provides portal, unified authentication, user management, service gateway, and help center functionalities. |

|

· x86: general_PLAT_kernel_2.0_version.zip · ARM: general_PLAT_kernel_2.0_version_arm.zip |

Provides access control, resource identification, license, configuration center, resource group, and log functionalities. |

|

· x86: general_PLAT_kernel-base_2.0_version.zip · ARM: general_PLAT_kernel-base_2.0_version_arm.zip |

Provides alarm, access parameter template, monitoring template, report, email, and SMS forwarding functionalities. |

|

· x86: general_PLAT_kernel-region_2.0_version.zip · ARM: general_PLAT_kernel-region_2.0_version_arm.zip |

(Optional.) Provides the hierarchical management functionality. If you are to use hierarchical management, install the general_PLAT_network_2.0 application and use it together with the super controller component for the super controller to manage DC networks. |

|

· x86: general_PLAT_network_2.0_version.zip · ARM: general_PLAT_network_2.0_version_arm.zip |

(Optional.) Provides basic management of network resources, network performance, network topology, and iCC. Install this application if you are to check match of the software versions with the solution. |

|

· x86: common_PLAT_Dashboard_2.0_version.zip · ARM: common_PLAT_Dashboard _2.0_version_arm.zip |

Provides the dashboard framework. |

|

· x86: general_PLAT_widget_2.0_version.zip · ARM: general_PLAT_widget_2.0_version_arm.zip |

Provides dashboard widget management. |

|

· x86: Syslog-version_x86.zip · ARM: Syslog-version_arm.zip |

(Optional.) Provides syslog management (log viewing, alarm upgrade rules, log parsing) Install this application package if you are to use syslog management. |

|

|

NOTE: · To deploy the general_PLAT_kernel-region application package on a physical server, one more CPU core and 6GB more memory are required. To deploy the general_PLAT_network application package on a physical server, three more CPU cores and 16GB more memory are required. · To deploy the general_PLAT_kernel-region application package on a VM, two more vCPU cores and 6G more memory are required. To deploy the general_PLAT_network application package on a VM, six more vCPU cores and 16GB more memory are required. |

Deploying the controller

|

IMPORTANT: · The controller runs on the Unified Platform. You can deploy, upgrade, and uninstall it only on the Unified Platform. · Before deploying the controller, make sure the required applications have been deployed. · To deploy RDRS for the controller, see "RDRS" for network planning and component deployment. |

Preparing for deployment

Enabling network interfaces

If the server uses multiple network interfaces for connecting to the network, enable the network interfaces before deployment.

The procedure is the same for all network interfaces. The following procedure enables network interface ens34.

To enable a network interface:

1. Access the server that hosts the Unified Platform.

2. Access the network interface configuration file.

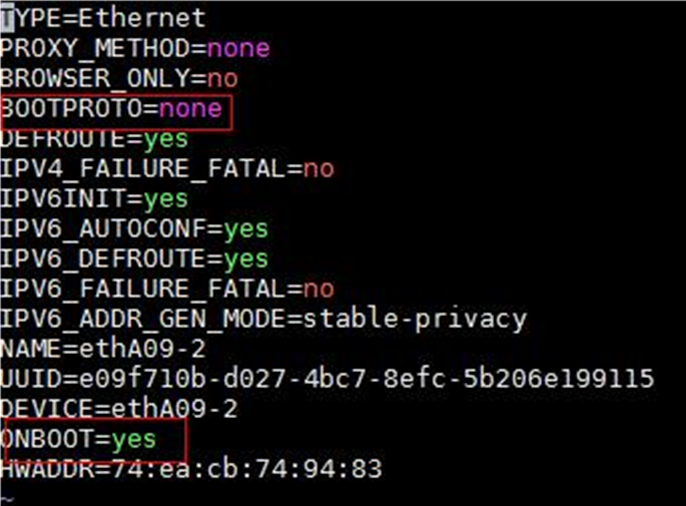

[root@node1 /]# vi /etc/sysconfig/network-scripts/ifcfg-ens34

3. Set the BOOTPROTO field to none to not specify a boot-up protocol and set the ONBOOT field to yes to activate the network interface at system startup.

Figure 1 Modifying the configuration file for a network interface

4. Execute the ifdown and ifup commands in sequence to reboot the network interface.

[root@node1 /]# ifdown ens34

[root@node1 /]# ifup ens34

5. Execute the ifconfig command to verify that the network interface is in up state.

Planning the networks

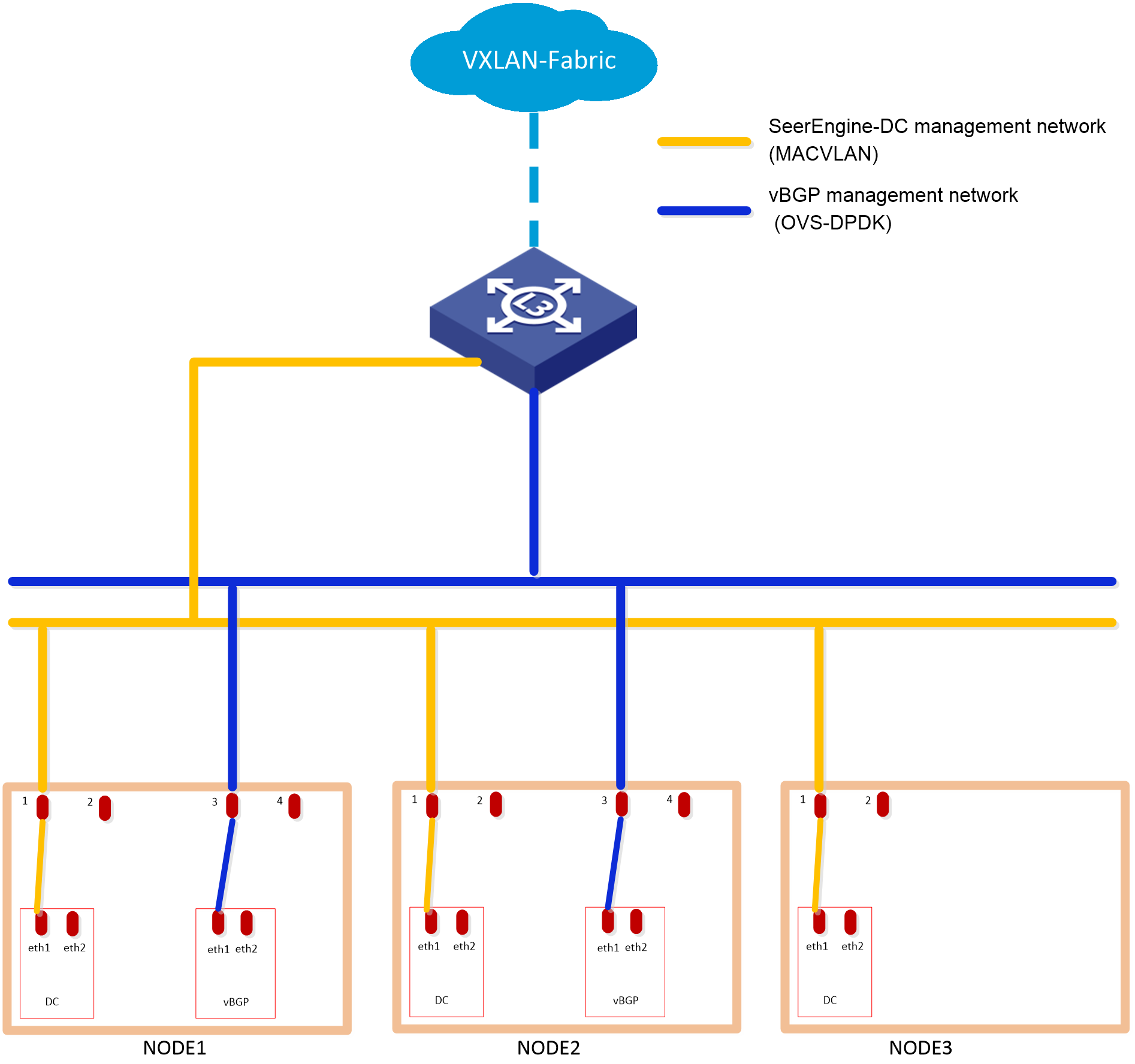

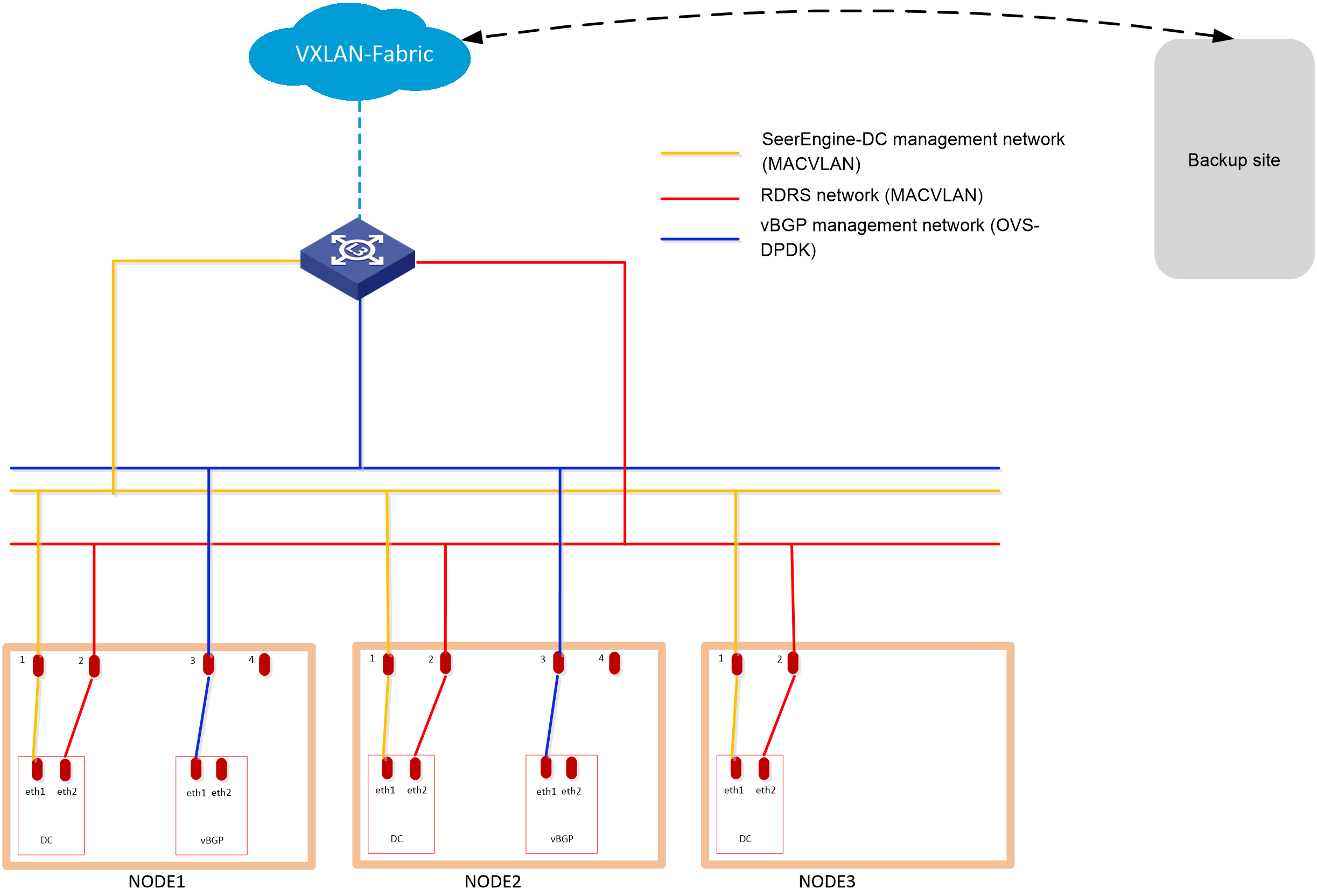

Network planning

Plan for the following three types of networks:

· Calico network

Calico is an open source networking and network security solution for containers, Vims, and native host-based workloads. The Calico network is an internal network used for container interactions. The network segment of the Calico network is the IP address pool set for containers when the cluster is deployed. The default network segment is 177.177.0.0. You do not need to configure an address pool for the Calico network when installing and deploying the controller. The Calico network and MACVLAN network can use the same network interface.

· MACVLAN network

The MACVLAN network is used as a management network.

The MACVLAN virtual network technology allows you to bind multiple IPs and MAC addresses to a physical network interface. Some applications, especially legacy applications or applications that monitor network traffic, require a direct connection to the physical network. You can use the MACVLAN network driver program to assign a MAC address to the virtual network interface of each container, making the virtual network interface seem to be a physical network interface directly connected to the physical network. The physical network interface must be able to handle "promiscuous mode", supporting bundling of multiple MAC addresses to a physical interface.

· OVS-DPDK network

The OVS-DPDK type network is used as a management network.

Open vSwitch is a multi-layer virtual switch that supports SDN control semantics through the OpenFlow protocol and its OVSDB management interface. DPDK has a set of user space libraries and enables faster development of high speed data packet networking applications. Integrating DPDK and Open vSwitch, the OVS-DPDK network architecture can accelerate OVS data stream forwarding.

The required management networks depend on the deployed components and application scenarios. Before deployment, plan the network address pools in advance.

Table 13 Network types and numbers used by components in the non-RDRS scenario

|

Component |

Network type |

Number of networks |

Remarks |

|

|

SeerEngine-DC |

MACVLAN (management network) |

1 |

N/A |

|

|

vBGP |

Management network and service network converged |

OVS-DPDK (management network) |

1*Number of vBGP clusters |

· Used for communication between the vBGP and SeerEngine-DC components and service traffic transmission. · Each vBGP cluster requires a separate OVS-DPDK management network. |

|

Management network and service network separated |

OVS-DPDK (management network) |

1*Number of vBGP clusters |

· Used for communication between the vBGP and SeerEngine-DC components. · Each vBGP cluster requires a separate OVS-DPDK management network. |

|

|

OVS-DPDK (service network) |

1*Number of vBGP clusters |

· Used for service traffic transmission. · Each vBGP cluster requires a separate OVS-DPDK service network. |

||

|

Digital Twin Network (DTN) |

MACVLAN (simulation management network) |

1 |

Used for simulation services. A separate network interface is required. |

|

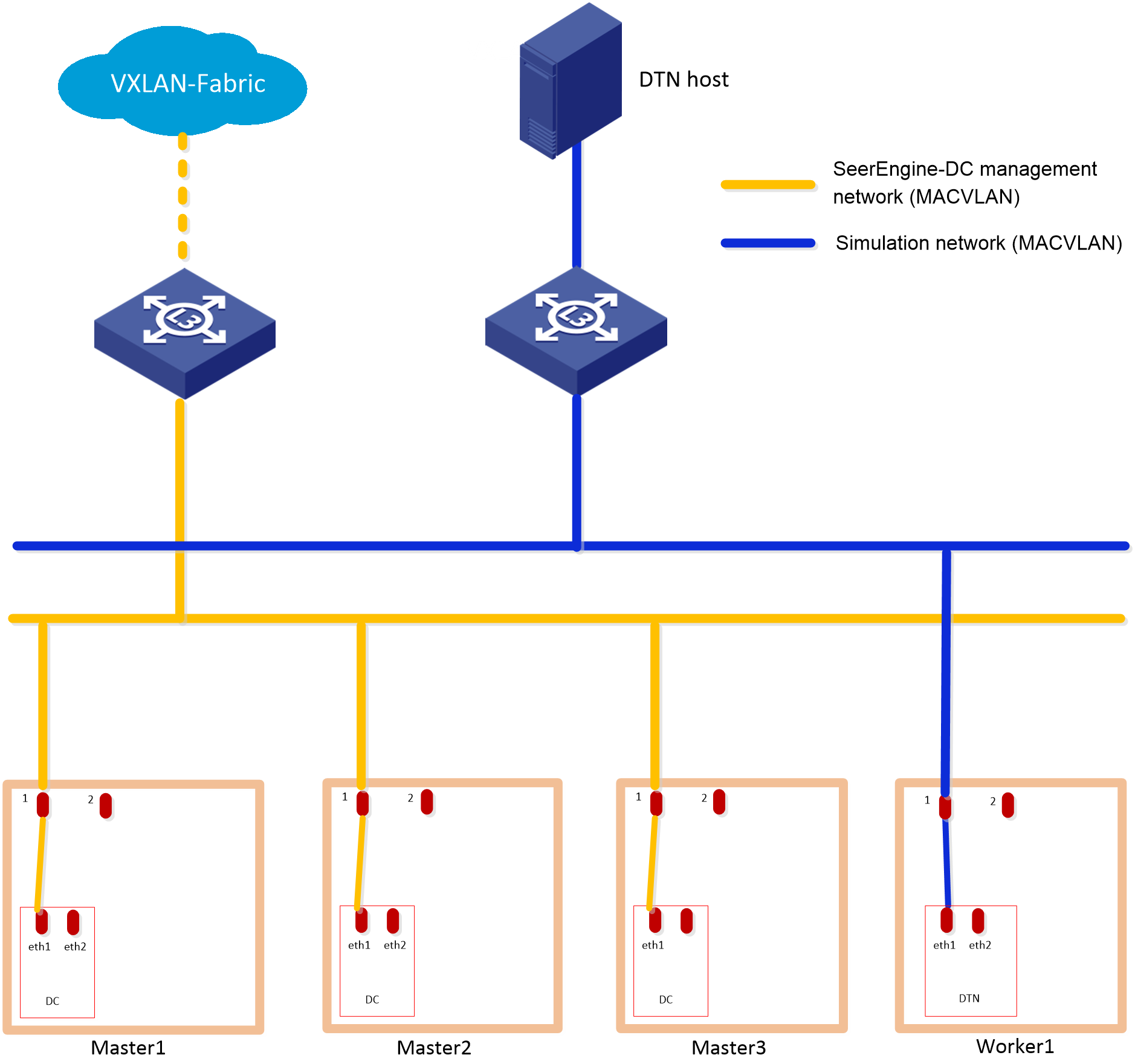

Figure 3 Cloud data center networks in the non-RDRS scenario (only DTN deployed)

|

IMPORTANT: · The MACVLAN and OVS-DPDK subnets are on different network segments. You must configure routing entries on the switches connected to the network interfaces to enable Layer 3 communication between the SeerEngine-DC management network and vBGP management network. · DTN does not support RDRS. · If the simulation management network and the controller management network are connected to the same switch, you must configure VPN instances. If the simulation management network and the controller management network are connected to different switches, make sure the switches are physically isolated. · Make sure the simulation management IP and the simulated device management IP are reachable to each other. |

IP address planning

Use Table 14 as a best practice to calculate IP addresses required for the networks.

Table 14 IP addresses required for the networks in the non-RDRS scenario

|

Component |

Network type |

Maximum team members |

Default team members |

Number of IP addresses |

Remarks |

||

|

SeerEngine-DC |

MACVLAN (management network) |

32 |

3 |

Number of team members + 1 (team IP) |

N/A |

||

|

vDHCP |

MACVLAN (management network) |

2 |

2 |

Number of vBGP clusters* team members + number of vBGP clusters (team IP) |

same MACVLAN management network. |

||

|

vBGP |

Management network and service network converged |

OVS-DPDK (management network) |

2 |

2 |

Number of team members + 1 (team IP) |

N/A |

|

|

Management network and service network separated |

OVS-DPDK (management network) |

2 |

2 |

Number of team members + 1 (team IP) |

N/A |

||

|

OVS-DPDK (service network) |

2 |

2 |

Number of team members |

N/A |

|||

|

DTN |

MACVLAN (simulation management network) |

1 |

1 |

1 (team IP) |

A separate network interface is required. For management IP address assignment for DTN hosts, see H3C SeerEngine-DC Simulation Network Deployment Guide. |

||

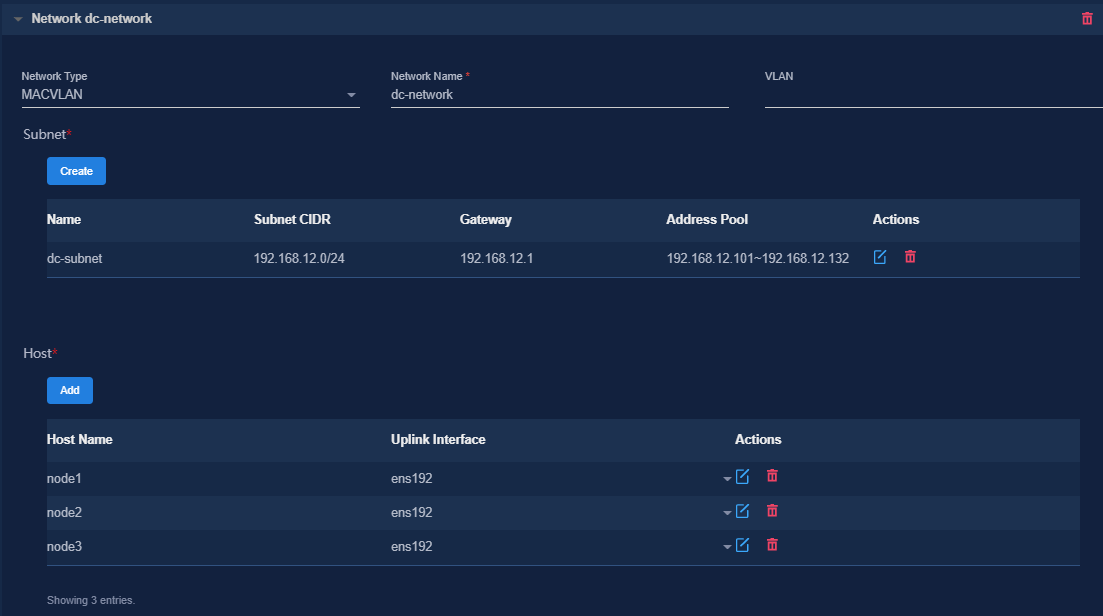

Table 15 shows an example of IP address planning for a single vBGP cluster in a non-RDRS scenario where the management network and service network are converged .

Table 15 IP address planning for the non-RDRS scenario

|

Component |

Network type |

IP addresses |

Remarks |

|

SeerEngine-DC |

MACVLAN (management network) |

Subnet: 192.168.12.0/24 (gateway 192.168.12.1) |

N/A |

|

Network address pool: 192.168.12.101 to 192.168.12.132 |

|||

|

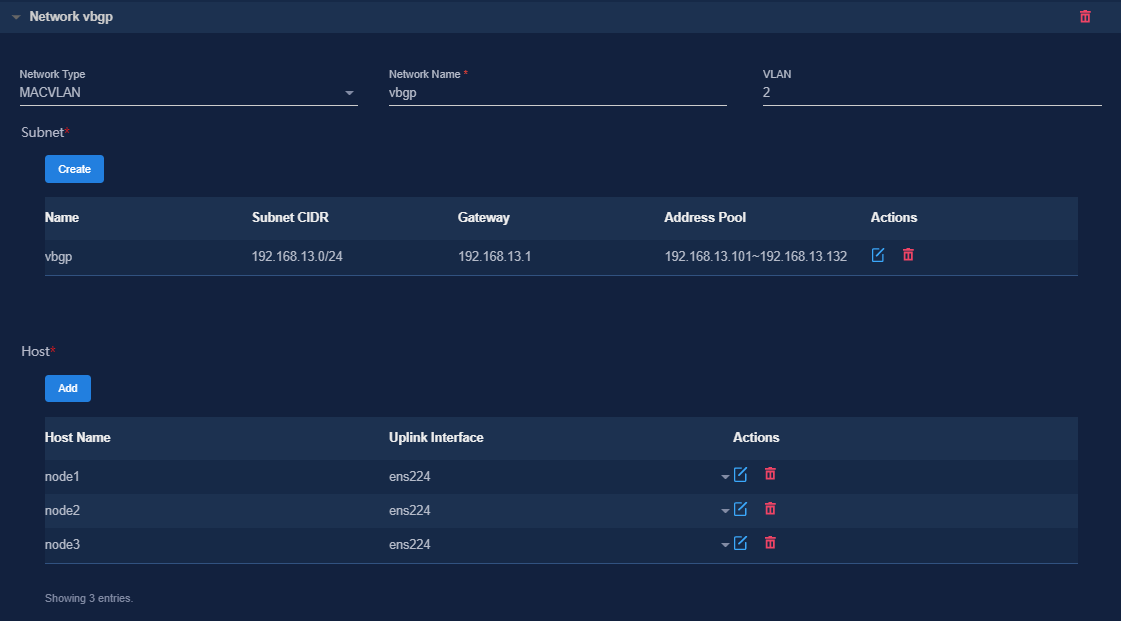

vBGP |

OVS-DPDK (management network) |

Subnet: 192.168.13.0/24 (gateway 192.168.13.1) |

Management network and service network are converged. |

|

Network address pool: 192.168.13.101 to 192.168.13.132 |

|||

|

DTN |

MACVLAN (simulation management network) |

Subnet: 192.168.12.0/24 (gateway 192.168.12.1) |

A separate network interface is required. For management IP address assignment for DTN hosts, see H3C SeerEngine-DC Simulation Network Deployment Guide. |

|

Network address pool: 192.168.12.133 to 192.168.12.133 |

Deploying the controller

1. Log in to the Unified Platform. Click System > Deployment.

2. Obtain the SeerEngine-DC installation packages. Table 16 provides the names of the installation packages. Make sure you select installation packages specific to your server type, x86 or ARM.

Table 16 Installation packages

|

Component |

Installation package name |

Remarks |

|

SeerEngine-DC |

· x86: SeerEngine_DC-version-MATRIX.zip · ARM: SeerEngine_DC-version-ARM64.zip |

Required |

|

vBGP |

· x86: vBGP-version.zip · ARM: vBGP-version-ARM64.zip |

Optional |

|

DTN |

· x86: SeerEngine_DC_DTN-version.zip · ARM: SeerEngine_DC_DTN-version-ARM64.zip |

Optional, for providing simulation services |

|

IMPORTANT: · For some controller versions, the installation packages are released only for one server architecture, x86 or ARM. · The DTN version must be consistent with the SeerEngine-DC version. |

3. Click Upload to upload the installation package and then click Next.

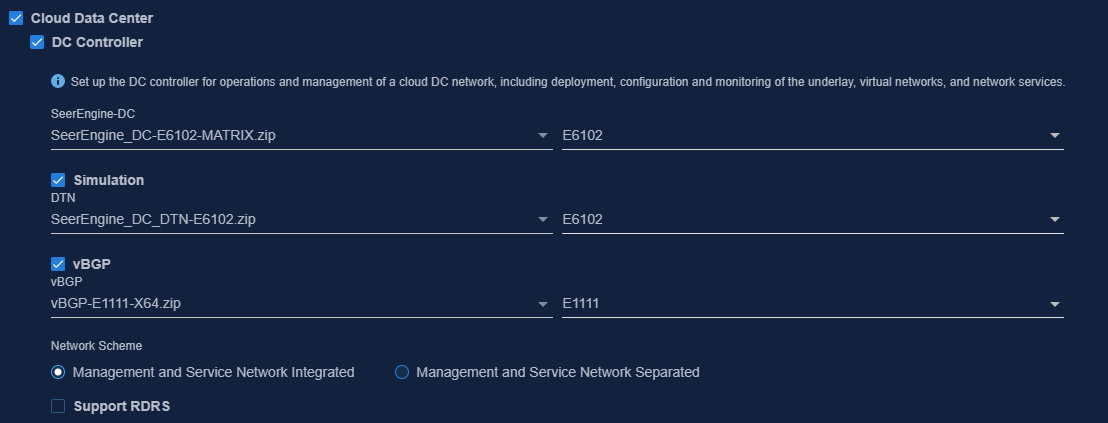

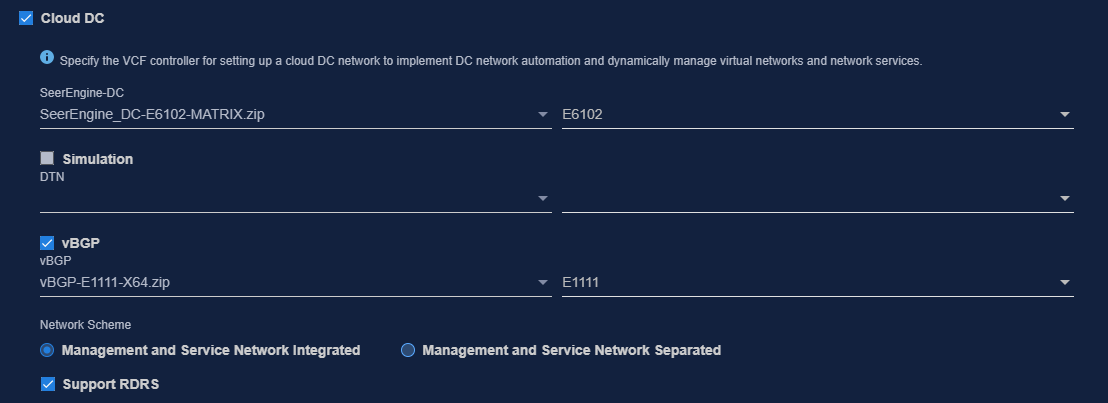

4. Select Cloud Data Center and then select DC Controller. To deploy the vBGP component simultaneously, select vBGP and select a network scheme for vBGP deployment. To deploy the DTN component simultaneously, select Simulation. Then click Next.

Figure 4 Selecting components

|

CAUTION: To avoid malfunction of simulation services, do not delete the worker node on which DTN has been deployed on the Matrix cluster deployment page. |

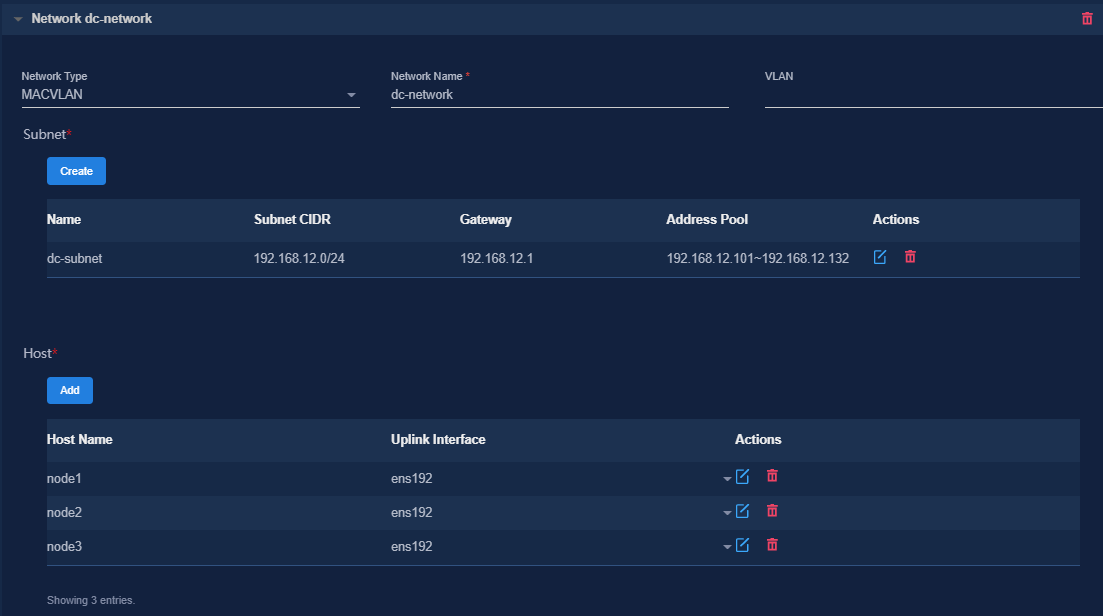

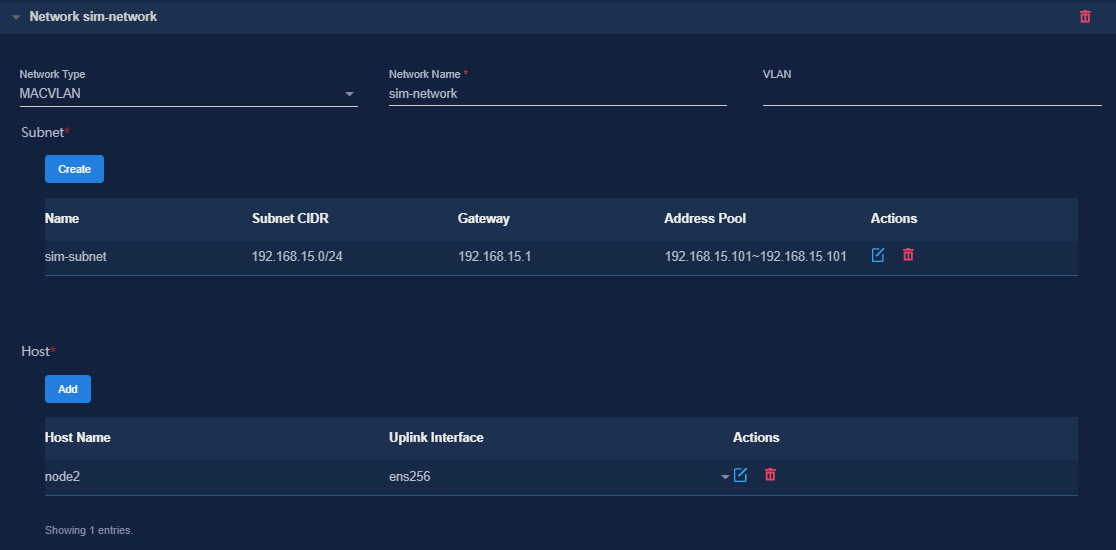

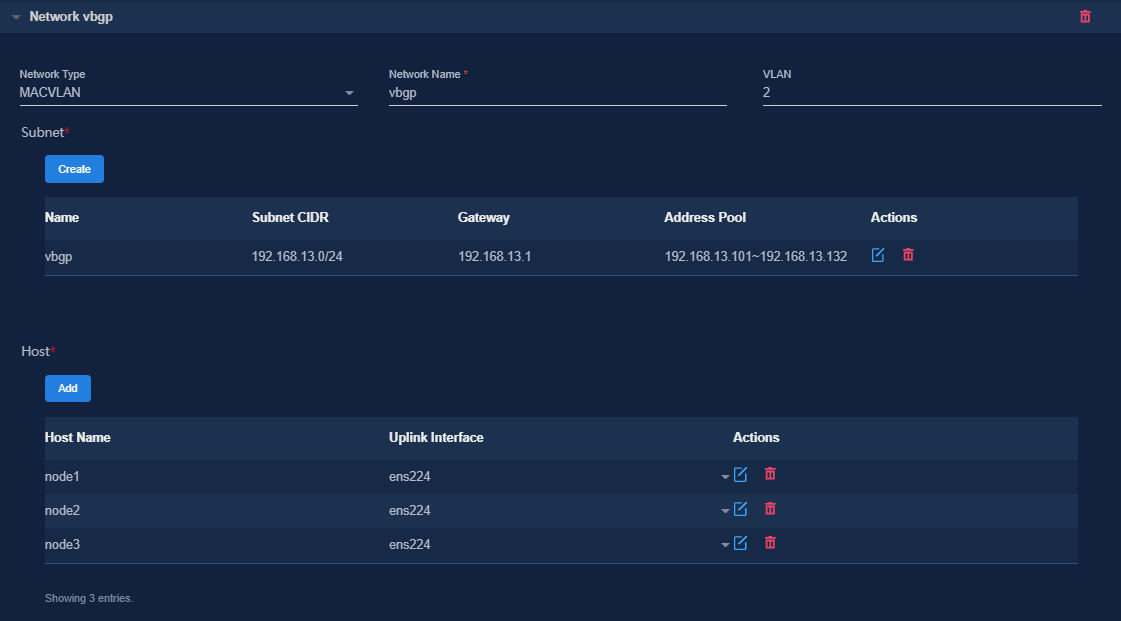

5. Configure the MACVLAN and OVS-DPDK networks and add the uplink interfaces according to the network plan in "Planning the network." If you are not to deploy vBGP, you only need to configure MACVLAN networks.

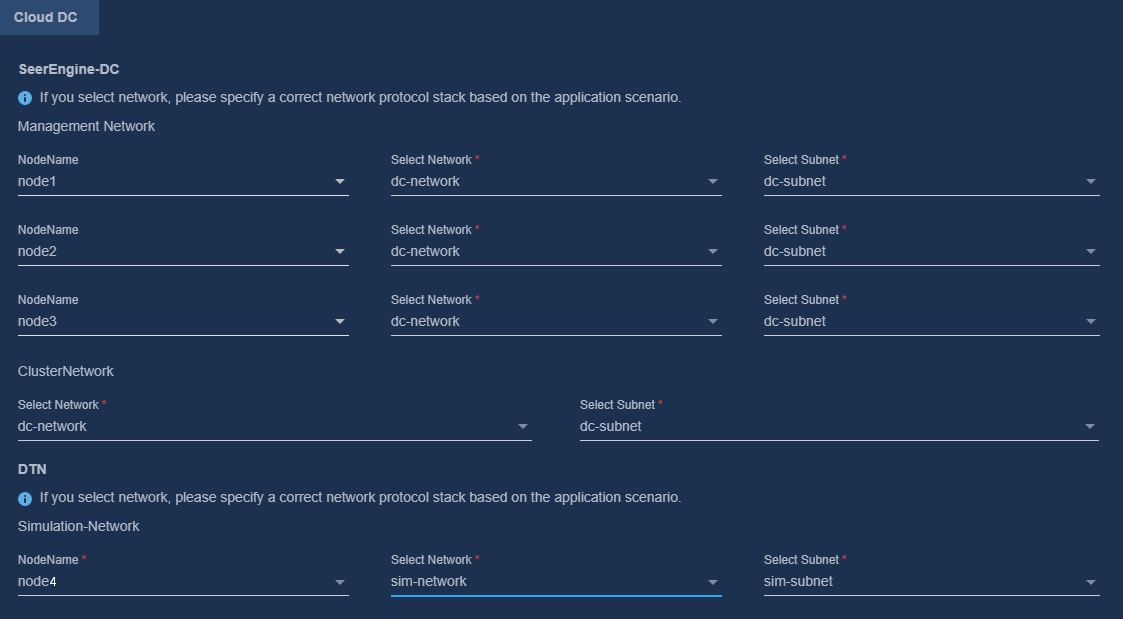

To use simulation services, configure the network settings as follows:

¡ Configure a separate MACVLAN network for the DTN component. Be sure that the subnet IP address pool for the network contains a minimum of one IP address.

¡ If the servers are in standard configuration, the DTN component must have an exclusive use of a worker node server. If the servers are in high-end configuration, the DTN component can have an exclusive use of a worker node server or be deployed on the same master node with a controller. In this example, the DTN component is deployed on a worker node residing on a high-end server.

Figure 5 Configuring a MACVLAN management network for the SeerEngine-DC component

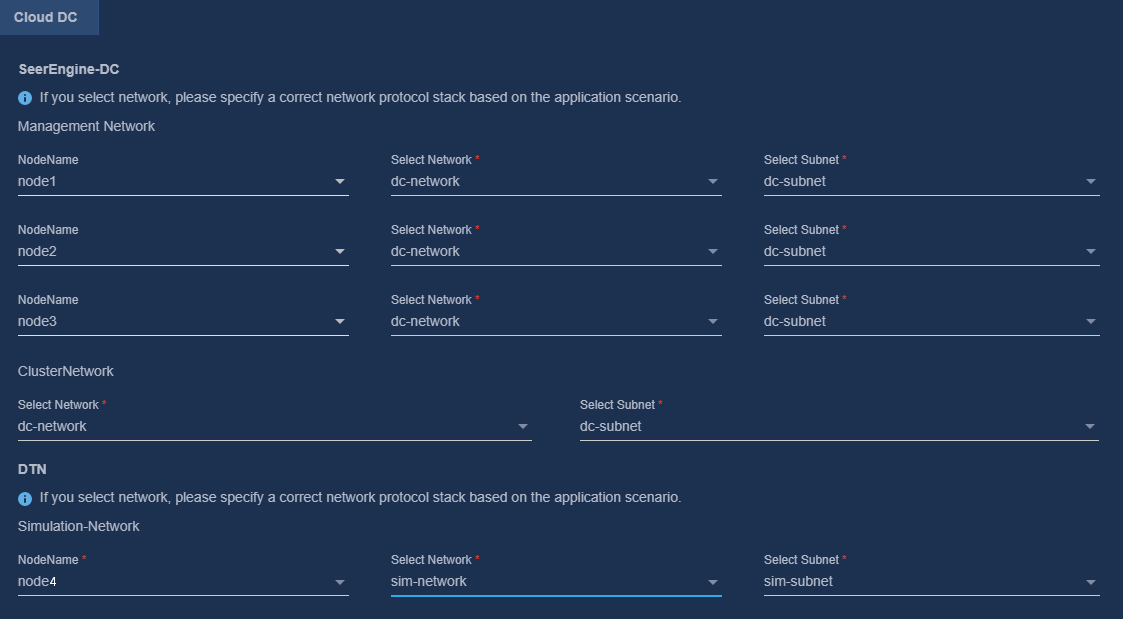

Figure 6 Configuring a MACVLAN management network for the DTN component

Figure 7 Configuring an OVS-DPDK network

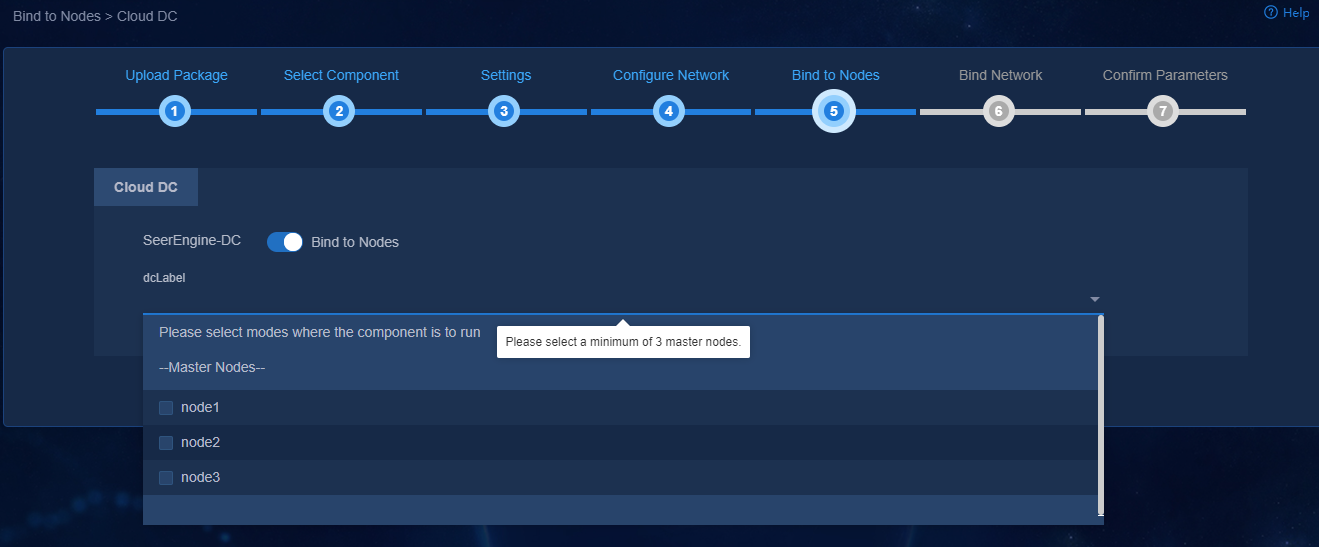

6. (Optional.) On the Bind to Nodes page, select whether to enable node binding. If you enable node binding, select a minimum of three master nodes to host and run microservice pods.

If a resource-intensive component such as SeerAnalyzer is required to be deployed simultaneously with the controller, enable node binding and bind the components to different nodes for better use of server resources.

Figure 8 Enabling node binding

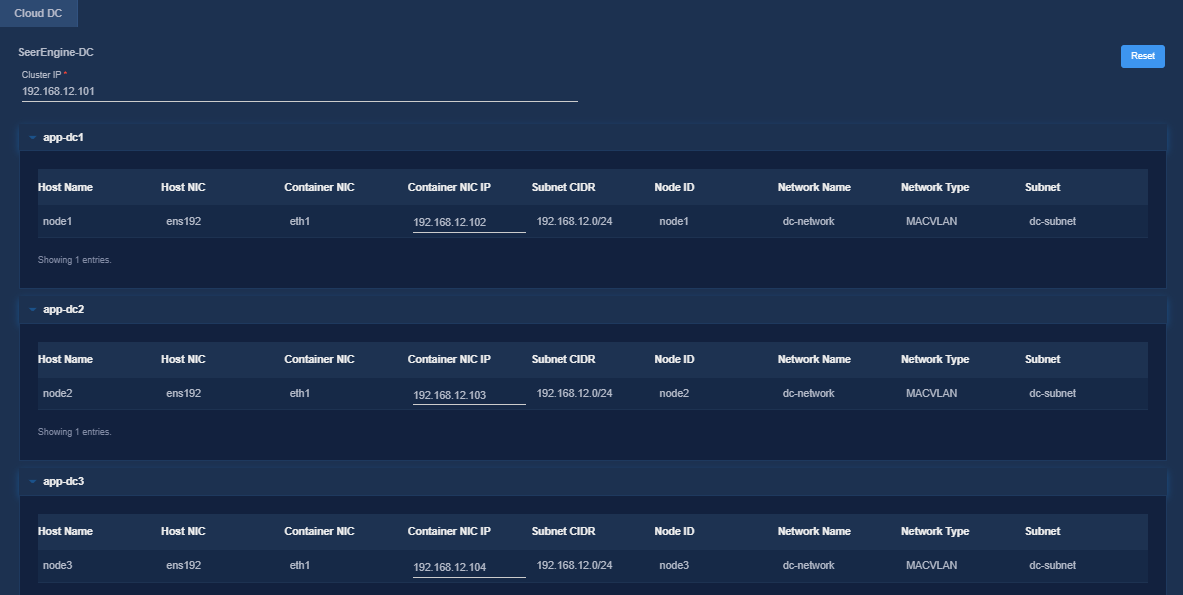

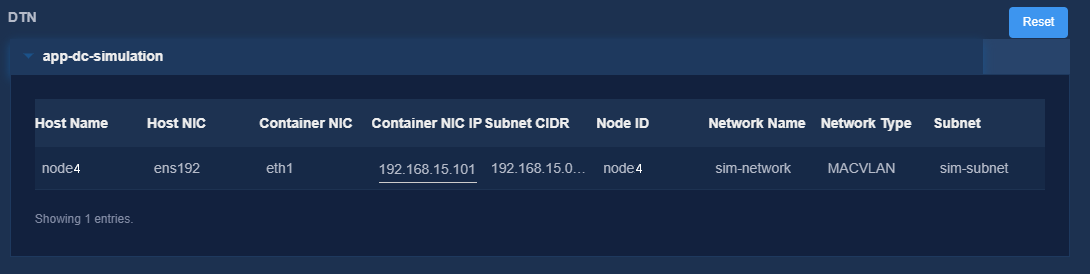

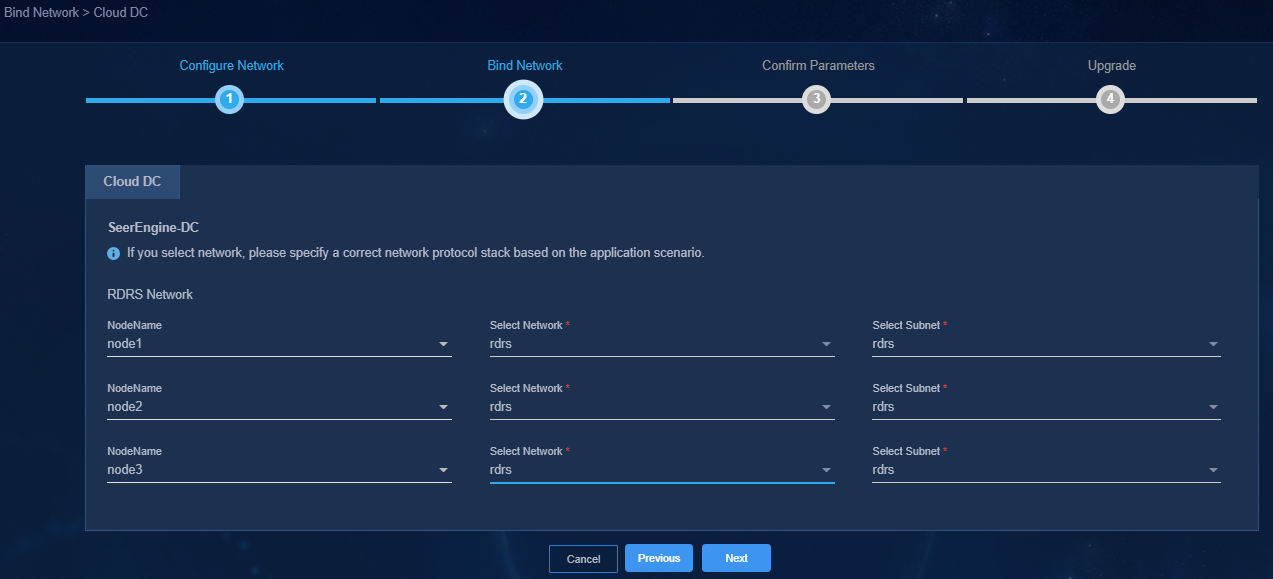

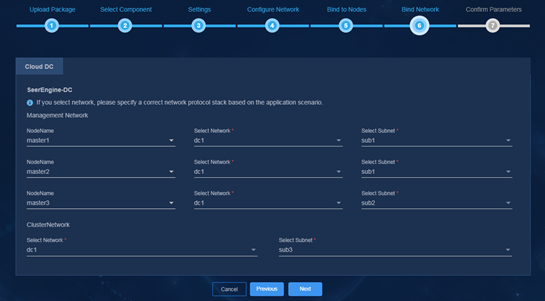

7. Bind networks to the components, assign IP address to the components, specify a network node for the service simulation network, and then click Next.

Figure 9 Binding networks

8. On the Confirm Parameters tab, verify network information and specify a VRRP group ID for the components.

A component automatically obtains an IP address from the IP address pool of the subnet bound to it. To modify the IP address, click Modify and then specify another IP address for the component. The IP address specified must be in the IP address range of the subnet bound to the component.

If vBGP is to be deployed, you are required to specify a VRRP group ID in the range of 1 to 255 for the components. The VRRP group ID must be unique within the same network.

Figure 10 Confirming parameters (SeerEngine-DC)

Figure 11 Confirming parameters (DTN)

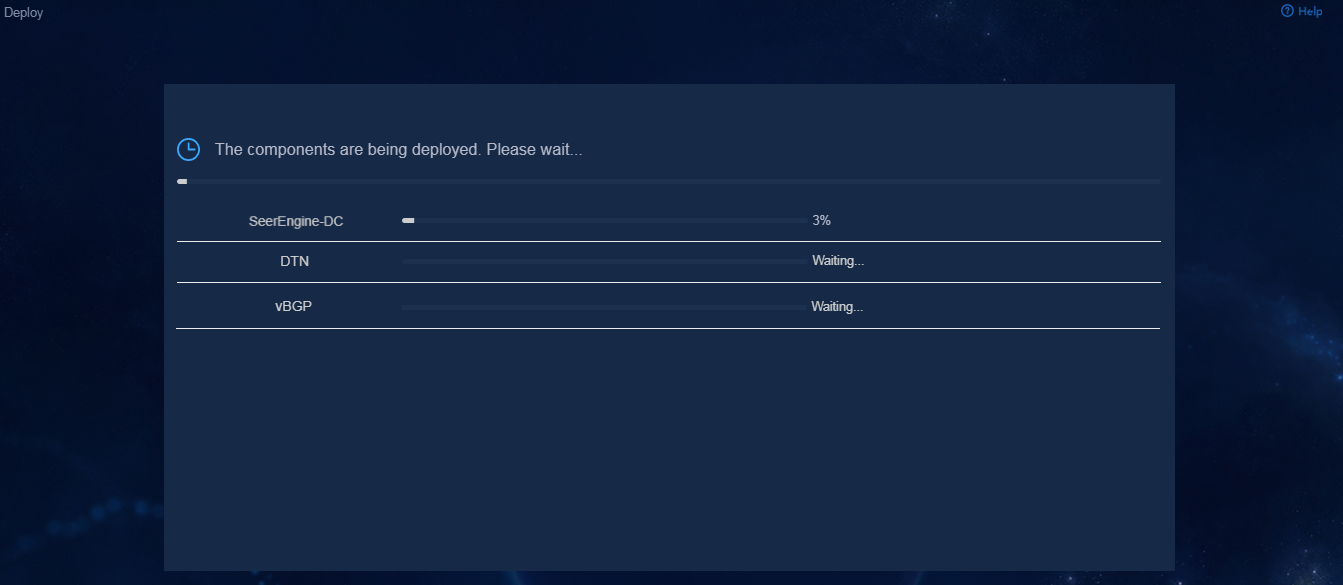

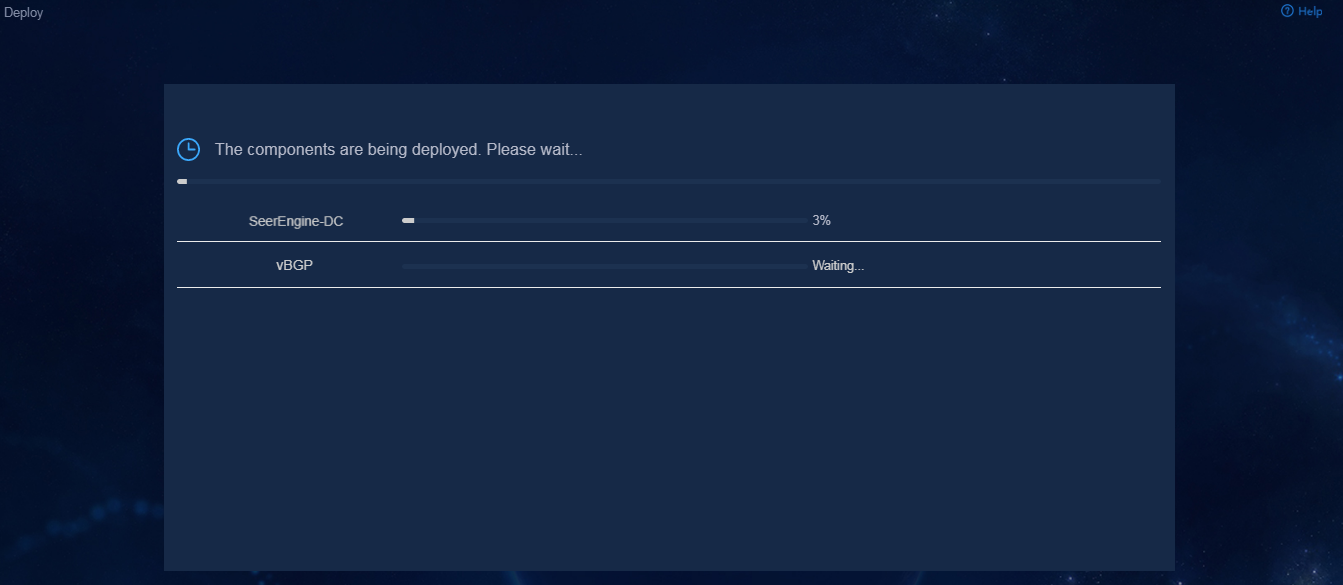

9. Click Deploy.

Figure 12 Deployment in progress

Accessing the controller

After the controller is deployed on the Unified Platform, the controller menu items will be loaded on the Unified Platform. Then you can access the Unified Platform to control and manage the controller.

To access the controller:

1. Enter the address for accessing the Unified Platform in the address bar and then press Enter.

By default, the login address is http://ip_address:30000/central/index.html.

¡ ip_address represents the northbound virtual IP address of the Unified Platform.

¡ 30000 is the port number.

Figure 13 Unified Platform login page

2. Enter the username and password, and then click Log in.

The default username is admin and the default password is Pwd@12345.

Figure 14 Unified Platform dashboard

Registering and installing licenses

Registering and installing licenses for the Unified Platform

For the Unified Platform license registration and installation procedure, see H3C Unified Platform Deployment Guide.

Registering and installing licenses for the controller

After you install the controller, you can use its complete features and functions for a 180-day trial period. After the trial period expires, you must get the controller licensed.

Installing the activation file on the license server

For the activation file request and installation procedure, see H3C Software Products Remote Licensing Guide.

Obtaining licenses

1. Log in to the Unified Platform and then click System > License Management > DC license.

2. Configure the parameters for the license server as described in Table 18.

Table 17 License server parameters

|

Item |

Description |

|

IP address |

Specify the IP address configured on the license server used for internal communication in the cluster. |

|

Port number |

Specify the service port number of the license server. The default value is 5555. |

|

Username |

Specify the client username configured on the license server. |

|

Password |

Specify the client password configured on the license server. |

3. Click Connect to connect the controller to the license server.

The controller will automatically obtain licensing information after connecting to the license server.

Backing up and restoring the controller configuration

You can back up and restore the controller configuration on the Unified Platform. For the procedures, see H3C Unified Platform Deployment Guide.

Upgrading the controller and DTN

|

CAUTION: · If both SeerEngine-DC and DTN require an upgrade, upgrade SeerEngine-DC before DTN. The DTN version must be consistent with the SeerEngine-DC version after the upgrade. · The upgrade might cause service interruption. Be cautious when you perform this operation. · Before upgrading or scaling out the Unified Platform or the controller, specify the manual switchover mode for the RDRS if the RDRS has been created. · Do not upgrade the controllers on the primary and backup sites simultaneously if the RDRS has been created. Upgrade the controller on a site first, and upgrade the controller on another site after data is synchronized between the two sites. · If the simulation network construction page has a display issue after the DTN component is upgraded, clear the browser cache and log in again. · After upgrading the DTN component from E6102 or earlier to E6103 or later, you must reinstall the operating system and reconfigure settings for DTN hosts and delete the original hosts from the simulation network and then reincorporate the hosts. For how to install the operating system and configure settings for DTN hosts, see H3C SeerEngine-DC Simulation Network Environment Deployment Guide. · After upgrading the DTN component from E6202 or earlier to E6203 or later, you must uninstall and reconfigure the DTN hosts and delete the original hosts from the simulation network and then reincorporate the hosts. For how to uninstall and configure DTN hosts, see H3C SeerEngine-DC Simulation Network Environment Deployment Guide. |

This section describes the procedure for upgrading and uninstalling the controller and DTN. For the upgrading and uninstallation procedure for the Unified Platform, see H3C Unified Platform Deployment Guide.

The components can be upgraded on the Unified Platform with the configuration retained.

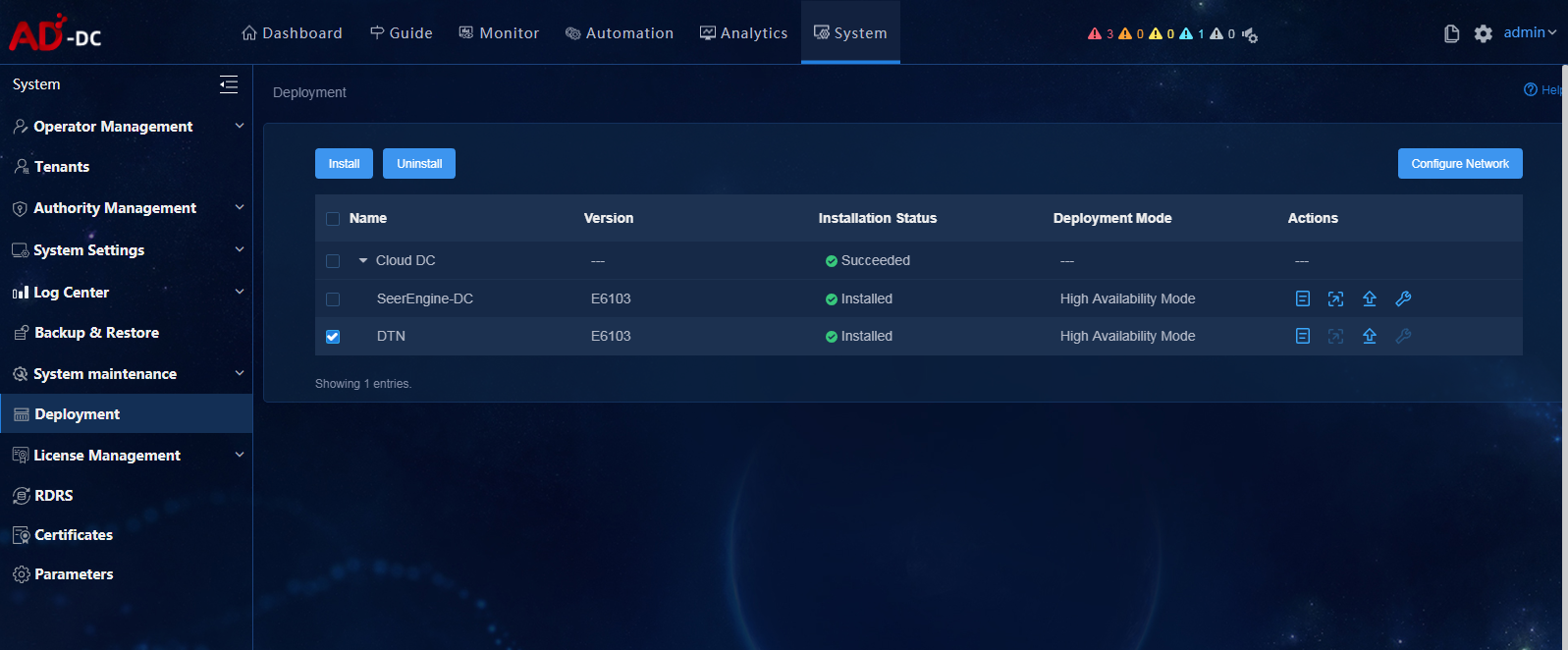

To upgrade the controller and DTN:

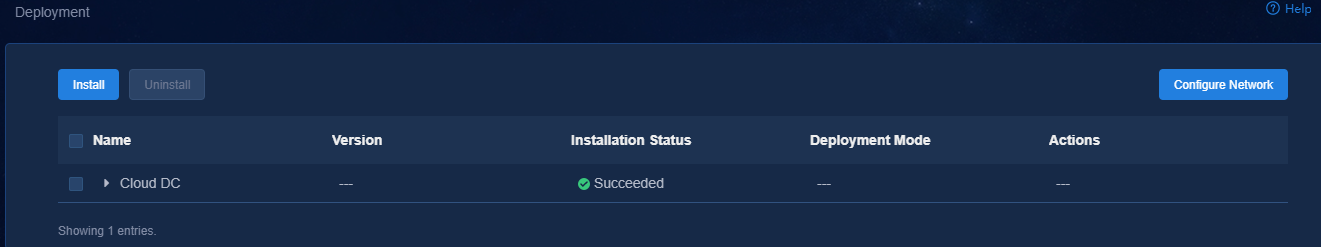

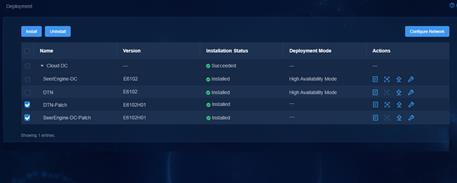

1. Log in to the Unified Platform. Click System > Deployment.

Figure 15 Deployment page

2. Click the left chevron button ![]() for Cloud DC to

expand component information. Then upgrade SeerEngine-DC and DTN.

for Cloud DC to

expand component information. Then upgrade SeerEngine-DC and DTN.

a. Click the ![]() icon for the

SeerEngine-DC component to upgrade the SeerEngine-DC component.

icon for the

SeerEngine-DC component to upgrade the SeerEngine-DC component.

- If the controller already supports RDRS, the upgrade page is displayed.

# Upload and select the installation package.

# Select whether to enable Add Master Node-Component Bindings. The nodes that have been selected during controller deployment cannot be modified or deleted.

# Click Upgrade.

# If the upgrade fails, click Roll Back to roll back to the previous version.

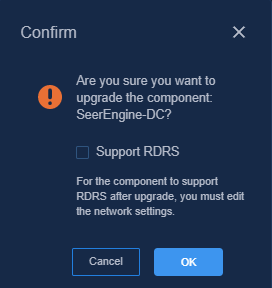

- If the controller does not support RDRS, the system displays a confirmation dialog box with a Support RDRS option.

If you leave the Support RDRS option unselected, the upgrade page is displayed. Proceed with the upgrade.

If you select the Support RDRS option, the system will guide you to upgrade the component to support RDRS. For the upgrade procedure, see "Upgrading SeerEngine-DC to support RDRS."

b. Click the ![]() icon for the DTN

component to upgrade the DTN component.

icon for the DTN

component to upgrade the DTN component.

# Upload and select the installation package.

# Click Upgrade.

# If the upgrade fails, click Roll Back to roll back to the previous version.

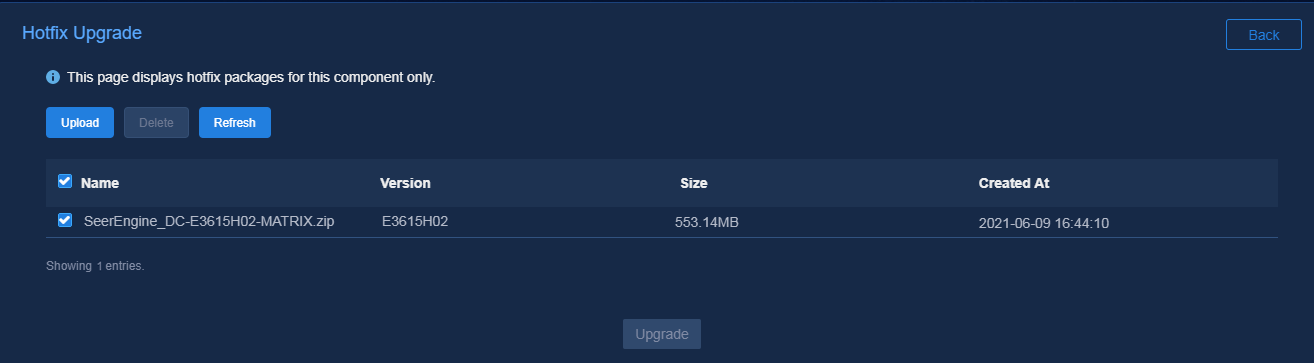

Hot patching the controller

|

CAUTION: · Hot patching the controller might cause service interruption. To minimize service interruption, select the time to hot patch the controller carefully. · You cannot upgrade the controller to support RDRS through hot patching. · If you are to hot patch the controller after the RDRS is created, first specify the manual switchover mode for the RDRS. · Do not hot patch the controllers at the primary and standby sites at the same time after the RDRS is created. Only after the controller at a site is upgraded and data is synchronized, you can upgrade the controller at the other site. |

On the United Platform, you can hot patch the controller with the configuration retained.

To hot patch the controller:

1. Log in to the Unified Platform. Click System > Deployment.

Figure 16 Deployment page

2. Click the left chevron button ![]() of the controller to

expand controller information, and then click the hot patching icon

of the controller to

expand controller information, and then click the hot patching icon ![]() .

.

3. Upload the patch package and select the patch of the required version, and then click Upgrade.

Figure 17 Hot patching page

4. If the upgrade fails, click Roll Back to roll back to the previous version or click Terminate to terminate the upgrade.

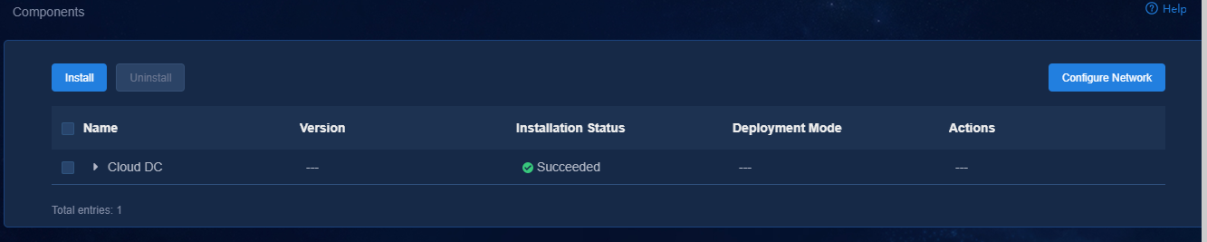

Uninstalling SeerEngine-DC and DTN

When you uninstall the SeerEngine-DC controller, DTN will be uninstalled simultaneously.

To uninstall SeerEngine-DC and DTN:

1. Log in to the Unified Platform. Click System > Deployment.

2. Click the ![]() icon to the left of the controller name and then

click Uninstall.

icon to the left of the controller name and then

click Uninstall.

Figure 18 Uninstalling the controller and DTN

Uninstalling the DTN component only

The DTN component can be uninstalled separately.

To uninstall the DTN component only:

1. Log in to the Unified Platform. Click System > Deployment.

2. Click the ![]() icon to the left of the DTN

component and then click Uninstall.

icon to the left of the DTN

component and then click Uninstall.

Figure 19 Uninstalling the DTN component

Uninstalling a hot patch

1. Log in to the Unified Platform. Click System > Deployment.

2. Select a patch, and then click Uninstall.

Figure 20 Uninstalling a hot patch

RDRS

About RDRS

A remote disaster recovery system (RDRS) provides disaster recovery services between the primary and backup sites. The controllers at the primary and backup sites back up each other. When the RDRS is operating correctly, data is synchronized between the site providing services and the peer site in real time. When the service-providing site becomes faulty because of power, network, or external link failure, the peer site immediately takes over to ensure service continuity.

The RDRS supports the following switchover modes:

· Manual switchover—In this mode, the RDRS does not automatically monitor state of the controllers on the primary or backup site. You must manually control the controller state on the primary and backup sites by specifying the Switch to Primary or Switch to Backup actions. This mode requires deploying the Unified Platform of the same version on the primary and backup sites.

· Auto switchover with arbitration—In this mode, the RDRS automatically monitors state of the controllers. Upon detecting a controller or Unified Platform failure (because of site power or network failure), the RDRS automatically switches controller state at both sites by using the arbitration service. This mode also supports manual switchover. To use this mode, you must deploy the Unified Platform of the same version at the primary and backup sites and the arbitration service as a third-party site.

The arbitration service can be deployed on the same server as the primary or backup site. However, when the server is faulty, the arbitration service might stop working. As a result, RDRS auto switchover will fail. As a best practice, configure the arbitration service on a separate server.

RDRS deployment procedure at a glance

1. Deploy the primary and backup sites.

2. Configure the RDRS settings for the controllers at the primary and backup sites.

3. Deploy the third-party arbitration service.

4. Create an RDRS.

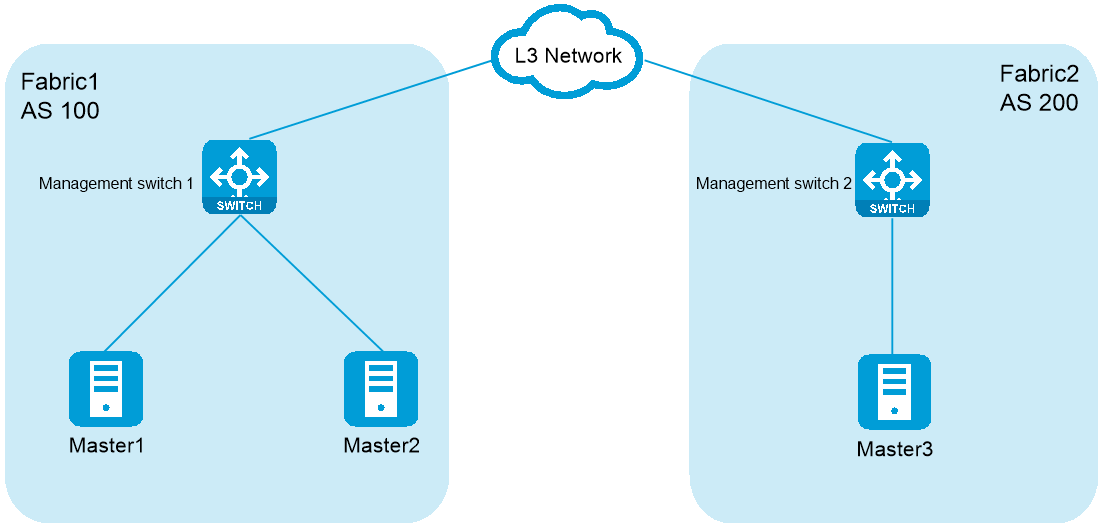

Planning the network

|

CAUTION: · To use vBGP, make sure the primary and backup sites have a consistent OVS-DPDK network scheme and the same number of vBGP clusters. · In an RDRS scenario, if you configure DHCP relay on the management switch for automated underlay network deployment, you must specify the controller clusters' IPs of both the primary and backup sites as relay servers. · To deploy RDRS for the controllers, make sure the primary and backup sites uses different IP addresses for the RDRS networks. |

Table 18 Network types and numbers used by components at the primary/ backup site in the RDRS scenario

|

Component |

Network type |

Number of networks |

Remarks |

|

|

SeerEngine-DC |

MACVLAN (management network) |

1 |

N/A |

|

|

MACVLAN (RDRS network) |

1 |

· Used for carrying traffic for real-time data synchronization between the primary and backup sites. · Used for communication between the RDRS networks at the primary and backup sites · As a best practice, use a separate network interface. |

||

|

vBGP |

Management network and service network converged |

OVS-DPDK (management network) |

1*number of vBGP clusters |

· Used for communication between the vBGP and SeerEngine-DC components and service traffic transmission. · Each vBGP cluster requires a separate OVS-DPDK management network. |

|

Management network and service network separated |

OVS-DPDK (management network) |

1*number of vBGP clusters |

· Used for communication between the vBGP and SeerEngine-DC components. · Each vBGP cluster requires a separate OVS-DPDK management network. |

|

|

OVS-DPDK (service network) |

1*number of vBGP clusters |

· Used for service traffic transmission. · Each vBGP cluster requires a separate OVS-DPDK service network. |

||

Figure 21 Cloud DC networks in an RDRS scenario (vBGP deployed)

Table 19 IP addresses required for the networks at the primary/backup site in the RDRS scenario

|

Component |

Network type |

Maximum team members |

Default team members |

Number of IP addresses |

Remarks |

|

|

SeerEngine-DC |

MACVLAN (management network) |

32 |

3 |

Number of cluster nodes + 1 (cluster IP) |

N/A |

|

|

MACVLAN (RDRS network) |

32 |

3 |

Number of cluster nodes |

A separate network interface is required. |

||

|

vBGP |

Management network and service network converged |

OVS-DPDK (management network) |

2 |

2 |

Number of vBGP clusters*number of cluster nodes + Number of vBGP clusters (cluster IP) |

Each vBGP cluster requires a separate OVS-DPDK management network. |

|

Management network and service network separated |

OVS-DPDK (management network) |

2 |

2 |

Number of vBGP clusters*number of cluster nodes + Number of vBGP clusters (cluster IP) |

Each vBGP cluster requires a separate OVS-DPDK management network. |

|

|

OVS-DPDK (service network) |

2 |

2 |

Number of vBGP clusters*number of cluster nodes |

Each vBGP cluster requires a separate OVS-DPDK service network. |

||

Table 21 shows an example of IP address planning for a single vBGP cluster in an RDRS scenario where the management network and service network are converged.

Table 20 IP address planning for the RDRS scenario

|

Site |

Component |

Network |

IP address |

Remarks |

|

Primary site |

SeerEngine-DC |

MACVLAN (management network) |

Subnet: 192.168.12.0/24 (gateway 192.168.12.1) |

Make sure the primary and backup sites use different IP addresses for the RDRS networks and controllers. |

|

Address pool: 192.168.12.101 to 192.168.12.132 |

||||

|

MACVLAN (RDRS network) |

Subnet: 192.168.16.0/24 (gateway 192.168.16.1) |

As a best practice, use a separate network interface. |

||

|

Address pool: 192.168.16.101 to 192.168.16.132 |

||||

|

vBGP |

OVS-DPDK (management network) |

Subnet: 192.168.13.0/24 (gateway 192.168.13.1) |

N/A |

|

|

Address pool: 192.168.13.101 to 192.168.13.132 |

||||

|

Backup site |

SeerEngine-DC |

MACVLAN (management network) |

Subnet: 192.168.12.0/24 (gateway 10.0.234.254) |

Make sure the primary and backup sites use different IP addresses for the RDRS networks and controllers. |

|

Address pool: 192.168.12.133 to 192.168.12.164 |

||||

|

MACVLAN (RDRS network) |

Subnet: 192.168.16.0/24 (gateway 192.168.16.1) |

As a best practice, use a separate network interface. |

||

|

Address pool: 192.168.16.133 to 192.168.16.164 |

||||

|

vBGP |

OVS-DPDK (management network) |

Subnet: 192.168.13.0/24 (gateway 192.168.13.1) |

N/A |

|

|

Address pool: 192.168.13.133 to 192.168.13.164 |

Deploying the primary and backup sites

Restrictions and guidelines

Follow these restrictions and guidelines when you deploy the primary and backup sites and a site:

· The Unified Platform version, transfer protocol (HTTP or HTTPS), username and password, and IP version of the primary and backup sites must be the same.

· The arbitration service package on the site must match the Unified Platform version on the primary and backup sites.

· To use the auto switchover with arbitration mode, you must deploy a standalone Unified Platform as the site, and deploy arbitration services on the site.

· To use the allowlist feature in an RDRS scenario, you must add the IP addresses of all nodes on the backup site to the allowlist on the primary site, and add the IP addresses of all nodes on the primary site to the allowlist on the backup site.

Procedure

This procedure uses a separate server as the site and deploys the Unified Platform in standalone mode on this site.

To deploy the primary and backup sites and a site:

1. Deploy Matrix on primary and backup sites and the site. For the deployment procedure, see H3C Unified Platform Deployment Guide.

2. Deploy the Unified Platform on primary and backup sites. Specify the same NTP server for the primary and backup sites. For the deployment procedure, see H3C Unified Platform Deployment Guide.

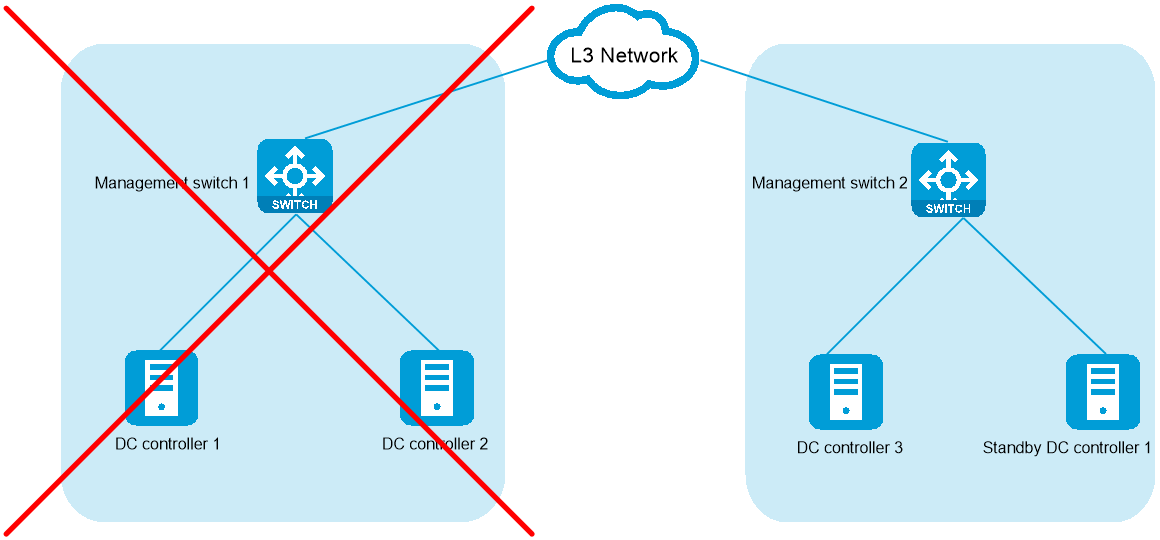

Configuring the RDRS settings for the controllers at the primary and backup sites

Restrictions and guidelines

If the controller installed on the specified backup site does not support disaster recovery or is not in backup state, remove the controller and install it again.

Procedure

1. Log in to the Unified Platform. Click System > Deployment.

2. Obtain the SeerEngine-DC installation package.

The SeerEngine-DC installation packages used for the primary and backup sites must be consistent in version and name.

3. Click Upload to upload the installation package and then click Next.

4. Select Cloud DC and DC controller. Then select the Support for RDRS network scheme. To deploy the vBGP component simultaneously, select vBGP and select the Management and service network converged network scheme for the vBGP component. Then click Next.

Figure 22 Selecting components

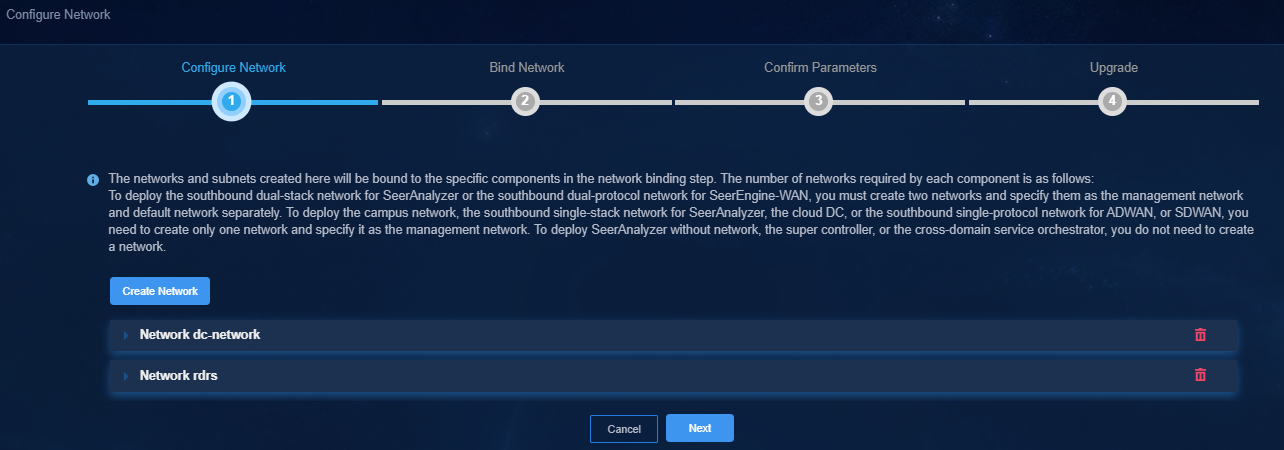

5. Configure the networks required by the components and add the uplink interfaces according to the network plan in "Planning the network."

¡ Configure a separate MACVLAN as the RDRS network.

¡ To deploy vBGP, configure an OVS-DPDK network. Make sure the network solutions are the same at the primary and backup sites.

The following shows network configurations at the primary site.

Figure 23 Configuring a MACVLAN management network for the SeerEngine-DC controller

Figure 24 Configuring an RDRS network

Figure 25 Configuring an OVS-DPDK network

6. (Optional.) On the Bind Node page, select whether to enable node binding. If you enable node binding, select a minimum of three master nodes to host and run microservice pods.

If a resource-intensive component such as SeerAnalyzer is required to be deployed simultaneously with the controller, enable node binding and bind the components to different nodes for better use of server resources.

7. Bind networks to the components and assign IP address to the components. Then click Next.

Figure 26 Binding networks (1)

8. On the Confirm Parameters page, verify network information and specify the RDRS status and a VRRP group ID for the components.

A component automatically obtains an IP address from the IP address pool of the subnet bound to it. To modify the IP address, click Modify and then specify another IP address for the component. The IP address specified must be in the IP address range of the subnet bound to the component.

Configure the RDRS status for the controllers:

¡ Select Primary from the Status in RDRS list for a controller at the primary site.

¡ Select Backup from the Status in RDRS list for a controller at the backup site.

If vBGP is to be deployed, you are required to specify a VRRP group ID in the range of 1 to 255 for the components. The VRRP group ID must be unique within the same network.

9. Click Deploy.

Figure 27 Deployment in progress

Deploying the third-party arbitration service

Preparing for deployment

Hardware requirements

The third-party site can be deployed on a physical server. Table 22 describes the hardware requirements for a server to host the third-party site.

Table 21 Hardware requirements for a server to host the third-party site

|

CPU |

Memory size |

Drive |

Network interface |

|

x86-64 (Intel64/AMD64) 2 cores 2.0 GHz or above |

16 GB or above |

The drives must be configured in RAID 1. · Drive configuration option 1: ¡ System drive: 2 × 480 GB SSDs configured in RAID1 that provides a minimum total drive size of 256 GB. ¡ ETCD drive: 2 × 480 GB SSDs, configured in RAID1 that provides a minimum total drive size of 20 GB. (Installation path: /var/lib/etcd.) · Drive configuration option 2: ¡ System drive: 2 × 600 GB 7.2K RPM or above HDDs configured in RAID 1 that provides a minimum total drive size of 256 GB ¡ ETCD drive: 2 × 600 GB 7.2K RPM or above HDDs that provides a minimum total drive size of 20 GB ¡ Storage controller: 1 GB cache, power fail protected with a supercapacitor installed |

1 × 10 Gbps or above |

Software requirements

As a best practice, deploy the third-party arbitration service on the CentOS 7.0 system. The correct operation of the third-party arbitration service requires installation of the CentOS 7.0 software dependencies described in Table 23.

Table 22 CentOS 7.0 software dependencies and their versions

|

Software dependency name |

Version |

|

java openjdk |

1.8.0 |

|

Zip |

3.0 |

|

Unzip |

6.0 |

|

docker-ce |

18.09.6 |

|

Containerd |

1.2.13 |

Deploying the third-party arbitration service

Restrictions and guidelines

Make sure the application software package at the third-party site matches the SNA Center version at the primary and backup sites.

To avoid service failure, do not change the system time after the deployment.

Execution of the ifconfig command on network interfaces might cause loss of default routes. For correct deployment and operation of the third-party arbitration service, use the ifup and ifdown commands to configure network interfaces.

If multiple network interfaces exist on the node that hosts the third-party arbitration service, make sure all other network interfaces except the network interface used for the third-party arbitration service are down. If such a network interface is up, use the ifdown command to shut down it.

Pre-installation checklist

Before deploying the third-party arbitration service, check the environment against the checklist described in Table 24 to be sure that all requirements are met.

Table 23 Pre-installation checklist

|

Item |

Requirements |

|

|

Server |

Hardware |

The hardware of the server, including CPUs, memory, disks, and network interfaces, meets the requirements. |

|

Software |

· The version of the operating system is as required. · The system time settings are configured correctly. As a best practice, configure NTP for time synchronization and make sure the devices on the network synchronize to the same clock source. · Network settings, including IP addresses, have been configured correctly. |

|

|

Whether the third-party arbitration service has been installed in the system |

If the third-party arbitration service has been installed in the system, uninstall and reinstall the service again. |

|

|

Network interface |

Make sure the service has an exclusive use of a network interface. No subinterfaces or subnet IPs have been configured on the network interface. |

|

|

IP address |

The IP addresses of other interfaces on the node that hosts the third-party arbitration service must not be on the same network segment with the IP address used for the third-party arbitration service. |

|

|

Time zone |

For the arbitration service to function correctly, make sure the third-party arbitration service and the primary and backup sites are in the same time zone. You can use the timedatectl command to view the system time zone of each node. |

|

|

Power supply |

To protect the file system from irreversible damages (such as damage of the configuration file of the docker.service, etcd.service, or chrony.conf component), do not restart the node, power off the node forcibly, or reset the VM during the deployment and operation of the third-party arbitration service. |

|

Uploading the third-party arbitration service installation package

Obtain and copy the third-party arbitration service installation package to the installation directory on the server, or upload the installation package to the installation directory by using a file transfer protocol such as FTP.

The installation package is named in the SeerEngine_DC_ARBITRATOR-version.zip (applicable to a x86 system) or SeerEngine_DC_ARBITRATOR-version-ARM64.zip (applicable to an ARM system) format.

For some controller versions, the installation package is released only for one server architecture, x86 or ARM.

|

CAUTION: To avoid installation package damage, select the binary code if you use FTP or TFTP to upload or download the installation package. |

Installing the third-party arbitration service

The system supports the use of the root user or a non-root user to install the third-party arbitration service. As a best practice, use the root user for the installation. To use a non-root user to install the software package, you must use the root user to modify the configuration file by using the visudo command and assign installation permission to the non-root user by adding the following configuration items at the end of the configuration file:

[root@rdr01 ~]# visudo

<username> ALL=(root) NOPASSWD:/bin/bash

The following procedure uses the root user for the installation. To use a non-root user for the installation, execute the sudo ./install.sh command.

1. Access the directory where the third-party arbitration service installation package is saved and install the third-party arbitration service.

[root@rdr01 ~]# unzip SeerEngine_DC_ARBITRATOR-E3611.zip

[root@rdr01 ~]# cd SeerEngine_DC_ARBITRATOR-E3611/

[root@rdr01 SeerEngine_DC_ARBITRATOR-E3611]# ./install.sh

Installing...

2021-03-04 16:42:52 [info] -----------------------------------

2021-03-04 16:42:52 [info] SeerEngine_DC_ARBITRATOR-E3611

2021-03-04 16:42:52 [info] H3Linux Release 1.1.2

2021-03-04 16:42:52 [info] Linux 3.10.0-957.27.2.el7.x86_64

2021-03-04 16:42:52 [info] -----------------------------------

2021-03-04 16:42:53 [warn] To avoid unknown error, do not interrupt this installation procedure.

2021-03-04 16:42:57 [info] Checking environment...

2021-03-04 16:42:57 [info] Decompressing rdrArbitrator package...

Complete!

2. Verify that the installation is successful. If the installation succeeds, the result is displayed as follows:

[root@rdr01 SeerEngine_DC_ARBITRATOR-E3611]# jps |grep rdr

19761 rdrs3rd-1.0.0.jar

[root@rdr01 SeerEngine_DC_ARBITRATOR-E3611]# docker ps |grep etcd

31b40e2d521d rdr-arbitrator/etcd3rd:1.0.0 "/entrypoint.sh" 5 minutes ago Up 5 minutes etcd3rd

Uninstalling the third-party arbitration service

1. Access the CLI of the operating system and execute the following commands to uninstall the third-party arbitration service.

[root@rdr01 ~]# cd SeerEngine_DC_ARBITRATOR-E3611 //Directory where the third-party arbitration service package is decompressed

[root@rdr01 SeerEngine_DC_ARBITRATOR-E3611]# ./uninstall.sh

Uninstalling…

Uninstalling...

2021-03-04 16:50:09 [info] Stopping rdrArbitrator service...

2021-03-04 16:50:09 [info] stop container 31b40e2d521d in docker daemon

2021-03-04 16:50:20 [info] stop container 31b40e2d521d in docker daemon success

2021-03-04 16:50:20 [info] Deleting image rdr-arbitrator/etcd3rd:1.0.0 in docker daemon

2021-03-04 16:50:20 [info] Delete image rdr-arbitrator/etcd3rd:1.0.0 in docker daemon success

2021-03-04 16:50:20 [info] Deleting rdrArbitrator dir...

Complete!

2. Verify that the uninstallation is successful.

[root@rdr01 SeerEngine_DC_ARBITRATOR-E3611]# jps |grep rdr

[root@rdr01 SeerEngine_DC_ARBITRATOR-E3611]# docker ps |grep etcd

Upgrading the third-party arbitration service

|

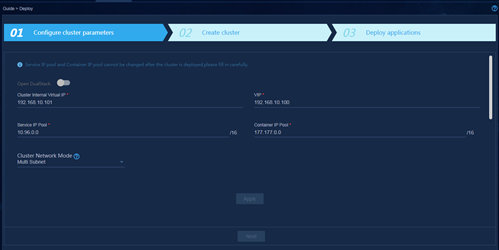

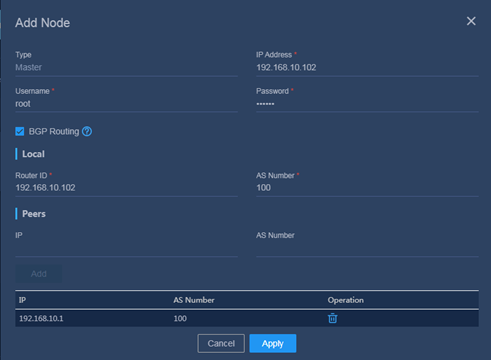

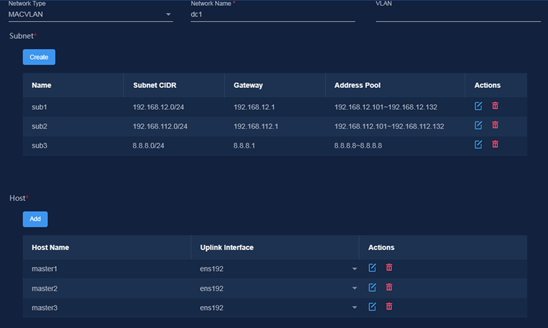

CAUTION: Specify the manual switchover mode for the RDRS before the upgrade. |