Using a ping command to test network connectivity

Using a tracert command to identify failed or all nodes in a path

Debugging information control switches

Configuring NQA operations on the NQA client

NQA operation configuration task list

Configuring the ICMP echo operation

Configuring the ICMP jitter operation

Configuring the DHCP operation

Configuring the HTTP operation

Configuring the UDP jitter operation

Configuring the SNMP operation

Configuring the UDP echo operation

Configuring the UDP tracert operation

Configuring the voice operation

Configuring the DLSw operation

Configuring the path jitter operation

Configuring optional parameters for the NQA operation

Configuring the collaboration feature

Configuring threshold monitoring

Configuring the NQA statistics collection feature

Configuring the saving of NQA history records

Scheduling the NQA operation on the NQA client

Configuring NQA templates on the NQA client

NQA template configuration task list

Configuring the TCP half open template

Configuring the RADIUS template

Configuring optional parameters for the NQA template

Displaying and maintaining NQA

ICMP echo operation configuration example

ICMP jitter operation configuration example

DHCP operation configuration example

DNS operation configuration example

FTP operation configuration example

HTTP operation configuration example

UDP jitter operation configuration example

SNMP operation configuration example

TCP operation configuration example

UDP echo operation configuration example

UDP tracert operation configuration example

Voice operation configuration example

DLSw operation configuration example

Path jitter operation configuration example

NQA collaboration configuration example

ICMP template configuration example

DNS template configuration example

TCP template configuration example

TCP half open template configuration example

UDP template configuration example

HTTP template configuration example

FTP template configuration example

RADIUS template configuration example

Configuration restrictions and guidelines

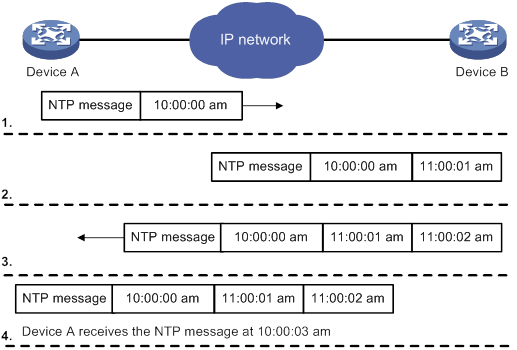

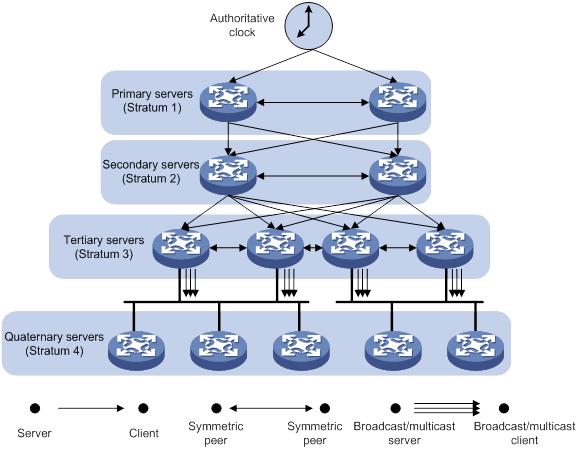

Configuring NTP association mode

Configuring NTP in client/server mode

Configuring NTP in symmetric active/passive mode

Configuring NTP in broadcast mode

Configuring NTP in multicast mode

Configuring access control rights

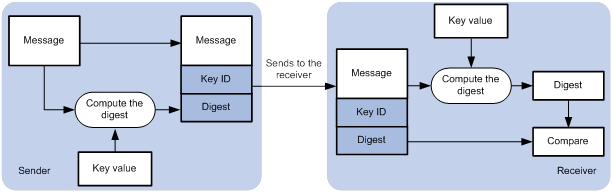

Configuring NTP authentication

Configuring NTP authentication in client/server mode

Configuring NTP authentication in symmetric active/passive mode

Configuring NTP authentication in broadcast mode

Configuring NTP authentication in multicast mode

Configuring NTP optional parameters

Specifying the source interface for NTP messages

Disabling an interface from receiving NTP messages

Configuring the maximum number of dynamic associations

Setting a DSCP value for NTP packets

Configuring the local clock as a reference source

Displaying and maintaining NTP

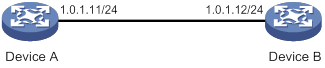

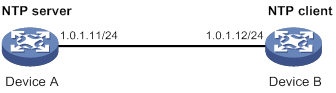

NTP client/server mode configuration example

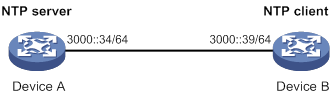

IPv6 NTP client/server mode configuration example

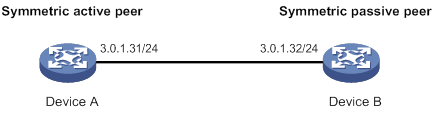

NTP symmetric active/passive mode configuration example

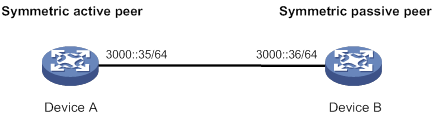

IPv6 NTP symmetric active/passive mode configuration example

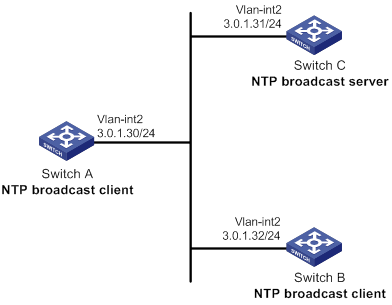

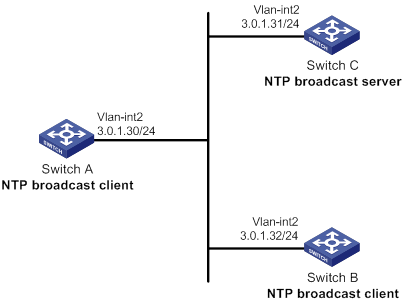

NTP broadcast mode configuration example

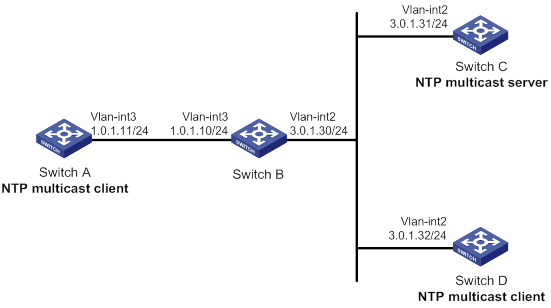

NTP multicast mode configuration example

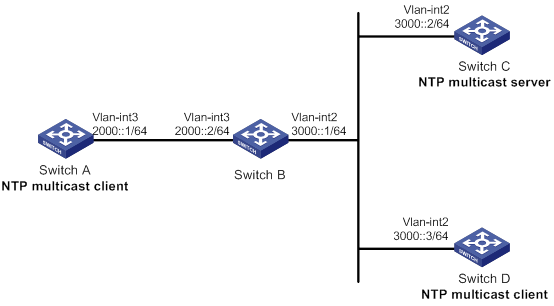

IPv6 NTP multicast mode configuration example

Configuration example for NTP client/server mode with authentication

Configuration example for NTP broadcast mode with authentication

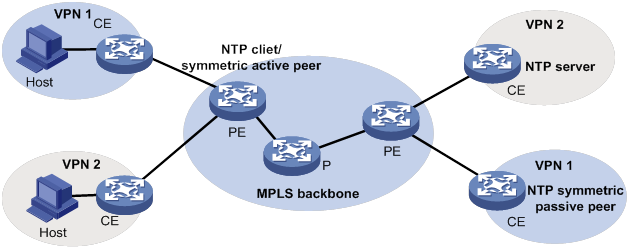

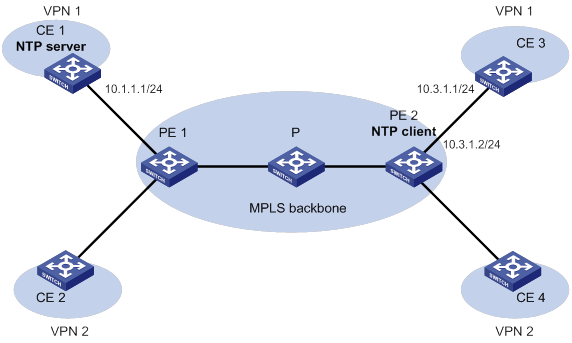

Configuration example for MPLS L3VPN network time synchronization in client/server mode

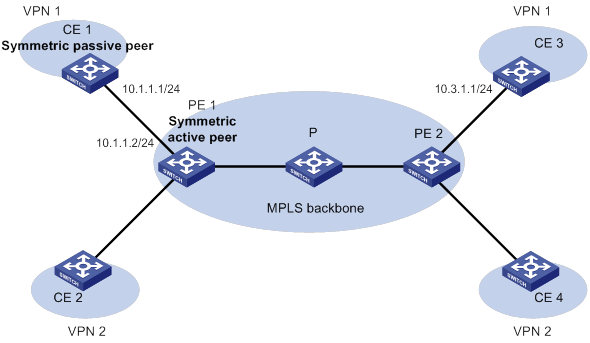

Configuration example for MPLS L3VPN network time synchronization in symmetric active/passive mode

Configuration restrictions and guidelines

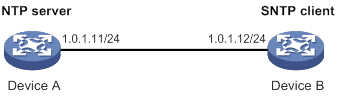

Specifying an NTP server for the device

Configuring SNTP authentication

Displaying and maintaining SNTP

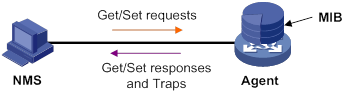

MIB and view-based MIB access control

Configuring SNMP basic parameters

Configuring SNMPv1 or SNMPv2c basic parameters

Configuring SNMPv3 basic parameters

Configuring SNMP notifications

Configuring the SNMP agent to send notifications to a host

SNMPv1/SNMPv2c configuration example

Sample types for the alarm group and the private alarm group

Configuring the RMON statistics function

Creating an RMON Ethernet statistics entry

Creating an RMON history control entry

Configuring the RMON alarm function

Displaying and maintaining RMON settings

Ethernet statistics group configuration example

History group configuration example

Alarm function configuration example

Event MIB configuration task list

Configuring Event MIB sampling

Configuring Event MIB object lists

Configuring a set action for an event

Configuring a notification action for an event

Configuring a Boolean trigger test

Configuring an existence trigger test

Configuring a threshold trigger test

Enabling SNMP notifications for Event MIB

Displaying and maintaining the Event MIB

Event MIB configuration examples

Existence trigger test configuration example

Boolean trigger test configuration example

Threshold trigger test configuration example

NETCONF configuration task list

Establishing a NETCONF session

Setting the NETCONF session idle timeout time

Subscribing to event notifications

Example for subscribing to event notifications

Locking/unlocking the configuration

Example for locking the configuration

Performing the <get>/<get-bulk> operation

Performing the <get-config>/<get-bulk-config> operation

Performing the <edit-config> operation

All-module configuration data retrieval example

Syslog configuration data retrieval example

Example for retrieving a data entry for the interface table

Example for changing the value of a parameter

Saving, rolling back, and loading the configuration

Rolling back the configuration based on a configuration file

Rolling back the configuration based on a rollback point

Example for saving the configuration

Example for filtering data with regular expression match

Example for filtering data by conditional match

Performing CLI operations through NETCONF

Retrieving NETCONF information

Retrieving NETCONF session information

Terminating another NETCONF session

Appendix A Supported NETCONF operations

Configuring a user-defined EAA environment variable

Configuration restrictions and guidelines

Configuring a monitor policy from the CLI

Configuring a monitor policy by using Tcl

Displaying and maintaining EAA settings

CLI event monitor policy configuration example

Track event monitor policy configuration example

CLI-defined policy with EAA environment variables configuration example

Tcl-defined policy configuration example

Displaying and maintaining a sampler

Sampler configuration example for IPv4 NetStream

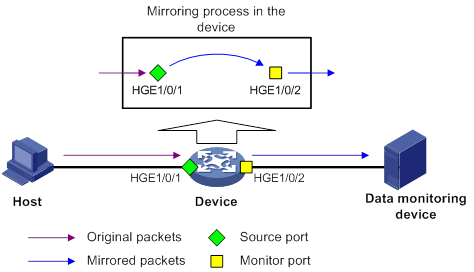

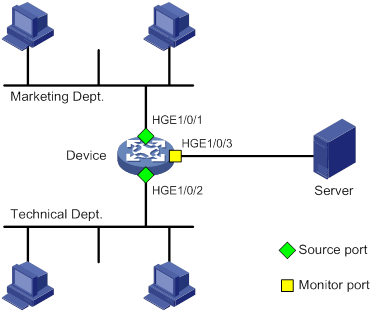

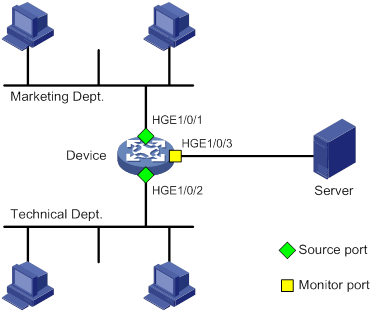

Port mirroring classification and implementation

Configuring local port mirroring

Local port mirroring configuration task list

Creating a local mirroring group

Configuring source ports for the local mirroring group

Configuring source CPUs for the local mirroring group

Configuring the monitor port for the local mirroring group

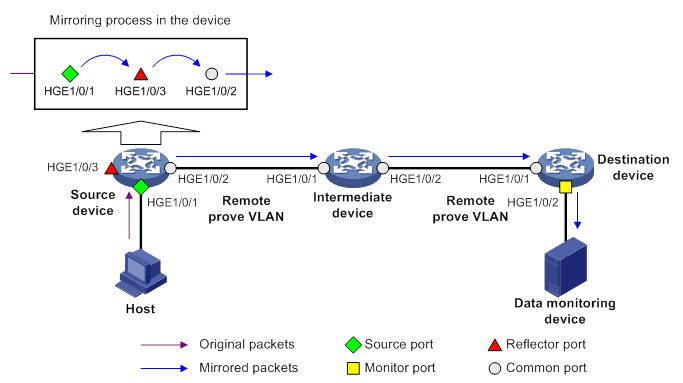

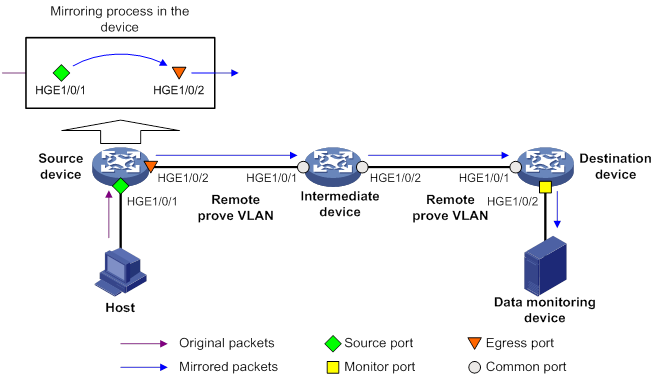

Configuring Layer 2 remote port mirroring

Layer 2 remote port mirroring with reflector port configuration task list

Layer 2 remote port mirroring with egress port configuration task list

Configuring a remote destination group on the destination device

Configuring a remote source group on the source device

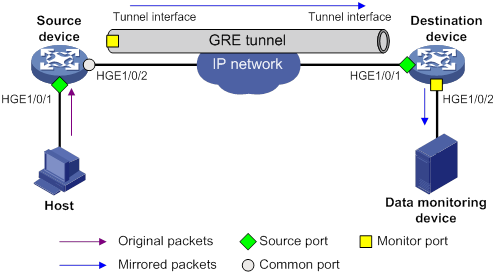

Configuring Layer 3 remote port mirroring

Layer 3 remote port mirroring configuration task list

Configuring local mirroring groups

Configuring source ports for a local mirroring group

Configuring source CPUs for a local mirroring group

Configuring the monitor port for a local mirroring group

Displaying and maintaining port mirroring

Port mirroring configuration examples

Local port mirroring configuration example (in source port mode)

Local port mirroring configuration example (in source CPU mode)

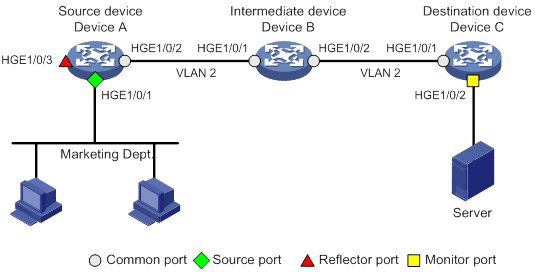

Layer 2 remote port mirroring configuration example (reflector port)

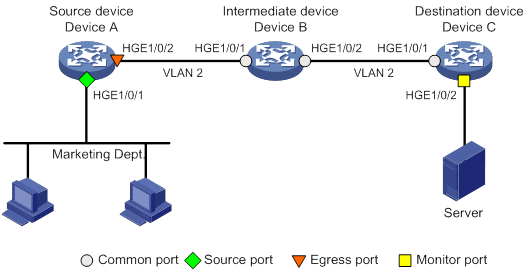

Layer 2 remote port mirroring configuration example (egress port)

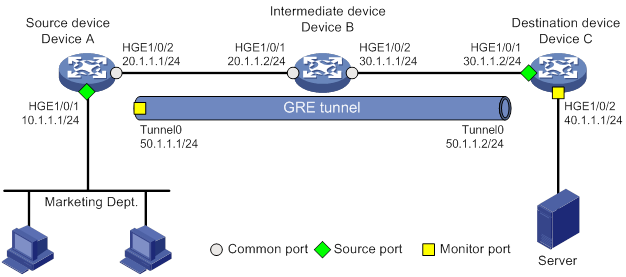

Layer 3 remote port mirroring configuration example

Flow mirroring configuration task list

Configuring a traffic behavior

Applying a QoS policy to an interface

Applying a QoS policy to a VLAN

Applying a QoS policy globally

Applying a QoS policy to the control plane

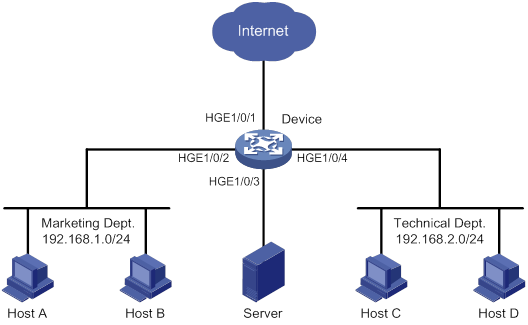

Flow mirroring configuration example

Feature and hardware compatibility

NetStream configuration task list

Configuring NetStream filtering

Configuring NetStream sampling

Configuring attributes of the NetStream data export

Configuring the NetStream data export format

Configuring the refresh rate for NetStream version 9 or version 10 template

Configuring MPLS-aware NetStream

Configuring VXLAN-aware NetStream

Configuring NetStream flow aging

Configuring the NetStream data export

Configuring the NetStream traditional data export

Configuring the NetStream aggregation data export

Displaying and maintaining NetStream

NetStream configuration examples

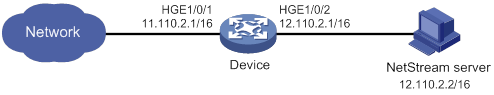

NetStream traditional data export configuration example

NetStream aggregation data export configuration example

Feature and hardware compatibility

IPv6 NetStream configuration task list

Configuring IPv6 NetStream filtering

Configuring IPv6 NetStream sampling

Configuring attributes of the IPv6 NetStream data export

Configuring the IPv6 NetStream data export format

Configuring the refresh rate for IPv6 NetStream version 9 or version 10 template

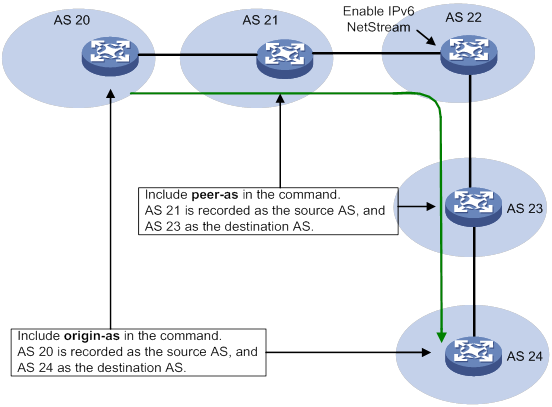

Configuring MPLS-aware IPv6 NetStream

Configuring IPv6 NetStream flow aging

Configuring the IPv6 NetStream data export

Configuring the IPv6 NetStream traditional data export

Configuring the IPv6 NetStream aggregation data export

Displaying and maintaining IPv6 NetStream

IPv6 NetStream configuration examples

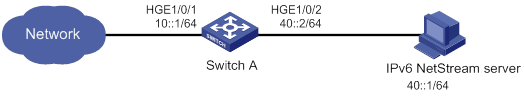

IPv6 NetStream traditional data export configuration example

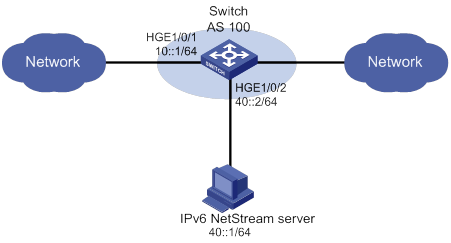

IPv6 NetStream aggregation data export configuration example

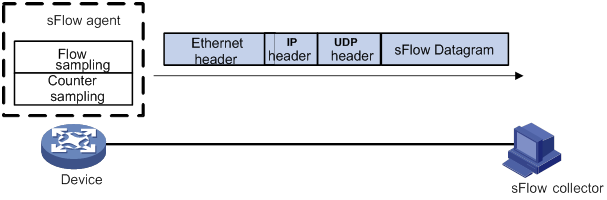

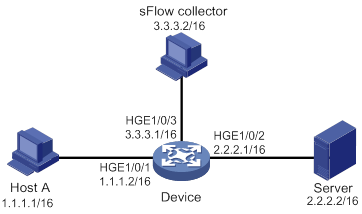

Configuring the sFlow agent and sFlow collector information

Displaying and maintaining sFlow

Troubleshooting sFlow configuration

The remote sFlow collector cannot receive sFlow packets

Configuring the information center

Default output rules for diagnostic logs

Default output rules for security logs

Default output rules for hidden logs

Default output rules for trace logs

Information center configuration task list

Outputting logs to the console

Outputting logs to the monitor terminal

Outputting logs to the log buffer

Saving security logs to the security log file

Managing the security log file

Saving diagnostic logs to the diagnostic log file

Configuring the maximum size of the trace log file

Setting the minimum storage period for log files and logs in the log buffer

Enabling synchronous information output

Enabling duplicate log suppression

Configuring log suppression for a module

Disabling an interface from generating link up or link down logs

Enabling SNMP notifications for system logs

Displaying and maintaining information center

Information center configuration examples

Configuration example for outputting logs to the console

Configuration example for outputting logs to a UNIX log host

Configuration example for outputting logs to a Linux log host

Configuring monitoring diagnostics

Configuring on-demand diagnostics

Starting a device startup check by using on-demand diagnostics

Starting on-demand diagnostics during device operation

Configuring the log buffer size

Displaying and maintaining GOLD

GOLD configuration example (in standalone mode)

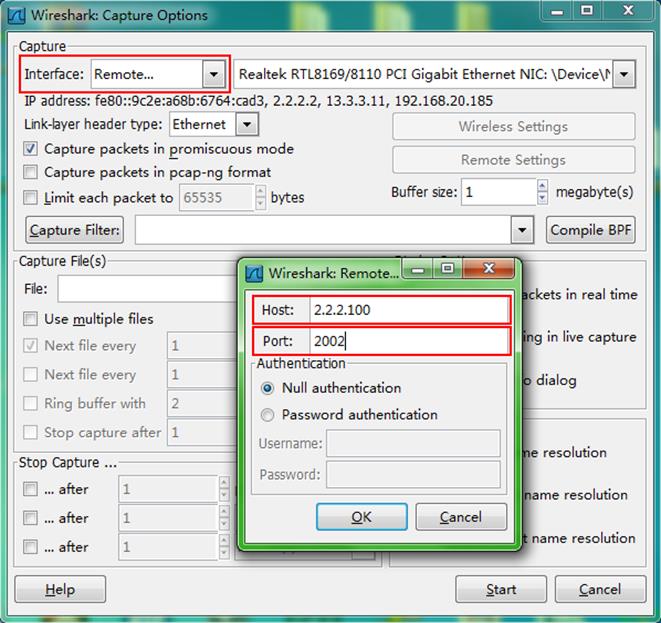

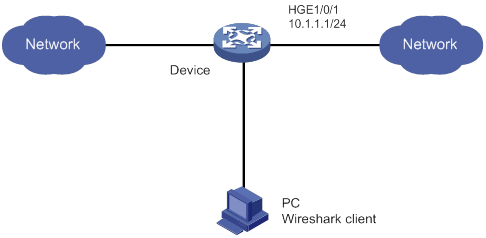

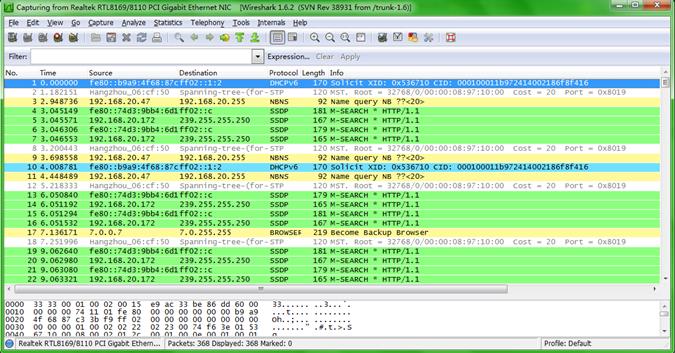

Configuring the packet capture

Packet capture configuration task list

Configuring local packet capture

Configuring remote packet capture

Configuring feature image-based packet capture

Saving captured packets to a file

Filtering packet data to display

Displaying the contents in a packet file

Displaying and maintaining packet capture

Packet capture configuration examples

Remote packet capture configuration example

Filtering packet data to display configuration example

Saving captured packets to a file configuration example

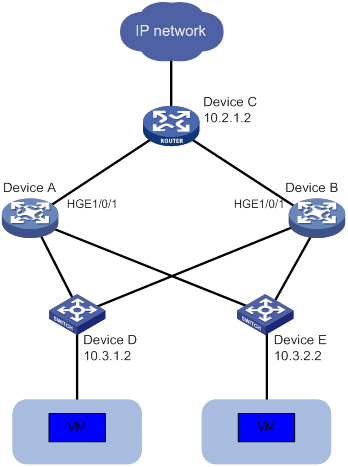

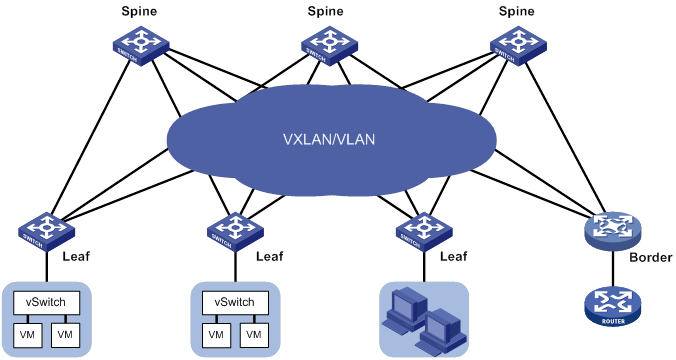

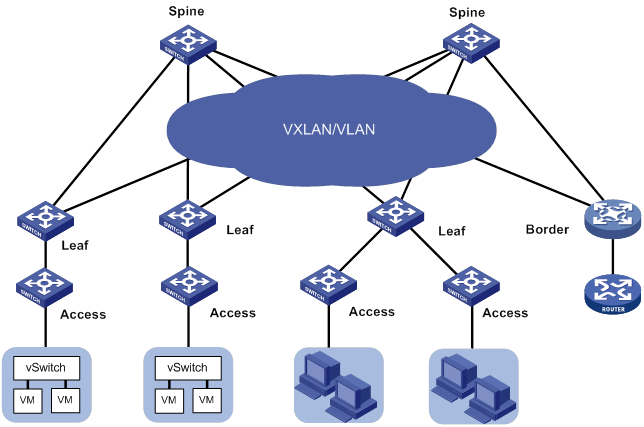

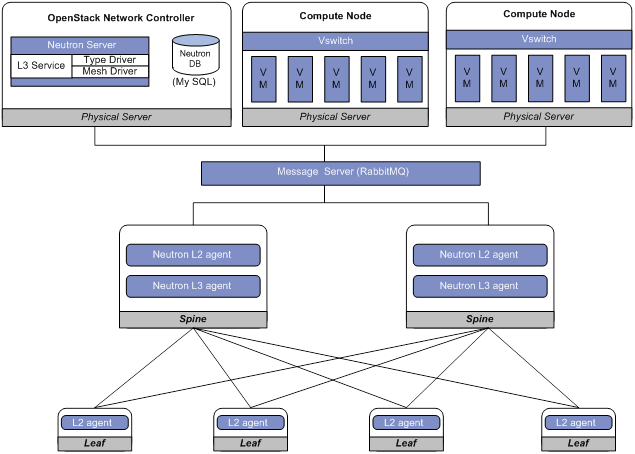

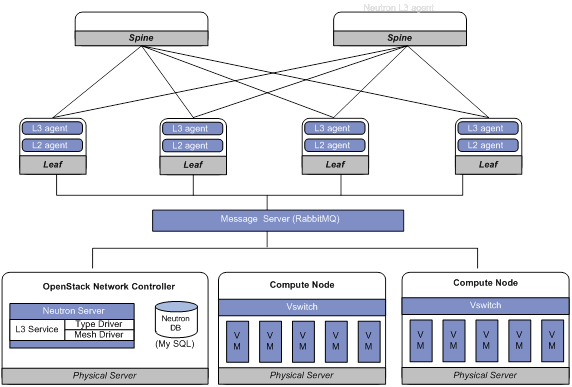

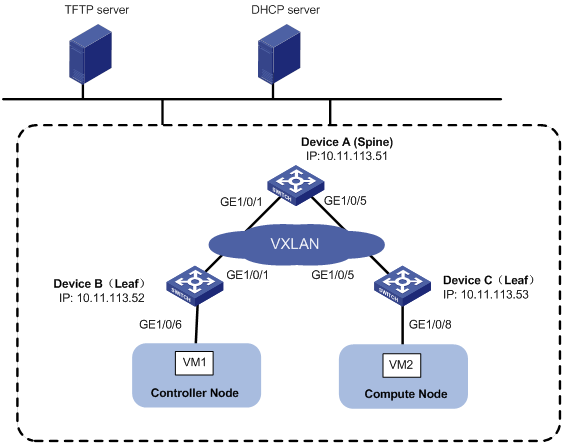

Neutron concepts and components

Automated VCF fabric provisioning and deployment

Automated underlay network provisioning

Automated overlay network deployment

Configuration restrictions and guidelines

VCF fabric configuration task list

Enabling VCF fabric topology discovery

Configuration restrictions and guidelines

Configuring automated underlay network provisioning

Configuration restrictions and guidelines

Configuring automated overlay network deployment

Configuration restrictions and guidelines

Displaying and maintaining VCF fabric

Automated VCF fabric configuration example

Using ping, tracert, and system debugging

This chapter covers ping, tracert, and information about debugging the system.

Ping

Use the ping utility to determine if an address is reachable.

Ping sends ICMP echo requests (ECHO-REQUEST) to the destination device. Upon receiving the requests, the destination device responds with ICMP echo replies (ECHO-REPLY) to the source device. The source device outputs statistics about the ping operation, including the number of packets sent, number of echo replies received, and the round-trip time. You can measure the network performance by analyzing these statistics.

Using a ping command to test network connectivity

Execute ping commands in any view.

| Task | Command |
| --- | --- |
| Determine if an address in an IP network is reachable. | · For IPv4 networks: · For IPv6 networks: |

When you configure the ping command for a low-speed network, set a larger value for the timeout timer (indicated by the -t keyword in the command).

Ping example

Network requirements

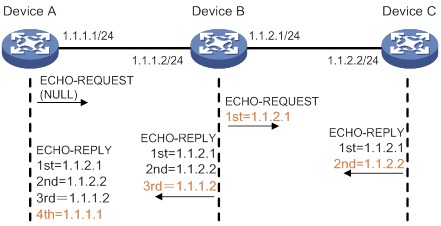

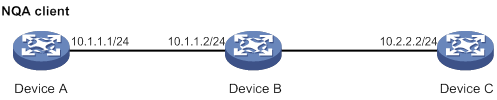

As shown in Figure 1, determine if Device A and Device C can reach each other. If they can reach each other, get detailed information about routes from Device A to Device C.

Configuration procedure

# Use the ping command on Device A to test connectivity to Device C.

<DeviceA> ping 1.1.2.2

Ping 1.1.2.2 (1.1.2.2): 56 data bytes, press CTRL_C to break

56 bytes from 1.1.2.2: icmp_seq=0 ttl=254 time=2.137 ms

56 bytes from 1.1.2.2: icmp_seq=1 ttl=254 time=2.051 ms

56 bytes from 1.1.2.2: icmp_seq=2 ttl=254 time=1.996 ms

56 bytes from 1.1.2.2: icmp_seq=3 ttl=254 time=1.963 ms

56 bytes from 1.1.2.2: icmp_seq=4 ttl=254 time=1.991 ms

--- Ping statistics for 1.1.2.2 ---

5 packet(s) transmitted, 5 packet(s) received, 0.0% packet loss

round-trip min/avg/max/std-dev = 1.963/2.028/2.137/0.062 ms

The output shows the following information:

· Device A sent five ICMP echo requests to Device C and received five ICMP echo replies.

· No ICMP packet is lost.

· The route is reachable.
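The summary lines of the ping output can be reproduced from the individual round-trip times. The following is a minimal Python sketch, not the device's implementation. The function name `ping_summary` is hypothetical, and the use of the population standard deviation is an assumption chosen because it matches the std-dev value in the sample output above.

```python
import statistics


def ping_summary(sent, rtts_ms):
    """Summarize a ping run from the number of requests sent and the reply RTTs (ms)."""
    received = len(rtts_ms)
    summary = {
        "sent": sent,
        "received": received,
        "loss_pct": round(100.0 * (sent - received) / sent, 1),
    }
    if rtts_ms:
        summary["min"] = round(min(rtts_ms), 3)
        summary["avg"] = round(statistics.fmean(rtts_ms), 3)
        summary["max"] = round(max(rtts_ms), 3)
        # Assumption: population standard deviation, which matches the sample output.
        summary["std_dev"] = round(statistics.pstdev(rtts_ms), 3)
    return summary


# Round-trip times taken from the first ping output in this example.
rtts = [2.137, 2.051, 1.996, 1.963, 1.991]
print(ping_summary(5, rtts))
```

With the five round-trip times from the example, the sketch yields the same min/avg/max/std-dev figures as the device output (1.963/2.028/2.137/0.062 ms).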

# Get detailed information about routes from Device A to Device C.

<DeviceA> ping -r 1.1.2.2

Ping 1.1.2.2 (1.1.2.2): 56 data bytes, press CTRL_C to break

56 bytes from 1.1.2.2: icmp_seq=0 ttl=254 time=4.685 ms

RR: 1.1.2.1

1.1.2.2

1.1.1.2

1.1.1.1

56 bytes from 1.1.2.2: icmp_seq=1 ttl=254 time=4.834 ms (same route)

56 bytes from 1.1.2.2: icmp_seq=2 ttl=254 time=4.770 ms (same route)

56 bytes from 1.1.2.2: icmp_seq=3 ttl=254 time=4.812 ms (same route)

56 bytes from 1.1.2.2: icmp_seq=4 ttl=254 time=4.704 ms (same route)

--- Ping statistics for 1.1.2.2 ---

5 packet(s) transmitted, 5 packet(s) received, 0.0% packet loss

round-trip min/avg/max/std-dev = 4.685/4.761/4.834/0.058 ms

The ping -r test proceeds as follows (see Figure 1):

1. The source device (Device A) sends an ICMP echo request to the destination device (Device C) with the RR option blank.

2. The intermediate device (Device B) adds the IP address of its outbound interface (1.1.2.1) to the RR option of the ICMP echo request, and forwards the packet.

3. Upon receiving the request, the destination device copies the RR option in the request and adds the IP address of its outbound interface (1.1.2.2) to the RR option. Then the destination device sends an ICMP echo reply.

4. The intermediate device adds the IP address of its outbound interface (1.1.1.2) to the RR option in the ICMP echo reply, and then forwards the reply.

5. Upon receiving the reply, the source device adds the IP address of its inbound interface (1.1.1.1) to the RR option. The detailed information of routes from Device A to Device C is formatted as: 1.1.1.1 <-> {1.1.1.2; 1.1.2.1} <-> 1.1.2.2.
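The five steps above can be traced with a short Python sketch. It is an illustration only: the helper names `record` and `ping_record_route` are hypothetical, and the addresses are the ones used in this example. The nine-slot limit reflects the space available for a Record Route option in an IPv4 header.

```python
MAX_RR_SLOTS = 9  # an IPv4 Record Route option has room for at most nine addresses


def record(rr, address):
    """Append an address to the RR list if a free slot remains."""
    if len(rr) < MAX_RR_SLOTS:
        rr.append(address)
    return rr


def ping_record_route():
    rr = []                      # 1. Device A sends the echo request with an empty RR option
    record(rr, "1.1.2.1")        # 2. Device B records its outbound interface and forwards
    reply_rr = list(rr)          # 3. Device C copies the RR option into the echo reply ...
    record(reply_rr, "1.1.2.2")  #    ... and records its own outbound interface
    record(reply_rr, "1.1.1.2")  # 4. Device B records its outbound interface toward Device A
    record(reply_rr, "1.1.1.1")  # 5. Device A records its inbound interface
    return reply_rr


print(ping_record_route())
```

The resulting list is in the same order as the RR lines in the ping -r output.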

Tracert

Tracert (also called Traceroute) retrieves the IP addresses of the Layer 3 devices on the path to a destination. In the event of network failure, use tracert to test network connectivity and identify failed nodes.

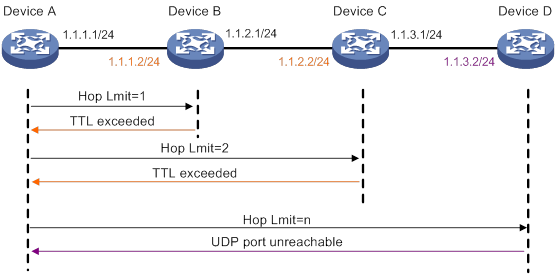

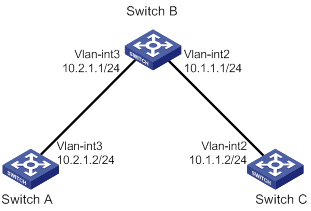

Figure 2 Tracert operation

Tracert uses received ICMP error messages to get the IP addresses of devices. Tracert works as shown in Figure 2:

1. The source device sends a UDP packet with a TTL value of 1 to the destination device. The destination UDP port is not used by any application on the destination device.

2. The first hop (Device B, the first Layer 3 device that receives the packet) responds by sending a TTL-expired ICMP error message to the source, with its IP address (1.1.1.2) encapsulated. This way, the source device can get the address of the first Layer 3 device (1.1.1.2).

3. The source device sends a packet with a TTL value of 2 to the destination device.

4. The second hop (Device C) responds with a TTL-expired ICMP error message, which gives the source device the address of the second Layer 3 device (1.1.2.2).

5. This process continues until a packet sent by the source device reaches the ultimate destination device. Because no application uses the destination port specified in the packet, the destination device responds with a port-unreachable ICMP message to the source device, with its IP address encapsulated. This way, the source device gets the IP address of the destination device (1.1.3.2).

6. After receiving the port-unreachable ICMP message, the source device determines that:

· The packet has reached the destination device.

· The path to the destination device is 1.1.1.2 to 1.1.2.2 to 1.1.3.2.
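The TTL-based discovery described above can be sketched as a small simulation. This Python example does not send any packets. The function name `simulate_tracert` and the `path` list are hypothetical, and the limit of 30 hops is taken from the tracert output shown later in this chapter.

```python
def simulate_tracert(path, max_hops=30):
    """Simulate tracert over a list of Layer 3 addresses, the last being the destination.

    Returns (ttl, responder, icmp_message) tuples in the order the source learns them.
    """
    hops = []
    for ttl in range(1, max_hops + 1):
        if ttl > len(path):
            break  # nothing left on the simulated path to answer
        # Each Layer 3 device decrements the TTL, so a probe sent with this TTL
        # is handled by the device at position ttl along the path.
        responder = path[ttl - 1]
        if ttl < len(path):
            message = "ttl-expired"       # an intermediate device drops the probe
        else:
            message = "port-unreachable"  # the destination has no listener on the port
        hops.append((ttl, responder, message))
        if message == "port-unreachable":
            break  # the destination has been reached
    return hops


for hop in simulate_tracert(["1.1.1.2", "1.1.2.2", "1.1.3.2"]):
    print(hop)
```

The loop stops at the first port-unreachable message, which is how the source recognizes the destination.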

Prerequisites

Before you use a tracert command, perform the tasks in this section.

For an IPv4 network:

· Enable sending of ICMP timeout packets on the intermediate devices (devices between the source and destination devices). If the intermediate devices are H3C devices, execute the ip ttl-expires enable command on the devices. For more information about this command, see Layer 3—IP Services Command Reference.

· Enable sending of ICMP destination unreachable packets on the destination device. If the destination device is an H3C device, execute the ip unreachables enable command. For more information about this command, see Layer 3—IP Services Command Reference.

For an IPv6 network:

· Enable sending of ICMPv6 timeout packets on the intermediate devices (devices between the source and destination devices). If the intermediate devices are H3C devices, execute the ipv6 hoplimit-expires enable command on the devices. For more information about this command, see Layer 3—IP Services Command Reference.

· Enable sending of ICMPv6 destination unreachable packets on the destination device. If the destination device is an H3C device, execute the ipv6 unreachables enable command. For more information about this command, see Layer 3—IP Services Command Reference.

Using a tracert command to identify failed or all nodes in a path

Execute tracert commands in any view.

| Task | Command |
| --- | --- |
| Display the routes from source to destination. | · For IPv4 networks: · For IPv6 networks: |

Tracert example

Network requirements

As shown in Figure 3, Device A failed to Telnet to Device C.

Test the network connectivity between Device A and Device C. If they cannot reach each other, locate the failed nodes in the network.

Configuration procedure

1. Configure the IP addresses for devices as shown in Figure 3.

2. Configure a static route on Device A.

<DeviceA> system-view

[DeviceA] ip route-static 0.0.0.0 0.0.0.0 1.1.1.2

[DeviceA] quit

3. Use the ping command to test connectivity between Device A and Device C.

<DeviceA> ping 1.1.2.2

Ping 1.1.2.2 (1.1.2.2): 56 data bytes, press CTRL_C to break

Request time out

Request time out

Request time out

Request time out

Request time out

--- Ping statistics for 1.1.2.2 ---

5 packet(s) transmitted, 0 packet(s) received, 100.0% packet loss

The output shows that Device A and Device C cannot reach each other.

4. Use the tracert command to identify failed nodes:

# Enable sending of ICMP timeout packets on Device B.

<DeviceB> system-view

[DeviceB] ip ttl-expires enable

# Enable sending of ICMP destination unreachable packets on Device C.

<DeviceC> system-view

[DeviceC] ip unreachables enable

# Execute the tracert command on Device A.

<DeviceA> tracert 1.1.2.2

traceroute to 1.1.2.2 (1.1.2.2) 30 hops at most, 40 bytes each packet, press CTRL_C to break

1 1.1.1.2 (1.1.1.2) 1 ms 2 ms 1 ms

2 * * *

3 * * *

4 * * *

5

<DeviceA>

The output shows that Device A can reach Device B but cannot reach Device C. An error has occurred on the connection between Device B and Device C.

5. To identify the cause of the problem, execute the following commands on Device A and Device C:

· Execute the debugging ip icmp command and verify that Device A and Device C can send and receive the correct ICMP packets.

· Execute the display ip routing-table command and verify that Device A and Device C have a route to each other.

System debugging

The device supports debugging for the majority of protocols and features, and provides debugging information to help users diagnose errors.

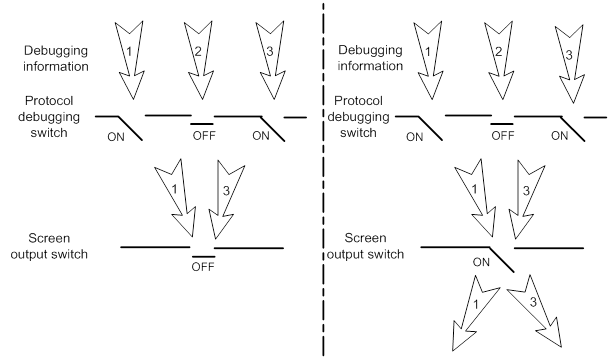

Debugging information control switches

The following switches control the display of debugging information:

· Module debugging switch—Controls whether to generate the module-specific debugging information.

· Screen output switch—Controls whether to display the debugging information on a certain screen. Use terminal monitor and terminal logging level commands to turn on the screen output switch. For more information about these two commands, see Network Management and Monitoring Command Reference.

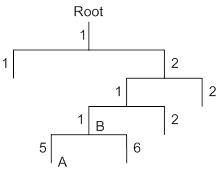

As shown in Figure 4, the device can provide debugging for the three modules 1, 2, and 3. The debugging information can be output on a terminal only when both the module debugging switch and the screen output switch are turned on.

Debugging information is typically displayed on a console. You can also send debugging information to other destinations. For more information, see "Configuring the information center."

Figure 4 Relationship between the module and screen output switch
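The relationship between the two switches amounts to a logical AND. The following Python sketch illustrates that rule only. The function name `visible_debug_output` and the switch states are hypothetical and are not taken from Figure 4.

```python
def visible_debug_output(module_switches, screen_output_on):
    """Return the modules whose debugging information reaches the terminal.

    A module's output is displayed only if its module debugging switch AND
    the screen output switch are both on.
    """
    if not screen_output_on:
        return []
    return [name for name, enabled in module_switches.items() if enabled]


# Hypothetical switch states for the three modules.
switches = {"module 1": True, "module 2": False, "module 3": True}
print(visible_debug_output(switches, screen_output_on=True))
print(visible_debug_output(switches, screen_output_on=False))
```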

Debugging a feature module

Output of debugging commands is memory intensive. To guarantee system performance, enable debugging only for modules that are in an exceptional condition. When debugging is complete, use the undo debugging all command to disable all the debugging functions.

To debug a feature module:

| Step | Command | Remarks |
| --- | --- | --- |
| 1. Enable debugging for a module in user view. | debugging module-name [ option ] | By default, all debugging functions are disabled. |
| 2. (Optional.) Display the enabled debugging in any view. | display debugging [ module-name ] | N/A |

Configuring NQA

Overview

Network quality analyzer (NQA) allows you to measure network performance, verify the service levels for IP services and applications, and troubleshoot network problems. It provides the following types of operations:

· ICMP echo.

· ICMP jitter.

· DHCP.

· DLSw.

· DNS.

· FTP.

· HTTP.

· Path jitter.

· SNMP.

· TCP.

· UDP echo.

· UDP jitter.

· UDP tracert.

· Voice.

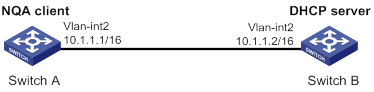

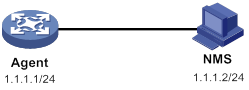

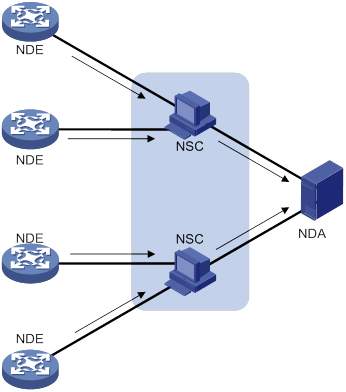

As shown in Figure 5, the NQA source device (NQA client) sends data to the NQA destination device by simulating IP services and applications to measure network performance. The obtained performance metrics include the one-way latency, jitter, packet loss, voice quality, application performance, and server response time.

All types of NQA operations require the NQA client, but only the TCP, UDP echo, UDP jitter, and voice operations require the NQA server. The NQA operations for services that are already provided by the destination device such as FTP do not need the NQA server.

You can configure the NQA server to listen and respond to specific IP addresses and ports to meet various test needs.

NQA operation

The following describes how NQA performs different types of operations:

· A TCP or DLSw operation sets up a connection.

· An ICMP jitter, UDP jitter, or voice operation sends a number of probe packets. The number of probe packets is set by using the probe packet-number command.

· An FTP operation uploads or downloads a file.

· An HTTP operation gets a Web page.

· A DHCP operation gets an IP address through DHCP.

· A DNS operation translates a domain name to an IP address.

· An ICMP echo operation sends an ICMP echo request.

· A UDP echo operation sends a UDP packet.

· An SNMP operation sends one SNMPv1 packet, one SNMPv2c packet, and one SNMPv3 packet.

· A path jitter operation is accomplished in the following steps:

a. The operation uses tracert to obtain the path from the NQA client to the destination. A maximum of 64 hops can be detected.

b. The NQA client sends ICMP echo requests to each hop along the path. The number of ICMP echo requests is set by using the probe packet-number command.

· A UDP tracert operation determines the routing path from the source to the destination. The number of the probe packets sent to each hop is set by using the probe count command.

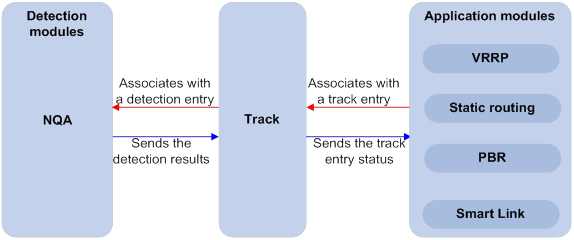

Collaboration

NQA can collaborate with the Track module to notify application modules of state or performance changes so that the application modules can take predefined actions.

The following describes how a static route destined for 192.168.0.88 is monitored through collaboration:

1. NQA monitors the reachability to 192.168.0.88.

2. When 192.168.0.88 becomes unreachable, NQA notifies the Track module of the change.

3. The Track module notifies the static routing module of the state change.

4. The static routing module sets the static route to invalid according to a predefined action.
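The notification chain in the steps above can be modeled as a simple publish/subscribe relationship. This Python sketch is an illustration under stated assumptions, not the device's implementation. The class names `NqaOperation`, `TrackEntry`, and `StaticRoute` are hypothetical, and restoring the route when the target becomes reachable again is an assumption that the steps above do not state.

```python
class StaticRoute:
    """Application module: a static route whose validity follows the Track state."""

    def __init__(self, destination):
        self.destination = destination
        self.valid = True

    def on_track_change(self, reachable):
        # Predefined action: invalidate the route when the target is unreachable.
        # Assumption: the route becomes valid again when the target recovers.
        self.valid = reachable


class TrackEntry:
    """Track module: relays state changes to the attached application modules."""

    def __init__(self):
        self.modules = []

    def attach(self, module):
        self.modules.append(module)

    def notify(self, reachable):
        for module in self.modules:
            module.on_track_change(reachable)


class NqaOperation:
    """NQA: monitors reachability and reports changes to the Track module."""

    def __init__(self, target, track):
        self.target = target
        self.track = track
        self.reachable = True

    def probe_result(self, reachable):
        if reachable != self.reachable:  # notify only on a state change
            self.reachable = reachable
            self.track.notify(reachable)


route = StaticRoute("192.168.0.88")
track = TrackEntry()
track.attach(route)
nqa = NqaOperation("192.168.0.88", track)

nqa.probe_result(False)  # 192.168.0.88 becomes unreachable
print(route.valid)
```

Keeping the Track entry between NQA and the routing module means the detection side and the application side do not need to know about each other.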

For more information about collaboration, see High Availability Configuration Guide.

Threshold monitoring

Threshold monitoring enables the NQA client to take a predefined action when the NQA operation performance metrics violate the specified thresholds.

Table 1 describes the relationships between performance metrics and NQA operation types.

Table 1 Performance metrics and NQA operation types

| Performance metric | NQA operation types that can gather the metric |
| --- | --- |
| Probe duration | All NQA operation types except UDP jitter, UDP tracert, path jitter, and voice |
| Number of probe failures | All NQA operation types except UDP jitter, UDP tracert, path jitter, and voice |
| Round-trip time | ICMP jitter, UDP jitter, and voice |
| Number of discarded packets | ICMP jitter, UDP jitter, and voice |
| One-way jitter (source-to-destination or destination-to-source) | ICMP jitter, UDP jitter, and voice |
| One-way delay (source-to-destination or destination-to-source) | ICMP jitter, UDP jitter, and voice |
| Calculated Planning Impairment Factor (ICPIF) (see "Configuring the voice operation") | Voice |
| Mean Opinion Scores (MOS) (see "Configuring the voice operation") | Voice |

NQA configuration task list

| Tasks at a glance | Remarks |
| --- | --- |
| Configuring the NQA server | Required for TCP, UDP echo, UDP jitter, and voice operations. |
| (Required.) Enabling the NQA client | N/A |
| (Required.) Perform at least one of the following tasks: · Configuring NQA operations on the NQA client · Configuring NQA templates on the NQA client | When you configure an NQA template to analyze network performance, the feature that uses the template performs the NQA operation. |

Configuring the NQA server

To perform TCP, UDP echo, UDP jitter, and voice operations, you must enable the NQA server on the destination device. The NQA server listens and responds to requests on the specified IP addresses and ports.

You can configure multiple TCP or UDP listening services on an NQA server, where each corresponds to a specific IP address and port number. The IP address and port number for a listening service must be unique on the NQA server and match the configuration on the NQA client.

To configure the NQA server:

| Step | Command | Remarks |
| --- | --- | --- |
| 1. Enter system view. | system-view | N/A |
| 2. Enable the NQA server. | nqa server enable | By default, the NQA server is disabled. |
| 3. Configure a TCP or UDP listening service. | · TCP listening service: · UDP listening service: | The default ToS value is 0. You can set the ToS value in the IP header of reply packets sent by the NQA server. |

Enabling the NQA client

| Step | Command | Remarks |
| --- | --- | --- |
| 1. Enter system view. | system-view | N/A |
| 2. Enable the NQA client. | nqa agent enable | By default, the NQA client is enabled. The NQA client configuration takes effect after you enable the NQA client. |

Configuring NQA operations on the NQA client

NQA operation configuration task list

| Tasks at a glance |
| --- |
| (Required.) Perform at least one of the following tasks: · Configuring the ICMP echo operation · Configuring the ICMP jitter operation · Configuring the DHCP operation · Configuring the DNS operation · Configuring the FTP operation · Configuring the HTTP operation · Configuring the UDP jitter operation · Configuring the SNMP operation · Configuring the TCP operation · Configuring the UDP echo operation · Configuring the UDP tracert operation · Configuring the voice operation |
| (Optional.) Configuring optional parameters for the NQA operation |
| (Optional.) Configuring the collaboration feature |
| (Optional.) Configuring threshold monitoring |
| (Optional.) Configuring the NQA statistics collection feature |
| (Optional.) Configuring the saving of NQA history records |
| (Required.) Scheduling the NQA operation on the NQA client |

Configuring the ICMP echo operation

The ICMP echo operation measures the reachability of a destination device. It has the same function as the ping command, but provides more output information. In addition, if multiple paths exist between the source and destination devices, you can specify the next hop for the ICMP echo operation.

To configure the ICMP echo operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify the ICMP echo type and enter its view. |

type icmp-echo |

N/A |

|

4. Specify the destination IP address for ICMP echo requests. |

·

IPv4 address: ·

IPv6 address: |

By default, no destination IP address is specified. |

|

5. (Optional.) Set the payload size for each ICMP echo request. |

data-size size |

The default setting is 100 bytes. |

|

6. (Optional.) Specify the payload fill string for ICMP echo requests. |

data-fill string |

The default payload fill string is hexadecimal string 00010203040506070809. |

|

7. (Optional.) Specify the output interface for ICMP echo requests. |

out interface interface-type interface-number |

By default, the output interface for ICMP echo requests is not specified. The NQA client determines the output interface based on the routing table lookup. |

|

8. (Optional.) Specify the source IP address for ICMP echo requests. |

· Use the IP address of the specified interface as the source IP address:
· Specify the source IPv4 address:
· Specify the source IPv6 address: |

By default, the requests take the primary IP address of the output interface as their source IP address. If you execute the source interface, source ip, and source ipv6 commands multiple times, the most recent configuration takes effect. The specified source interface must be up. The specified source IP address must be the IP address of a local interface, and the interface must be up. Otherwise, no probe packets can be sent out. |

|

9. (Optional.) Specify the next hop IP address for ICMP echo requests. |

· IPv4 address:
· IPv6 address: |

By default, no next hop IP address is configured. |
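
The ICMP echo steps can be sketched as the following command sequence. This is a minimal illustration, not a verified configuration. The administrator name admin, the operation tag icmp1, the addresses, and the payload size are hypothetical values. The destination ip form is an assumption borrowed from the other operation types in this chapter. Lines that start with # are explanatory annotations, not commands.

```text
# Enter system view, create the operation, and select the ICMP echo type.
system-view
nqa entry admin icmp1
type icmp-echo
# Assumed IPv4 form of the destination command.
destination ip 192.0.2.10
# Optional: use a 64-byte payload instead of the default 100 bytes.
data-size 64
# Optional: the source address must belong to a local interface that is up.
source ip 192.0.2.1
```

The operation does not run until it is scheduled, as described in "Scheduling the NQA operation on the NQA client."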

Configuring the ICMP jitter operation

The ICMP jitter operation measures unidirectional and bidirectional jitter. The result helps you determine whether the network can carry jitter-sensitive services such as real-time voice and video.

The ICMP jitter operation works as follows:

1. The NQA client sends ICMP packets to the destination device.

2. The destination device time stamps each packet it receives, and then sends the packet back to the NQA client.

3. Upon receiving the responses, the NQA client calculates the jitter according to the timestamps.

Before starting the operation, make sure the network devices are time synchronized by using NTP. For more information about NTP, see "Configuring NTP."

To configure the ICMP jitter operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify the ICMP jitter type and enter its view. |

type icmp-jitter |

N/A |

|

4. Specify the destination address of ICMP packets. |

destination ip ip-address |

By default, no destination IP address is specified. |

|

5. (Optional.) Set the number of ICMP packets sent in one ICMP jitter operation. |

probe packet-number packet-number |

The default setting is 10. |

|

6. (Optional.) Set the interval for sending ICMP packets. |

probe packet-interval interval |

The default setting is 20 milliseconds. |

|

7. (Optional.) Specify how long the NQA client waits for a response from the server before it considers the response timed out. |

probe packet-timeout timeout |

The default setting is 3000 milliseconds. |

|

8. (Optional.) Specify the source IP address for ICMP packets. |

source ip ip-address |

By default, the packets take the primary IP address of the output interface as their source IP address. The source IP address must be the IP address of a local interface, and the interface must be up. Otherwise, no ICMP packets can be sent out. |

|

|

NOTE: Use the display nqa result or display nqa statistics command to verify the ICMP jitter operation. The display nqa history command does not display the ICMP jitter operation results or statistics. |
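
As a minimal sketch of the ICMP jitter steps, the following sequence overrides the three probe defaults. The administrator name, operation tag, destination address, and all numeric values are hypothetical and chosen only for illustration. Lines that start with # are explanatory annotations, not commands.

```text
system-view
nqa entry admin icmpj1
type icmp-jitter
destination ip 192.0.2.10
# Optional: send 20 packets per operation instead of the default 10.
probe packet-number 20
# Optional: send one packet every 50 milliseconds instead of every 20.
probe packet-interval 50
# Optional: wait up to 2000 milliseconds for each response instead of 3000.
probe packet-timeout 2000
```

With these values, sending all packets of one operation takes roughly one second (20 packets at 50-millisecond intervals), not counting the wait for late responses.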

Configuring the DHCP operation

The DHCP operation tests whether the DHCP server can respond to client requests. It also measures the time the NQA client takes to obtain an IP address from the DHCP server.

The NQA client simulates the DHCP relay agent to forward DHCP requests for IP address acquisition from the DHCP server. The interface that performs the DHCP operation does not change its IP address. When the DHCP operation completes, the NQA client sends a packet to release the obtained IP address.

To configure the DHCP operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify the DHCP type and enter its view. |

type dhcp |

N/A |

|

4. Specify the IP address of the DHCP server as the destination IP address of DHCP packets. |

destination ip ip-address |

By default, no destination IP address is specified. |

|

5. (Optional.) Specify an output interface for DHCP request packets. |

out interface interface-type interface-number |

By default, the output interface for DHCP request packets is not specified. The NQA client determines the output interface based on the routing table lookup. |

|

6. (Optional.) Specify the source IP address of DHCP request packets. |

source ip ip-address |

By default, the packets take the primary IP address of the output interface as their source IP address. The specified source IP address must be the IP address of a local interface, and the local interface must be up. Otherwise, no probe packets can be sent out. The NQA client adds the source IP address to the giaddr field in DHCP requests to be sent to the DHCP server. For more information about the giaddr field, see Layer 3—IP Services Configuration Guide. |
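
The DHCP steps might look like the following sketch. The administrator name, operation tag, and both addresses are hypothetical. Lines that start with # are explanatory annotations, not commands.

```text
system-view
nqa entry admin dhcp1
type dhcp
# Address of the DHCP server to test.
destination ip 192.0.2.53
# Optional: this address must belong to a local interface that is up.
# The NQA client also places it in the giaddr field of the requests.
source ip 192.0.2.1
```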

Configuring the DNS operation

The DNS operation measures the time for the NQA client to translate a domain name into an IP address through a DNS server.

A DNS operation simulates domain name resolution and does not save the obtained DNS entry.

To configure the DNS operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify the DNS type and enter its view. |

type dns |

N/A |

|

4. Specify the IP address of the DNS server as the destination address of DNS packets. |

destination ip ip-address |

By default, no destination IP address is specified. |

|

5. Specify the domain name to be translated. |

resolve-target domain-name |

By default, no domain name is specified. |
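
A minimal sketch of the DNS steps follows. The administrator name, operation tag, DNS server address, and domain name are hypothetical. Lines that start with # are explanatory annotations, not commands.

```text
system-view
nqa entry admin dns1
type dns
# Address of the DNS server that resolves the name.
destination ip 192.0.2.53
# Domain name to be translated. The result is not saved as a DNS entry.
resolve-target host.example.com
```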

Configuring the FTP operation

The FTP operation measures the time for the NQA client to transfer a file to or download a file from an FTP server.

When you configure the FTP operation, follow these restrictions and guidelines:

· When you perform the put operation with the filename command configured, make sure the file exists on the NQA client.

· If you get a file from the FTP server, make sure the file specified in the URL exists on the FTP server.

· The NQA client does not save the file obtained from the FTP server.

· Use a small file for the FTP operation. A large file might cause the transfer to fail because of a timeout, or might affect other services by occupying too much network bandwidth.

To configure the FTP operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify the FTP type and enter its view. |

type ftp |

N/A |

|

4. Specify the URL of the destination FTP server. |

url url |

By default, no URL is specified for the destination FTP server. Enter the URL in one of the following formats:
· ftp://host/filename.
· ftp://host:port/filename.
When you perform the get operation, the file name is required. |

|

5. (Optional.) Specify the source IP address of FTP request packets. |

source ip ip-address |

By default, the packets take the primary IP address of the output interface as their source IP address. The source IP address must be the IP address of a local interface, and the interface must be up. Otherwise, no FTP requests can be sent out. |

|

6. (Optional.) Specify the FTP operation type. |

operation { get | put } |

By default, the FTP operation type is get, which means obtaining files from the FTP server. |

|

7. Specify an FTP login username. |

username username |

By default, no FTP login username is configured. |

|

8. Specify an FTP login password. |

password { cipher | simple } string |

By default, no FTP login password is configured. |

|

9. (Optional.) Specify the name of a file to be transferred. |

filename file-name |

By default, no file is specified. This step is required if you perform the put operation. |

|

10. Set the data transmission mode. |

mode { active | passive } |

The default mode is active. |
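
The following sketch shows one way the FTP steps could be combined for a get operation. The administrator name, operation tag, server address, file name, username, and password are all hypothetical placeholders. Lines that start with # are explanatory annotations, not commands.

```text
system-view
nqa entry admin ftp1
type ftp
# The file name is required in the URL for a get operation.
url ftp://192.0.2.20/config.txt
operation get
# Hypothetical login credentials.
username nqauser
password simple Example123
# Optional: use passive mode instead of the default active mode.
mode passive
```

For a put operation, the table above indicates that operation put and the filename command would be used instead, and that the file must exist on the NQA client.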

Configuring the HTTP operation

An HTTP operation measures the time for the NQA client to obtain data from an HTTP server.

To configure an HTTP operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify the HTTP type and enter its view. |

type http |

N/A |

|

4. Specify the URL of the destination HTTP server. |

url url |

By default, no URL is specified for the destination HTTP server. Enter the URL in one of the following formats:
· http://host/resource.
· http://host:port/resource. |

|

5. Specify an HTTP login username. |

username username |

By default, no HTTP login username is specified. |

|

6. Specify an HTTP login password. |

password { cipher | simple } string |

By default, no HTTP login password is specified. |

|

7. (Optional.) Specify the source IP address of request packets. |

source ip ip-address |

By default, the packets take the primary IP address of the output interface as their source IP address. The source IP address must be the IP address of a local interface, and the interface must be up. Otherwise, no request packets can be sent out. |

|

8. (Optional.) Specify the HTTP version. |

By default, HTTP 1.0 is used. |

|

|

9. (Optional.) Specify the HTTP operation type. |

operation { get | post | raw } |

By default, the HTTP operation type is get, which means obtaining data from the HTTP server. If you set the HTTP operation type to raw, configure the content of the HTTP request to be sent to the HTTP server in raw request view. |

|

10. (Optional.) Enter raw request view. |

raw-request |

Every time you enter raw request view, the previously configured content of the HTTP request is removed. |

|

11. (Optional.) Specify the HTTP request content. |

Enter or paste the content. |

By default, no content is specified. This step is required for the raw operation. |

|

12. Save the input and return to HTTP operation view. |

quit |

N/A |
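
As a minimal sketch, a get-type HTTP operation needs only the first steps of the table. The administrator name, operation tag, server address, and resource path are hypothetical. Lines that start with # are explanatory annotations, not commands.

```text
system-view
nqa entry admin http1
type http
# URL in the http://host/resource format.
url http://192.0.2.30/index.htm
# Optional: get is already the default operation type.
operation get
```

A raw-type operation additionally requires entering raw request view, supplying the request content, and returning with quit, as listed in steps 10 through 12.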

Configuring the UDP jitter operation

|

|

CAUTION: To ensure successful UDP jitter operations and avoid affecting existing services, do not perform the operations on well-known ports from 1 to 1023. |

Jitter means inter-packet delay variance. A UDP jitter operation measures unidirectional and bidirectional jitter. The result helps you determine whether the network can carry jitter-sensitive services such as real-time voice and video.

The UDP jitter operation works as follows:

1. The NQA client sends UDP packets to the destination port.

2. The destination device time stamps each packet it receives, and then sends the packet back to the NQA client.

3. Upon receiving the responses, the NQA client calculates the jitter according to the timestamps.

The UDP jitter operation requires both the NQA server and the NQA client. Before you perform the UDP jitter operation, configure the UDP listening service on the NQA server. For more information about UDP listening service configuration, see "Configuring the NQA server."

Before starting the operation, make sure the network devices are time synchronized by using NTP. For more information about NTP, see "Configuring NTP."

To configure a UDP jitter operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify the UDP jitter type and enter its view. |

type udp-jitter |

N/A |

|

4. Specify the destination address of UDP packets. |

destination ip ip-address |

By default, no destination IP address is specified. The destination IP address must be the same as the IP address of the listening service on the NQA server. |

|

5. Specify the destination port of UDP packets. |

destination port port-number |

By default, no destination port number is specified. The destination port number must be the same as the port number of the listening service on the NQA server. |

|

6. (Optional.) Specify the source IP address for UDP packets. |

source ip ip-address |

By default, the packets take the primary IP address of the output interface as their source IP address. The source IP address must be the IP address of a local interface, and the interface must be up. Otherwise, no UDP packets can be sent out. |

|

7. (Optional.) Specify the source port number of UDP packets. |

source port port-number |

By default, no source port number is specified. |

|

8. (Optional.) Set the payload size for each UDP packet. |

data-size size |

The default setting is 100 bytes. |

|

9. (Optional.) Specify the payload fill string for UDP packets. |

data-fill string |

The default payload fill string is hexadecimal string 00010203040506070809. |

|

10. (Optional.) Set the number of UDP packets sent in one UDP jitter operation. |

probe packet-number packet-number |

The default setting is 10. |

|

11. (Optional.) Set the interval for sending UDP packets. |

probe packet-interval interval |

The default setting is 20 milliseconds. |

|

12. (Optional.) Specify how long the NQA client waits for a response from the server before it considers the response timed out. |

probe packet-timeout timeout |

The default setting is 3000 milliseconds. |

|

|

NOTE: Use the display nqa result or display nqa statistics command to verify the UDP jitter operation. The display nqa history command does not display the UDP jitter operation results or statistics. |
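
The UDP jitter steps on the NQA client can be sketched as follows. The sketch assumes that a UDP listening service already exists on the NQA server at the hypothetical address 192.0.2.10 and port 9000. The administrator name, operation tag, and probe values are also hypothetical. Lines that start with # are explanatory annotations, not commands.

```text
system-view
nqa entry admin udpj1
type udp-jitter
# Must match the IP address and port of the listening service on the NQA server.
# The port is chosen above the well-known range of 1 to 1023.
destination ip 192.0.2.10
destination port 9000
# Optional: send 20 packets per operation, 50 milliseconds apart.
probe packet-number 20
probe packet-interval 50
```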

Configuring the SNMP operation

The SNMP operation measures the time for the NQA client to get a response packet from an SNMP agent.

To configure the SNMP operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify the SNMP type and enter its view. |

type snmp |

N/A |

|

4. Specify the destination address of SNMP packets. |

destination ip ip-address |

By default, no destination IP address is specified. |

|

5. (Optional.) Specify the source port of SNMP packets. |

source port port-number |

By default, no source port number is specified. |

|

6. (Optional.) Specify the source IP address of SNMP packets. |

source ip ip-address |

By default, the packets take the primary IP address of the output interface as their source IP address. The source IP address must be the IP address of a local interface, and the interface must be up. Otherwise, no SNMP packets can be sent out. |

|

7. (Optional.) Specify the community name for the SNMP operation if the operation uses the SNMPv1 or SNMPv2c agent. |

community read { cipher | simple } community-name |

By default, the SNMP operation uses community name public. Make sure the specified community name is the same as the community name configured on the SNMP agent. |
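
A minimal sketch of the SNMP steps follows. The administrator name, operation tag, agent address, and community name are hypothetical. Lines that start with # are explanatory annotations, not commands.

```text
system-view
nqa entry admin snmp1
type snmp
# Address of the SNMP agent to query.
destination ip 192.0.2.40
# Optional: for an SNMPv1 or SNMPv2c agent, the community name must match
# the one configured on the agent. The default is public.
community read simple nqaread
```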

Configuring the TCP operation

The TCP operation measures the time for the NQA client to establish a TCP connection to a port on the NQA server.

The TCP operation requires both the NQA server and the NQA client. Before you perform a TCP operation, configure a TCP listening service on the NQA server. For more information about the TCP listening service configuration, see "Configuring the NQA server."

To configure the TCP operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify the TCP type and enter its view. |

type tcp |

N/A |

|

4. Specify the destination address of TCP packets. |

destination ip ip-address |

By default, no destination IP address is specified. The destination address must be the same as the IP address of the listening service configured on the NQA server. |

|

5. Specify the destination port of TCP packets. |

destination port port-number |

By default, no destination port number is configured. The destination port number must be the same as the port number of the listening service on the NQA server. |

|

6. (Optional.) Specify the source IP address of TCP packets. |

source ip ip-address |

By default, the packets take the primary IP address of the output interface as their source IP address. The source IP address must be the IP address of a local interface, and the interface must be up. Otherwise, no TCP packets can be sent out. |
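
The TCP steps on the NQA client can be sketched as follows, assuming a TCP listening service is already configured on the NQA server. The administrator name, operation tag, addresses, and port are hypothetical. Lines that start with # are explanatory annotations, not commands.

```text
system-view
nqa entry admin tcp1
type tcp
# Must match the IP address and port of the TCP listening service on the NQA server.
destination ip 192.0.2.10
destination port 9000
# Optional: the source address must belong to a local interface that is up.
source ip 192.0.2.1
```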

Configuring the UDP echo operation

The UDP echo operation measures the round-trip time between the client and a UDP port on the NQA server.

The UDP echo operation requires both the NQA server and the NQA client. Before you perform a UDP echo operation, configure a UDP listening service on the NQA server. For more information about the UDP listening service configuration, see "Configuring the NQA server."

To configure the UDP echo operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify the UDP echo type and enter its view. |

type udp-echo |

N/A |

|

4. Specify the destination address of UDP packets. |

destination ip ip-address |

By default, no destination IP address is specified. The destination address must be the same as the IP address of the listening service configured on the NQA server. |

|

5. Specify the destination port of UDP packets. |

destination port port-number |

By default, no destination port number is specified. The destination port number must be the same as the port number of the listening service on the NQA server. |

|

6. (Optional.) Set the payload size for each UDP packet. |

data-size size |

The default setting is 100 bytes. |

|

7. (Optional.) Specify the payload fill string for UDP packets. |

data-fill string |

The default payload fill string is hexadecimal string 00010203040506070809. |

|

8. (Optional.) Specify the source port of UDP packets. |

source port port-number |

By default, no source port number is specified. |

|

9. (Optional.) Specify the source IP address of UDP packets. |

source ip ip-address |

By default, the packets take the primary IP address of the output interface as their source IP address. The source IP address must be the IP address of a local interface, and the interface must be up. Otherwise, no UDP packets can be sent out. |
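
As a minimal sketch of the UDP echo steps, assuming a UDP listening service is already configured on the NQA server, the client side might look like this. The administrator name, operation tag, address, ports, and payload size are hypothetical. Lines that start with # are explanatory annotations, not commands.

```text
system-view
nqa entry admin udpe1
type udp-echo
# Must match the IP address and port of the UDP listening service on the NQA server.
destination ip 192.0.2.10
destination port 8000
# Optional: use a 64-byte payload instead of the default 100 bytes.
data-size 64
# Optional: fix the source port instead of leaving it unspecified.
source port 8001
```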

Configuring the UDP tracert operation

The UDP tracert operation determines the routing path from the source device to the destination device.

Before you configure the UDP tracert operation, perform the following tasks:

· Enable sending ICMP time exceeded messages on the intermediate devices between the source and destination devices. If the intermediate devices are H3C devices, use the ip ttl-expires enable command.

· Enable sending ICMP destination unreachable messages on the destination device. If the destination device is an H3C device, use the ip unreachables enable command.

For more information about the ip ttl-expires enable and ip unreachables enable commands, see Layer 3—IP Services Command Reference.

The UDP tracert operation is not supported in IPv6 networks. To determine the routing path that the IPv6 packets traverse from the source to the destination, use the tracert ipv6 command. For more information about the command, see Network Management and Monitoring Command Reference.

To configure the UDP tracert operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify the UDP tracert operation type and enter its view. |

type udp-tracert |

N/A |

|

4. Specify the destination device for the operation. |

· Specify the destination device by its host name:
· Specify the destination device by its IP address: |

By default, no destination IP address or host name is specified. |

|

5. (Optional.) Specify the destination port of UDP packets. |

destination port port-number |

By default, the destination port number is 33434. This port number must be an unused number on the destination device, so that the destination device can reply with ICMP port unreachable messages. |

|

6. (Optional.) Set the payload size for each UDP packet. |

data-size size |

The default setting is 100 bytes. |

|

7. (Optional.) Enable the no-fragmentation feature. |

no-fragment enable |

By default, the no-fragmentation feature is disabled. |

|

8. (Optional.) Set the maximum number of consecutive probe failures. |

max-failure times |

The default setting is 5. |

|

9. (Optional.) Set the TTL value for UDP packets in the first round of the UDP tracert operation. |

init-ttl value |

The default setting is 1. |

|

10. (Optional.) Specify an output interface for UDP packets. |

out interface interface-type interface-number |

By default, the output interface for UDP packets is not specified. The NQA client determines the output interface based on the routing table lookup. |

|

11. (Optional.) Specify the source port of UDP packets. |

source port port-number |

By default, no source port number is specified. |

|

12. (Optional.) Specify the source IP address of UDP packets. |

· Specify the IP address of the specified interface as the source IP address:
· Specify the source IP address: |

By default, the packets take the primary IP address of the output interface as their source IP address. If you execute the source ip and source interface commands multiple times, the most recent configuration takes effect. The specified source interface must be up. The source IP address must be the IP address of a local interface, and the local interface must be up. Otherwise, no probe packets can be sent out. |
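
The UDP tracert steps can be sketched as follows. The administrator name, operation tag, destination address, and numeric values are hypothetical. The destination ip form is an assumption borrowed from the other operation types in this chapter, because the table above does not show the command. Lines that start with # are explanatory annotations, not commands.

```text
system-view
nqa entry admin trace1
type udp-tracert
# Assumed IP address form of the destination command.
destination ip 192.0.2.10
# Optional: the port must be unused on the destination device so that it
# replies with ICMP port unreachable messages. 33434 is the default.
destination port 33434
# Optional: do not allow the probe packets to be fragmented.
no-fragment enable
# Optional: stop after 3 consecutive probe failures instead of the default 5.
max-failure 3
```

The sketch covers only the NQA client. The intermediate and destination devices still need to send ICMP time exceeded and destination unreachable messages, as described before the table.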

Configuring the voice operation

|

|

CAUTION: To ensure successful voice operations and avoid affecting existing services, do not perform the operations on well-known ports from 1 to 1023. |

The voice operation measures VoIP network performance.

The voice operation works as follows:

1. The NQA client sends voice packets at the configured sending interval to the destination device (NQA server).

The voice packets are of one of the following codec types:

· G.711 A-law.

· G.711 μ-law.

· G.729 A-law.

2. The destination device time stamps each voice packet it receives and sends it back to the source.

3. Upon receiving the packet, the source device calculates the jitter and one-way delay based on the timestamp.

The following parameters that reflect VoIP network performance can be calculated by using the metrics gathered by the voice operation:

· Calculated Planning Impairment Factor (ICPIF): Measures impairment to voice quality in a VoIP network. It is determined by packet loss and delay. A higher value represents lower service quality.

· Mean Opinion Score (MOS): A MOS value, in the range of 1 to 5, can be derived from the ICPIF value. A higher value represents higher service quality.

The evaluation of voice quality depends on users' tolerance. For users with a higher tolerance for degraded voice quality, use the advantage-factor command to set an advantage factor. When the system calculates the ICPIF value, it subtracts the advantage factor, which adjusts the ICPIF and MOS values used for voice quality evaluation.

The voice operation requires both the NQA server and the NQA client. Before you perform a voice operation, configure a UDP listening service on the NQA server. For more information about UDP listening service configuration, see "Configuring the NQA server."

The voice operation cannot repeat.

Before starting the operation, make sure the network devices are time synchronized by using NTP. For more information about NTP, see "Configuring NTP."

To configure the voice operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify the voice type and enter its view. |

type voice |

N/A |

|

4. Specify the destination address of voice packets. |

destination ip ip-address |

By default, no destination IP address is configured. The destination IP address must be the same as the IP address of the listening service on the NQA server. |

|

5. Specify the destination port of voice packets. |

destination port port-number |

By default, no destination port number is configured. The destination port number must be the same as the port number of the listening service on the NQA server. |

|

6. (Optional.) Specify the codec type. |

codec-type { g711a | g711u | g729a } |

By default, the codec type is G.711 A-law. |

|

7. (Optional.) Set the advantage factor for calculating MOS and ICPIF values. |

advantage-factor factor |

By default, the advantage factor is 0. |

|

8. (Optional.) Specify the source IP address of voice packets. |

source ip ip-address |

By default, the packets take the primary IP address of the output interface as their source IP address. The source IP address must be the IP address of a local interface, and the interface must be up. Otherwise, no voice packets can be sent out. |

|

9. (Optional.) Specify the source port number of voice packets. |

source port port-number |

By default, no source port number is specified. |

|

10. (Optional.) Set the payload size for each voice packet. |

data-size size |

By default, the voice packet size varies by codec type. The default packet size is 172 bytes for the G.711 A-law and G.711 μ-law codec types, and 32 bytes for the G.729 A-law codec type. |

|

11. (Optional.) Specify the payload fill string for voice packets. |

data-fill string |

The default payload fill string is hexadecimal string 00010203040506070809. |

|

12. (Optional.) Set the number of voice packets to be sent in a voice probe. |

probe packet-number packet-number |

The default setting is 1000. |

|

13. (Optional.) Set the interval for sending voice packets. |

probe packet-interval interval |

The default setting is 20 milliseconds. |

|

14. (Optional.) Specify how long the NQA client waits for a response from the server before it considers the response timed out. |

probe packet-timeout timeout |

The default setting is 5000 milliseconds. |

|

|

NOTE: Use the display nqa result or display nqa statistics command to verify the voice operation. The display nqa history command does not display the voice operation results or statistics. |
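
The voice steps on the NQA client can be sketched as follows, assuming a UDP listening service is already configured on the NQA server. The administrator name, operation tag, address, port, advantage factor, and packet count are hypothetical values chosen for illustration. Lines that start with # are explanatory annotations, not commands.

```text
system-view
nqa entry admin voice1
type voice
# Must match the IP address and port of the listening service on the NQA server.
# The port is chosen above the well-known range of 1 to 1023.
destination ip 192.0.2.10
destination port 9000
# Optional: use the G.729 codec type instead of the default G.711 A-law.
codec-type g729a
# Optional: subtract an advantage factor of 10 when calculating ICPIF.
advantage-factor 10
# Optional: send 500 voice packets per probe instead of the default 1000.
probe packet-number 500
```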

Configuring the DLSw operation

The DLSw operation measures the response time of a DLSw device.

To configure the DLSw operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify the DLSw type and enter its view. |

type dlsw |

N/A |

|

4. Specify the destination IP address of probe packets. |

destination ip ip-address |

By default, no destination IP address is specified. |

|

5. (Optional.) Specify the source IP address of probe packets. |

source ip ip-address |

By default, the packets take the primary IP address of the output interface as their source IP address. The source IP address must be the IP address of a local interface, and the interface must be up. Otherwise, no probe packets can be sent out. |
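
A minimal sketch of the DLSw steps follows. The administrator name, operation tag, and address of the DLSw device are hypothetical. Lines that start with # are explanatory annotations, not commands.

```text
system-view
nqa entry admin dlsw1
type dlsw
# Address of the DLSw device whose response time is measured.
destination ip 192.0.2.50
```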

Configuring the path jitter operation

The path jitter operation measures the jitter, negative jitter, and positive jitter from the NQA client to each hop on the path to the destination.

Before you configure the path jitter operation, perform the following tasks:

· Enable sending ICMP time exceeded messages on the intermediate devices between the source and destination devices. If the intermediate devices are H3C devices, use the ip ttl-expires enable command.

· Enable sending ICMP destination unreachable messages on the destination device. If the destination device is an H3C device, use the ip unreachables enable command.

For more information about the ip ttl-expires enable and ip unreachables enable commands, see Layer 3—IP Services Command Reference.

To configure the path jitter operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify the path jitter type and enter its view. |

type path-jitter |

N/A |

|

4. Specify the destination address of ICMP echo requests. |

destination ip ip-address |

By default, no destination IP address is specified. |

|

5. (Optional.) Set the payload size for each ICMP echo request. |

data-size size |

The default setting is 100 bytes. |

|

6. (Optional.) Specify the payload fill string for ICMP echo requests. |

data-fill string |

The default payload fill string is hexadecimal string 00010203040506070809. |

|

7. (Optional.) Specify the source IP address of ICMP echo requests. |

source ip ip-address |

By default, the packets take the primary IP address of the output interface as their source IP address. The source IP address must be the IP address of a local interface, and the interface must be up. Otherwise, no ICMP echo requests can be sent out. |

|

8. (Optional.) Set the number of ICMP echo requests to be sent in a path jitter operation. |

probe packet-number packet-number |

The default setting is 10. |

|

9. (Optional.) Set the interval for sending ICMP echo requests. |

probe packet-interval interval |

The default setting is 20 milliseconds. |

|

10. (Optional.) Specify how long the NQA client waits for a response from the server before it considers the response timed out. |

probe packet-timeout timeout |

The default setting is 3000 milliseconds. |

|

11. (Optional.) Specify an LSR path. |

lsr-path ip-address&<1-8> |

By default, no LSR path is specified. The path jitter operation uses tracert to detect the LSR path to the destination, and sends ICMP echo requests to each hop on the LSR path. |

|

12. (Optional.) Perform the path jitter operation only on the destination address. |

target-only |

By default, the path jitter operation is performed on each hop on the path to the destination. |
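
The path jitter steps can be sketched as follows. The administrator name, operation tag, destination address, and probe values are hypothetical. Lines that start with # are explanatory annotations, not commands.

```text
system-view
nqa entry admin pathj1
type path-jitter
destination ip 192.0.2.10
# Optional: send 20 ICMP echo requests per operation, 50 milliseconds apart.
probe packet-number 20
probe packet-interval 50
# Optional: measure only the destination address instead of every hop.
target-only
```

Omitting target-only keeps the default behavior, in which the operation is performed on each hop on the path to the destination.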

Configuring optional parameters for the NQA operation

Unless otherwise specified, the following optional parameters apply to all types of NQA operations.

The parameter settings take effect only on the current operation.

To configure optional parameters for an NQA operation:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify an NQA operation type and enter its view. |

type { dhcp | dlsw | dns | ftp | http | icmp-echo | icmp-jitter | path-jitter | snmp | tcp | udp-echo | udp-jitter | udp-tracert | voice } |

N/A |

|

4. Configure a description. |

description text |

By default, no description is configured. |

|

5. Set the interval at which the NQA operation repeats. |

frequency interval |

For a voice or path jitter operation, the default setting is 60000 milliseconds. For other types of operations, the default setting is 0 milliseconds, and only one operation is performed. If the operation is not completed when the interval expires, the next operation does not start. |

|

6. Specify the number of probes. |

probe count times |

By default: · In a UDP tracert operation, the NQA client performs three probes to each hop to the destination. · In other types of operations, the NQA client performs one probe to the destination per operation. This command is not available for the path jitter and voice operations. Each of these operations performs only one probe. |

|

7. Set the probe timeout time. |

probe timeout timeout |

The default setting is 3000 milliseconds. This command is not available for the ICMP jitter, path jitter, UDP jitter, or voice operations. |

|

8. Set the maximum number of hops that the probe packets can traverse. |

ttl value |

The default setting is 30 for probe packets of the UDP tracert operation and 20 for probe packets of other types of operations. This command is not available for the DHCP or path jitter operation. |

|

9. Set the ToS value in the IP header of probe packets. |

tos value |

The default setting is 0. |

|

10. Enable the routing table bypass feature. |

route-option bypass-route |

By default, the routing table bypass feature is disabled. This command is not available for the DHCP and path jitter operations. This command does not take effect if the destination address of the NQA operation is an IPv6 address. |

|

11. Specify the VPN instance where the operation is performed. |

vpn-instance vpn-instance-name |

By default, the operation is performed on the public network. |
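
Because several defaults in the preceding table depend on the operation type, it can help to see them side by side. The following Python sketch encodes only the defaults and restrictions stated in the Remarks column. The function name `optional_defaults` and the dictionary keys are hypothetical, and `None` is used here to mark a command that is not available for the operation type.

```python
# Operation type keywords as they appear in the type command.
ALL_TYPES = {
    "dhcp", "dlsw", "dns", "ftp", "http", "icmp-echo", "icmp-jitter",
    "path-jitter", "snmp", "tcp", "udp-echo", "udp-jitter", "udp-tracert",
    "voice",
}


def optional_defaults(op_type):
    """Return the documented defaults; None means 'command not available'."""
    if op_type not in ALL_TYPES:
        raise ValueError(f"unknown NQA operation type: {op_type}")

    # frequency: 60000 ms for voice and path jitter, otherwise 0 (run once).
    frequency_ms = 60000 if op_type in {"voice", "path-jitter"} else 0

    # probe count: unavailable for path jitter and voice, 3 per hop for
    # UDP tracert, 1 per operation otherwise.
    if op_type in {"path-jitter", "voice"}:
        probe_count = None
    elif op_type == "udp-tracert":
        probe_count = 3
    else:
        probe_count = 1

    # probe timeout: unavailable for the jitter-style and voice operations.
    no_timeout = {"icmp-jitter", "path-jitter", "udp-jitter", "voice"}
    probe_timeout_ms = None if op_type in no_timeout else 3000

    # ttl: unavailable for DHCP and path jitter, 30 for UDP tracert, else 20.
    if op_type in {"dhcp", "path-jitter"}:
        ttl = None
    else:
        ttl = 30 if op_type == "udp-tracert" else 20

    return {
        "frequency_ms": frequency_ms,
        "probe_count": probe_count,
        "probe_timeout_ms": probe_timeout_ms,
        "ttl": ttl,
        "tos": 0,
    }
```

For example, `optional_defaults("voice")` reports a 60000-millisecond frequency and marks both probe count and probe timeout as unavailable.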

Configuring the collaboration feature

Collaboration is implemented by associating a reaction entry of an NQA operation with a track entry. The reaction entry monitors the NQA operation. If the number of operation failures reaches the specified threshold, the configured action is triggered.

To configure the collaboration feature:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify an NQA operation type and enter its view. |

type { dhcp | dlsw | dns | ftp | http | icmp-echo | snmp | tcp | udp-echo } |

The collaboration feature is not available for the ICMP jitter, path jitter, UDP tracert, UDP jitter, or voice operations. |

|

4. Configure a reaction entry. |

reaction item-number checked-element probe-fail threshold-type consecutive consecutive-occurrences action-type trigger-only |

By default, no reaction entry is configured. You cannot modify the content of an existing reaction entry. |

|

5. Return to system view. |

quit |

N/A |

|

6. Associate Track with NQA. |

See High Availability Configuration Guide. |

N/A |

|

7. Associate Track with an application module. |

See High Availability Configuration Guide. |

N/A |

Configuring threshold monitoring

This feature allows you to monitor the running status of an NQA operation.

Threshold types

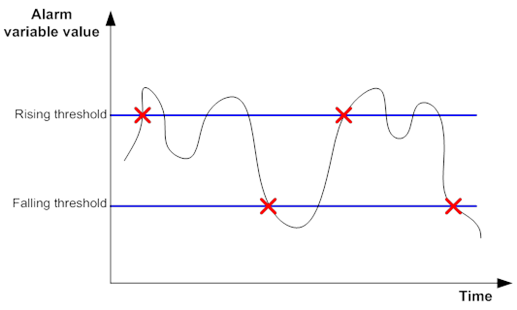

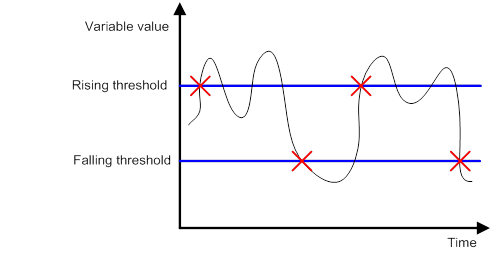

An NQA operation supports the following threshold types:

· average—If the average value for the monitored performance metric either exceeds the upper threshold or goes below the lower threshold, a threshold violation occurs.

· accumulate—If the total number of times that the monitored performance metric is out of the specified value range reaches or exceeds the specified threshold, a threshold violation occurs.

· consecutive—If the number of consecutive times that the monitored performance metric is out of the specified value range reaches or exceeds the specified threshold, a threshold violation occurs.

Threshold violations for the average or accumulate threshold type are determined on a per-operation basis. Threshold violations for the consecutive type are determined from the time the NQA operation starts.
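
The three threshold types differ only in how they aggregate out-of-range samples. The following Python sketch illustrates the rules above under stated assumptions. It is not the device implementation. It assumes the value range is the closed interval between the lower and upper thresholds. All function names are hypothetical. For the average and accumulate types, pass the samples of one operation. For the consecutive type, pass all samples since the operation started.

```python
from statistics import mean


def out_of_range(value, lower, upper):
    """True if the value is below the lower or above the upper threshold."""
    return value < lower or value > upper


def average_violated(samples, lower, upper):
    """average: the mean of the samples falls outside the thresholds.

    Raises statistics.StatisticsError if samples is empty.
    """
    return out_of_range(mean(samples), lower, upper)


def accumulate_violated(samples, lower, upper, occurrences):
    """accumulate: the total count of out-of-range samples reaches the limit."""
    hits = sum(1 for s in samples if out_of_range(s, lower, upper))
    return hits >= occurrences


def consecutive_violated(samples, lower, upper, occurrences):
    """consecutive: an unbroken run of out-of-range samples reaches the limit."""
    run = 0
    for s in samples:
        run = run + 1 if out_of_range(s, lower, upper) else 0
        if run >= occurrences:
            return True
    return False
```

With round-trip times of 10, 60, 12, 70, 80, and 11 milliseconds and thresholds of 0 and 50, the average (40.5) is within range, the accumulate type with a limit of 3 is violated, and the consecutive type is violated for a limit of 2 but not for a limit of 3.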

Triggered actions

The following actions might be triggered:

· none—NQA displays results only on the terminal screen. It does not send traps to the NMS.

· trap-only—NQA displays results on the terminal screen, and meanwhile it sends traps to the NMS.

· trigger-only—NQA displays results on the terminal screen, and meanwhile triggers other modules for collaboration.

The DNS operation does not support the action of sending trap messages.

Reaction entry

In a reaction entry, configure a monitored element, a threshold type, and an action to be triggered to implement threshold monitoring.

The state of a reaction entry can be invalid, over-threshold, or below-threshold.

· Before an NQA operation starts, the reaction entry is in the invalid state.

· If the threshold is violated, the state of the entry is set to over-threshold. Otherwise, the state of the entry is set to below-threshold.

If the action is trap-only for a reaction entry, a trap message is sent to the NMS when the state of the entry changes.
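
The state handling described above can be pictured as a small state machine. The following Python sketch is a minimal illustration. The class `ReactionEntry` and its attributes are hypothetical. The sketch also assumes that the first transition out of the invalid state counts as a state change, which this guide does not state explicitly.

```python
class ReactionEntry:
    """Toy model of a reaction entry's state (not the device implementation)."""

    INVALID = "invalid"
    OVER = "over-threshold"
    BELOW = "below-threshold"

    def __init__(self, action="trap-only"):
        self.state = self.INVALID   # state before the operation starts
        self.action = action        # "none", "trap-only", or "trigger-only"
        self.traps = []             # states reported to the NMS (simulated)

    def update(self, violated):
        """Apply one threshold check result; return True if the state changed."""
        new_state = self.OVER if violated else self.BELOW
        changed = new_state != self.state
        self.state = new_state
        # Assumption: leaving the invalid state is treated as a state change.
        if changed and self.action == "trap-only":
            self.traps.append(new_state)
        return changed
```

Repeated results of the same kind leave the state unchanged, so in this model a trap is recorded only when the entry moves between states.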

Configuration procedure

Before you configure threshold monitoring, configure the destination address of the trap messages by using the snmp-agent target-host command. For more information about the command, see Network Management and Monitoring Command Reference.

To configure threshold monitoring:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify an NQA operation type and enter its view. |

type { dhcp | dlsw | dns | ftp | http | icmp-echo | icmp-jitter | snmp | tcp | udp-echo | udp-jitter | udp-tracert | voice } |

The threshold monitoring feature is not available for path jitter operations. |

|

4. Enable sending traps to the NMS when specific conditions are met. |

reaction trap { path-change | probe-failure consecutive-probe-failures | test-complete | test-failure [ accumulate-probe-failures ] } |

By default, no traps are sent to the NMS. The ICMP jitter, UDP jitter, and voice operations support only the test-complete keyword. The following parameters are not available for the UDP tracert operation: · The probe-failure consecutive-probe-failures option. · The accumulate-probe-failures argument. |

|

5. Configure threshold monitoring. |

· Monitor the operation duration (not supported in the ICMP jitter, UDP jitter, UDP tracert, or voice operations):

· Monitor failure times (not supported in the ICMP jitter, UDP jitter, UDP tracert, or voice operations):

· Monitor the round-trip time (only for the ICMP jitter, UDP jitter, and voice operations):

· Monitor packet loss (only for the ICMP jitter, UDP jitter, and voice operations):

· Monitor the one-way jitter (only for the ICMP jitter, UDP jitter, and voice operations):

· Monitor the one-way delay (only for the ICMP jitter, UDP jitter, and voice operations):

· Monitor the ICPIF value (only for the voice operation):

· Monitor the MOS value (only for the voice operation): |

N/A |
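
The availability notes in step 5 can be summarized per operation type. The following Python sketch is a lookup built only from those notes. The function name `monitorable_elements` is hypothetical, and the element names are the descriptive labels from the table, not CLI keywords.

```python
JITTER_TYPES = {"icmp-jitter", "udp-jitter", "voice"}
# Operation duration and failure times are not supported for these types.
NO_DURATION_OR_FAILURE = JITTER_TYPES | {"udp-tracert"}
# Types accepted in step 3 of the procedure (path jitter is excluded).
MONITORED_TYPES = {
    "dhcp", "dlsw", "dns", "ftp", "http", "icmp-echo", "icmp-jitter",
    "snmp", "tcp", "udp-echo", "udp-jitter", "udp-tracert", "voice",
}


def monitorable_elements(op_type):
    """Return the set of elements that step 5 allows for an operation type."""
    if op_type == "path-jitter":
        return set()  # threshold monitoring is not available at all
    if op_type not in MONITORED_TYPES:
        raise ValueError(f"unknown NQA operation type: {op_type}")

    elements = set()
    if op_type not in NO_DURATION_OR_FAILURE:
        elements |= {"operation duration", "failure times"}
    if op_type in JITTER_TYPES:
        elements |= {"round-trip time", "packet loss",
                     "one-way jitter", "one-way delay"}
    if op_type == "voice":
        elements |= {"ICPIF", "MOS"}
    return elements
```

Note that the lookup returns an empty set for `udp-tracert`: every item in step 5 excludes it, which leaves the reaction trap command in step 4 as its only documented option.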

Configuring the NQA statistics collection feature

NQA groups the statistics collected within the same collection interval into a statistics group. To display information about the statistics groups, use the display nqa statistics command.

If you use the frequency command to set the interval to 0 milliseconds for an NQA operation, NQA does not generate any statistics group for the operation.

To configure the NQA statistics collection feature:

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify an NQA operation type and enter its view. |

type { dhcp | dlsw | dns | ftp | http | icmp-echo | icmp-jitter | path-jitter | snmp | tcp | udp-echo | udp-jitter | voice } |

The NQA statistics collection feature is not available for UDP tracert operations. |

|

4. (Optional.) Set the interval for collecting the statistics. |

statistics interval interval |

The default setting is 60 minutes. |

|

5. (Optional.) Set the maximum number of statistics groups that can be saved. |

statistics max-group number |

The default setting is two groups. To disable the NQA statistics collection feature, set the maximum number to 0. When the maximum number of statistics groups is reached, the oldest statistics group is deleted to make room for a new one. |

|

6. (Optional.) Set the hold time of statistics groups. |

statistics hold-time hold-time |

The default setting is 120 minutes. A statistics group is deleted when its hold time expires. |
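
The retention rules in the preceding table (maximum number of groups and hold time) interact, so a small model may help. The following Python sketch is an illustration only. The class `StatisticsGroups` is hypothetical, time is passed in as plain minutes, and the sketch assumes the hold time is counted from the moment a group is saved.

```python
from collections import deque


class StatisticsGroups:
    """Toy model of statistics group retention (not the device implementation)."""

    def __init__(self, max_groups=2, hold_time_min=120):
        self.max_groups = max_groups        # statistics max-group (0 disables)
        self.hold_time_min = hold_time_min  # statistics hold-time
        self._groups = deque()              # (saved_at_min, data), oldest first

    def expire(self, now_min):
        """Delete groups whose hold time has expired."""
        while self._groups and now_min - self._groups[0][0] >= self.hold_time_min:
            self._groups.popleft()

    def add(self, now_min, data):
        """Save a new group, deleting the oldest one if the limit is reached."""
        self.expire(now_min)
        if self.max_groups == 0:
            return  # statistics collection is disabled
        if len(self._groups) >= self.max_groups:
            self._groups.popleft()
        self._groups.append((now_min, data))

    def groups(self):
        """Return the saved group data, oldest first."""
        return [data for _, data in self._groups]
```

With a limit of two groups, saving a third group drops the first. With the default hold time, a group saved at minute 0 is gone by minute 120 even if no new group arrives.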

Configuring the saving of NQA history records

|

Step |

Command |

Remarks |

|

1. Enter system view. |

system-view |

N/A |

|

2. Create an NQA operation and enter NQA operation view. |

nqa entry admin-name operation-tag |

By default, no NQA operations exist. |

|

3. Specify an NQA operation type and enter its view. |

type { dhcp | dlsw | dns | ftp | http | icmp-echo | snmp | tcp | udp-echo | udp-tracert } |

The history record saving feature is not available for the ICMP jitter, UDP jitter, path jitter, or voice operations. |

|

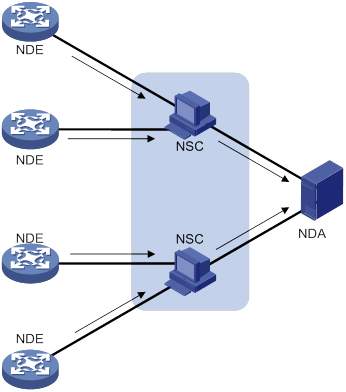

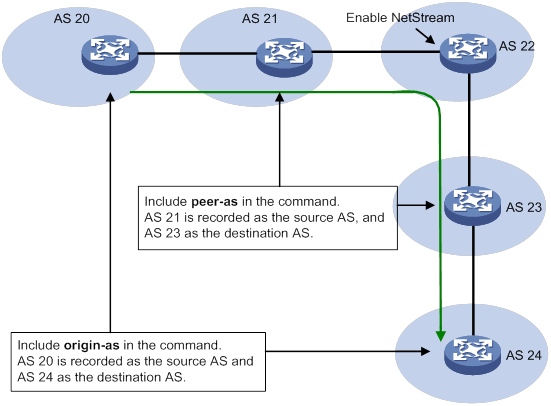

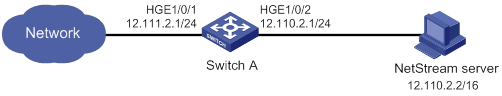

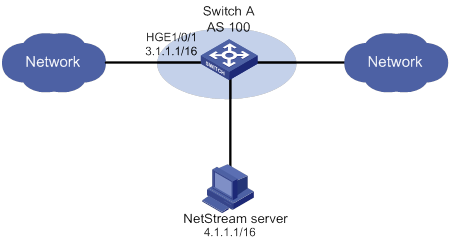

4. Enable the saving of history records for the NQA operation. |